Hi, thanks for this repo :-D

When I run demo.py, I get the following error:

python3 demo.py --output /home/ws/Desktop/ --config-file configs/coco-instance/yolomask.yaml --input /home/ws/data/dataset/nets_kinneret_only24_2/images/0_2.jpg --opts MODEL.WEIGHTS output/coco_yolomask/model_final.pth

Install mish-cuda to speed up training and inference. More importantly, replace the naive Mish with MishCuda will give a ~1.5G memory saving during training.

[05/08 10:45:50 detectron2]: Arguments: Namespace(confidence_threshold=0.21, config_file='configs/coco-instance/yolomask.yaml', input='/home/ws/data/dataset/nets_kinneret_only24_2/images/0_2.jpg', nms_threshold=0.6, opts=['MODEL.WEIGHTS', 'output/coco_yolomask/model_final.pth'], output='/home/ws/Desktop/', video_input=None, webcam=False)

10:45:50 05.08 INFO yolomask.py:86]: YOLO.ANCHORS: [[142, 110], [192, 243], [459, 401], [36, 75], [76, 55], [72, 146], [12, 16], [19, 36], [40, 28]]

10:45:50 05.08 INFO yolomask.py:90]: backboneshape: [64, 128, 256, 512], size_divisibility: 32

[[142, 110], [192, 243], [459, 401], [36, 75], [76, 55], [72, 146], [12, 16], [19, 36], [40, 28]]

/home/ws/.local/lib/python3.6/site-packages/torch/functional.py:445: UserWarning: torch.meshgrid: in an upcoming release, it will be required to pass the indexing argument. (Triggered internally at ../aten/src/ATen/native/TensorShape.cpp:2157.)

return _VF.meshgrid(tensors, **kwargs) # type: ignore[attr-defined]

[05/08 10:45:52 fvcore.common.checkpoint]: [Checkpointer] Loading from output/coco_yolomask/model_final.pth ...

[05/08 10:45:53 d2.checkpoint.c2_model_loading]: Following weights matched with model:

| Names in Model | Names in Checkpoint | Shapes |

|:--------------------------------------------------|:------------------------------------------------------------------------------------------------------|:-------------------------------|

| backbone.dark2.0.bn.* | backbone.dark2.0.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| backbone.dark2.0.conv.weight | backbone.dark2.0.conv.weight

| (64, 32, 3, 3) |

| backbone.dark2.1.conv1.bn.* | backbone.dark2.1.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (32,) () (32,) (32,) (32,) |

| backbone.dark2.1.conv1.conv.weight | backbone.dark2.1.conv1.conv.weight

| (32, 64, 1, 1) |

| backbone.dark2.1.conv2.bn.* | backbone.dark2.1.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (32,) () (32,) (32,) (32,) |

| backbone.dark2.1.conv2.conv.weight | backbone.dark2.1.conv2.conv.weight

| (32, 64, 1, 1) |

| backbone.dark2.1.conv3.bn.* | backbone.dark2.1.conv3.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| backbone.dark2.1.conv3.conv.weight | backbone.dark2.1.conv3.conv.weight

| (64, 64, 1, 1) |

| backbone.dark2.1.m.0.conv1.bn.* | backbone.dark2.1.m.0.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (32,) () (32,) (32,) (32,) |

| backbone.dark2.1.m.0.conv1.conv.weight | backbone.dark2.1.m.0.conv1.conv.weight

| (32, 32, 1, 1) |

| backbone.dark2.1.m.0.conv2.bn.* | backbone.dark2.1.m.0.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (32,) () (32,) (32,) (32,) |

| backbone.dark2.1.m.0.conv2.conv.weight | backbone.dark2.1.m.0.conv2.conv.weight

| (32, 32, 3, 3) |

| backbone.dark3.0.bn.* | backbone.dark3.0.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| backbone.dark3.0.conv.weight | backbone.dark3.0.conv.weight

| (128, 64, 3, 3) |

| backbone.dark3.1.conv1.bn.* | backbone.dark3.1.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| backbone.dark3.1.conv1.conv.weight | backbone.dark3.1.conv1.conv.weight

| (64, 128, 1, 1) |

| backbone.dark3.1.conv2.bn.* | backbone.dark3.1.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| backbone.dark3.1.conv2.conv.weight | backbone.dark3.1.conv2.conv.weight

| (64, 128, 1, 1) |

| backbone.dark3.1.conv3.bn.* | backbone.dark3.1.conv3.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| backbone.dark3.1.conv3.conv.weight | backbone.dark3.1.conv3.conv.weight

| (128, 128, 1, 1) |

| backbone.dark3.1.m.0.conv1.bn.* | backbone.dark3.1.m.0.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| backbone.dark3.1.m.0.conv1.conv.weight | backbone.dark3.1.m.0.conv1.conv.weight

| (64, 64, 1, 1) |

| backbone.dark3.1.m.0.conv2.bn.* | backbone.dark3.1.m.0.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| backbone.dark3.1.m.0.conv2.conv.weight | backbone.dark3.1.m.0.conv2.conv.weight

| (64, 64, 3, 3) |

| backbone.dark3.1.m.1.conv1.bn.* | backbone.dark3.1.m.1.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| backbone.dark3.1.m.1.conv1.conv.weight | backbone.dark3.1.m.1.conv1.conv.weight

| (64, 64, 1, 1) |

| backbone.dark3.1.m.1.conv2.bn.* | backbone.dark3.1.m.1.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| backbone.dark3.1.m.1.conv2.conv.weight | backbone.dark3.1.m.1.conv2.conv.weight

| (64, 64, 3, 3) |

| backbone.dark3.1.m.2.conv1.bn.* | backbone.dark3.1.m.2.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| backbone.dark3.1.m.2.conv1.conv.weight | backbone.dark3.1.m.2.conv1.conv.weight

| (64, 64, 1, 1) |

| backbone.dark3.1.m.2.conv2.bn.* | backbone.dark3.1.m.2.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| backbone.dark3.1.m.2.conv2.conv.weight | backbone.dark3.1.m.2.conv2.conv.weight

| (64, 64, 3, 3) |

| backbone.dark4.0.bn.* | backbone.dark4.0.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| backbone.dark4.0.conv.weight | backbone.dark4.0.conv.weight

| (256, 128, 3, 3) |

| backbone.dark4.1.conv1.bn.* | backbone.dark4.1.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| backbone.dark4.1.conv1.conv.weight | backbone.dark4.1.conv1.conv.weight

| (128, 256, 1, 1) |

| backbone.dark4.1.conv2.bn.* | backbone.dark4.1.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| backbone.dark4.1.conv2.conv.weight | backbone.dark4.1.conv2.conv.weight

| (128, 256, 1, 1) |

| backbone.dark4.1.conv3.bn.* | backbone.dark4.1.conv3.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| backbone.dark4.1.conv3.conv.weight | backbone.dark4.1.conv3.conv.weight

| (256, 256, 1, 1) |

| backbone.dark4.1.m.0.conv1.bn.* | backbone.dark4.1.m.0.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| backbone.dark4.1.m.0.conv1.conv.weight | backbone.dark4.1.m.0.conv1.conv.weight

| (128, 128, 1, 1) |

| backbone.dark4.1.m.0.conv2.bn.* | backbone.dark4.1.m.0.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| backbone.dark4.1.m.0.conv2.conv.weight | backbone.dark4.1.m.0.conv2.conv.weight

| (128, 128, 3, 3) |

| backbone.dark4.1.m.1.conv1.bn.* | backbone.dark4.1.m.1.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| backbone.dark4.1.m.1.conv1.conv.weight | backbone.dark4.1.m.1.conv1.conv.weight

| (128, 128, 1, 1) |

| backbone.dark4.1.m.1.conv2.bn.* | backbone.dark4.1.m.1.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| backbone.dark4.1.m.1.conv2.conv.weight | backbone.dark4.1.m.1.conv2.conv.weight

| (128, 128, 3, 3) |

| backbone.dark4.1.m.2.conv1.bn.* | backbone.dark4.1.m.2.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| backbone.dark4.1.m.2.conv1.conv.weight | backbone.dark4.1.m.2.conv1.conv.weight

| (128, 128, 1, 1) |

| backbone.dark4.1.m.2.conv2.bn.* | backbone.dark4.1.m.2.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| backbone.dark4.1.m.2.conv2.conv.weight | backbone.dark4.1.m.2.conv2.conv.weight

| (128, 128, 3, 3) |

| backbone.dark5.0.bn.* | backbone.dark5.0.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (512,) () (512,) (512,) (512,) |

| backbone.dark5.0.conv.weight | backbone.dark5.0.conv.weight

| (512, 256, 3, 3) |

| backbone.dark5.1.conv1.bn.* | backbone.dark5.1.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| backbone.dark5.1.conv1.conv.weight | backbone.dark5.1.conv1.conv.weight

| (256, 512, 1, 1) |

| backbone.dark5.1.conv2.bn.* | backbone.dark5.1.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (512,) () (512,) (512,) (512,) |

| backbone.dark5.1.conv2.conv.weight | backbone.dark5.1.conv2.conv.weight

| (512, 1024, 1, 1) |

| backbone.dark5.2.conv1.bn.* | backbone.dark5.2.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| backbone.dark5.2.conv1.conv.weight | backbone.dark5.2.conv1.conv.weight

| (256, 512, 1, 1) |

| backbone.dark5.2.conv2.bn.* | backbone.dark5.2.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| backbone.dark5.2.conv2.conv.weight | backbone.dark5.2.conv2.conv.weight

| (256, 512, 1, 1) |

| backbone.dark5.2.conv3.bn.* | backbone.dark5.2.conv3.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (512,) () (512,) (512,) (512,) |

| backbone.dark5.2.conv3.conv.weight | backbone.dark5.2.conv3.conv.weight

| (512, 512, 1, 1) |

| backbone.dark5.2.m.0.conv1.bn.* | backbone.dark5.2.m.0.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| backbone.dark5.2.m.0.conv1.conv.weight | backbone.dark5.2.m.0.conv1.conv.weight

| (256, 256, 1, 1) |

| backbone.dark5.2.m.0.conv2.bn.* | backbone.dark5.2.m.0.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| backbone.dark5.2.m.0.conv2.conv.weight | backbone.dark5.2.m.0.conv2.conv.weight

| (256, 256, 3, 3) |

| backbone.stem.conv.bn.* | backbone.stem.conv.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (32,) () (32,) (32,) (32,) |

| backbone.stem.conv.conv.weight | backbone.stem.conv.conv.weight

| (32, 12, 3, 3) |

| m.0.* | m.0.{bias,weight}

| (255,) (255,512,1,1) |

| m.1.* | m.1.{bias,weight}

| (255,) (255,256,1,1) |

| m.2.* | m.2.{bias,weight}

| (255,) (255,128,1,1) |

| neck.C3_n3.conv1.bn.* | neck.C3_n3.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| neck.C3_n3.conv1.conv.weight | neck.C3_n3.conv1.conv.weight

| (128, 256, 1, 1) |

| neck.C3_n3.conv2.bn.* | neck.C3_n3.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| neck.C3_n3.conv2.conv.weight | neck.C3_n3.conv2.conv.weight

| (128, 256, 1, 1) |

| neck.C3_n3.conv3.bn.* | neck.C3_n3.conv3.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| neck.C3_n3.conv3.conv.weight | neck.C3_n3.conv3.conv.weight

| (256, 256, 1, 1) |

| neck.C3_n3.m.0.conv1.bn.* | neck.C3_n3.m.0.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| neck.C3_n3.m.0.conv1.conv.weight | neck.C3_n3.m.0.conv1.conv.weight

| (128, 128, 1, 1) |

| neck.C3_n3.m.0.conv2.bn.* | neck.C3_n3.m.0.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| neck.C3_n3.m.0.conv2.conv.weight | neck.C3_n3.m.0.conv2.conv.weight

| (128, 128, 3, 3) |

| neck.C3_n4.conv1.bn.* | neck.C3_n4.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| neck.C3_n4.conv1.conv.weight | neck.C3_n4.conv1.conv.weight

| (256, 512, 1, 1) |

| neck.C3_n4.conv2.bn.* | neck.C3_n4.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| neck.C3_n4.conv2.conv.weight | neck.C3_n4.conv2.conv.weight

| (256, 512, 1, 1) |

| neck.C3_n4.conv3.bn.* | neck.C3_n4.conv3.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (512,) () (512,) (512,) (512,) |

| neck.C3_n4.conv3.conv.weight | neck.C3_n4.conv3.conv.weight

| (512, 512, 1, 1) |

| neck.C3_n4.m.0.conv1.bn.* | neck.C3_n4.m.0.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| neck.C3_n4.m.0.conv1.conv.weight | neck.C3_n4.m.0.conv1.conv.weight

| (256, 256, 1, 1) |

| neck.C3_n4.m.0.conv2.bn.* | neck.C3_n4.m.0.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| neck.C3_n4.m.0.conv2.conv.weight | neck.C3_n4.m.0.conv2.conv.weight

| (256, 256, 3, 3) |

| neck.C3_p3.conv1.bn.* | neck.C3_p3.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| neck.C3_p3.conv1.conv.weight | neck.C3_p3.conv1.conv.weight

| (64, 256, 1, 1) |

| neck.C3_p3.conv2.bn.* | neck.C3_p3.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| neck.C3_p3.conv2.conv.weight | neck.C3_p3.conv2.conv.weight

| (64, 256, 1, 1) |

| neck.C3_p3.conv3.bn.* | neck.C3_p3.conv3.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| neck.C3_p3.conv3.conv.weight | neck.C3_p3.conv3.conv.weight

| (128, 128, 1, 1) |

| neck.C3_p3.m.0.conv1.bn.* | neck.C3_p3.m.0.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| neck.C3_p3.m.0.conv1.conv.weight | neck.C3_p3.m.0.conv1.conv.weight

| (64, 64, 1, 1) |

| neck.C3_p3.m.0.conv2.bn.* | neck.C3_p3.m.0.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| neck.C3_p3.m.0.conv2.conv.weight | neck.C3_p3.m.0.conv2.conv.weight

| (64, 64, 3, 3) |

| neck.C3_p4.conv1.bn.* | neck.C3_p4.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| neck.C3_p4.conv1.conv.weight | neck.C3_p4.conv1.conv.weight

| (128, 512, 1, 1) |

| neck.C3_p4.conv2.bn.* | neck.C3_p4.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| neck.C3_p4.conv2.conv.weight | neck.C3_p4.conv2.conv.weight

| (128, 512, 1, 1) |

| neck.C3_p4.conv3.bn.* | neck.C3_p4.conv3.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| neck.C3_p4.conv3.conv.weight | neck.C3_p4.conv3.conv.weight

| (256, 256, 1, 1) |

| neck.C3_p4.m.0.conv1.bn.* | neck.C3_p4.m.0.conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| neck.C3_p4.m.0.conv1.conv.weight | neck.C3_p4.m.0.conv1.conv.weight

| (128, 128, 1, 1) |

| neck.C3_p4.m.0.conv2.bn.* | neck.C3_p4.m.0.conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| neck.C3_p4.m.0.conv2.conv.weight | neck.C3_p4.m.0.conv2.conv.weight

| (128, 128, 3, 3) |

| neck.bu_conv1.bn.* | neck.bu_conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| neck.bu_conv1.conv.weight | neck.bu_conv1.conv.weight

| (256, 256, 3, 3) |

| neck.bu_conv2.bn.* | neck.bu_conv2.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| neck.bu_conv2.conv.weight | neck.bu_conv2.conv.weight

| (128, 128, 3, 3) |

| neck.lateral_conv0.bn.* | neck.lateral_conv0.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| neck.lateral_conv0.conv.weight | neck.lateral_conv0.conv.weight

| (256, 512, 1, 1) |

| neck.reduce_conv1.bn.* | neck.reduce_conv1.bn.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| neck.reduce_conv1.conv.weight | neck.reduce_conv1.conv.weight

| (128, 256, 1, 1) |

| orien_head.neck_orien.0.conv_block.0.weight | orien_head.neck_orien.0.conv_block.0.weight

| (128, 256, 1, 1) |

| orien_head.neck_orien.0.conv_block.1.* | orien_head.neck_orien.0.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| orien_head.neck_orien.1.conv_block.0.weight | orien_head.neck_orien.1.conv_block.0.weight

| (256, 128, 3, 3) |

| orien_head.neck_orien.1.conv_block.1.* | orien_head.neck_orien.1.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| orien_head.neck_orien.2.conv_block.0.weight | orien_head.neck_orien.2.conv_block.0.weight

| (128, 256, 1, 1) |

| orien_head.neck_orien.2.conv_block.1.* | orien_head.neck_orien.2.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| orien_head.neck_orien.3.conv_block.0.weight | orien_head.neck_orien.3.conv_block.0.weight

| (256, 128, 3, 3) |

| orien_head.neck_orien.3.conv_block.1.* | orien_head.neck_orien.3.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| orien_head.neck_orien.4.conv_block.0.weight | orien_head.neck_orien.4.conv_block.0.weight

| (128, 256, 1, 1) |

| orien_head.neck_orien.4.conv_block.1.* | orien_head.neck_orien.4.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| orien_head.orien_m.0.conv_block.0.weight | orien_head.orien_m.0.conv_block.0.weight

| (256, 128, 3, 3) |

| orien_head.orien_m.0.conv_block.1.* | orien_head.orien_m.0.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| orien_head.orien_m.1.conv_block.0.weight | orien_head.orien_m.1.conv_block.0.weight

| (128, 256, 1, 1) |

| orien_head.orien_m.1.conv_block.1.* | orien_head.orien_m.1.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| orien_head.orien_m.2.conv_block.0.weight | orien_head.orien_m.2.conv_block.0.weight

| (256, 128, 3, 3) |

| orien_head.orien_m.2.conv_block.1.* | orien_head.orien_m.2.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| orien_head.orien_m.3.conv_block.0.weight | orien_head.orien_m.3.conv_block.0.weight

| (128, 256, 1, 1) |

| orien_head.orien_m.3.conv_block.1.* | orien_head.orien_m.3.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (128,) () (128,) (128,) (128,) |

| orien_head.orien_m.4.conv_block.0.weight | orien_head.orien_m.4.conv_block.0.weight

| (256, 128, 3, 3) |

| orien_head.orien_m.4.conv_block.1.* | orien_head.orien_m.4.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (256,) () (256,) (256,) (256,) |

| orien_head.orien_m.5.* | orien_head.orien_m.5.{bias,weight}

| (18,) (18,256,1,1) |

| orien_head.up_levels_2to5.0.conv_block.0.weight | orien_head.up_levels_2to5.0.conv_block.0.weight

| (64, 64, 1, 1) |

| orien_head.up_levels_2to5.0.conv_block.1.* | orien_head.up_levels_2to5.0.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| orien_head.up_levels_2to5.1.0.conv_block.0.weight | orien_head.up_levels_2to5.1.0.conv_block.0.weight

| (64, 128, 1, 1) |

| orien_head.up_levels_2to5.1.0.conv_block.1.* | orien_head.up_levels_2to5.1.0.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| orien_head.up_levels_2to5.2.0.conv_block.0.weight | orien_head.up_levels_2to5.2.0.conv_block.0.weight

| (64, 256, 1, 1) |

| orien_head.up_levels_2to5.2.0.conv_block.1.* | orien_head.up_levels_2to5.2.0.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

| orien_head.up_levels_2to5.3.0.conv_block.0.weight | orien_head.up_levels_2to5.3.0.conv_block.0.weight

| (64, 512, 1, 1) |

| orien_head.up_levels_2to5.3.0.conv_block.1.* | orien_head.up_levels_2to5.3.0.conv_block.1.{bias,num_batches_tracked,running_mean,running_var,weight} | (64,) () (64,) (64,) (64,) |

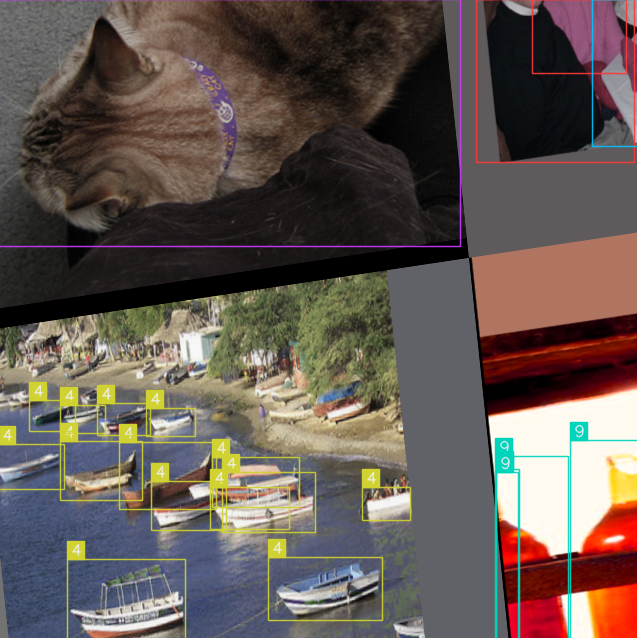

416 416 600

confidence thresh: 0.21

image after transform: (416, 416, 3)

cost: 0.02672123908996582, fps: 37.42341425983922

Traceback (most recent call last):

File "demo.py", line 211, in <module>

res = vis_res_fast(res, img, class_names, colors, conf_thresh)

File "demo.py", line 146, in vis_res_fast

img, clss, bit_masks, force_colors=None, draw_contours=False

File "/home/ws/.local/lib/python3.6/site-packages/alfred/vis/image/mask.py", line 302, in vis_bitmasks_with_classes

txt = f'{class_names[classes[i]]}'

TypeError: list indices must be integers or slices, not numpy.float32

I think this happens because of these lines:

scores = ins.scores.cpu().numpy()
clss = ins.pred_classes.cpu().numpy()

This makes clss a NumPy array (of float32 values, judging by the traceback) rather than a list of ints, so its elements cannot be used to index class_names.

Am I right? How does it work for you?
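Here is a minimal sketch of what I mean, outside of the repo. The `class_names` list and the float32 array are made-up stand-ins for the real values, and casting with `astype` is only one possible workaround, not necessarily the fix the maintainers would choose:

```python
import numpy as np

# Hypothetical label list; the real one comes from the dataset metadata.
class_names = ["person", "bicycle", "car"]

# Stand-in for ins.pred_classes.cpu().numpy() when the tensor dtype is float32.
clss = np.array([2.0, 0.0], dtype=np.float32)

# Indexing a Python list with a numpy.float32 fails, as in the traceback.
try:
    class_names[clss[0]]
    raised = False
except TypeError:
    raised = True

# Possible workaround: cast to integers before using the values as indices.
clss_int = clss.astype(np.int64).tolist()
labels = [class_names[c] for c in clss_int]
print(raised, labels)
```

With the cast in place, every element of `clss_int` is a plain Python int, so the lookup in `class_names` no longer raises.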

Also, on line 157:

if bboxes:

bboxes is not a bool, so this check looks wrong.

Maybe it should be:

if bboxes is not None:
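To illustrate why I think the truthiness check is a problem, here is a small sketch that assumes bboxes is a NumPy array with more than one element (I have not checked what type it has at that point in demo.py). The `has_boxes` helper is hypothetical and not part of the repo:

```python
import numpy as np

def has_boxes(bboxes):
    """Hypothetical helper: True when bboxes is present and non-empty."""
    return bboxes is not None and len(bboxes) > 0

# Made-up boxes in (x1, y1, x2, y2) form.
boxes = np.array([[10, 20, 50, 80], [5, 5, 30, 40]], dtype=np.float32)

# `if boxes:` asks NumPy for a single truth value, which is ambiguous
# for an array with more than one element and raises ValueError.
try:
    if boxes:
        pass
    ambiguous = False
except ValueError:
    ambiguous = True

print(ambiguous, has_boxes(boxes), has_boxes(None))
```

The explicit `is not None` comparison avoids asking the array for a truth value; the extra length check is optional and only skips drawing when no boxes were detected.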

Thanks