Gluon CV Toolkit

| Installation | Documentation | Tutorials |

GluonCV provides implementations of the state-of-the-art (SOTA) deep learning models in computer vision.

It is designed for engineers, researchers, and students to fast prototype products and research ideas based on these models. This toolkit offers four main features:

- Training scripts to reproduce SOTA results reported in research papers

- Supports both PyTorch and MXNet

- A large number of pre-trained models

- Carefully designed APIs that greatly reduce the implementation complexity

- Community supports

Demo

Check the HD video at Youtube or Bilibili.

Supported Applications

| Application | Illustration | Available Models |

|---|---|---|

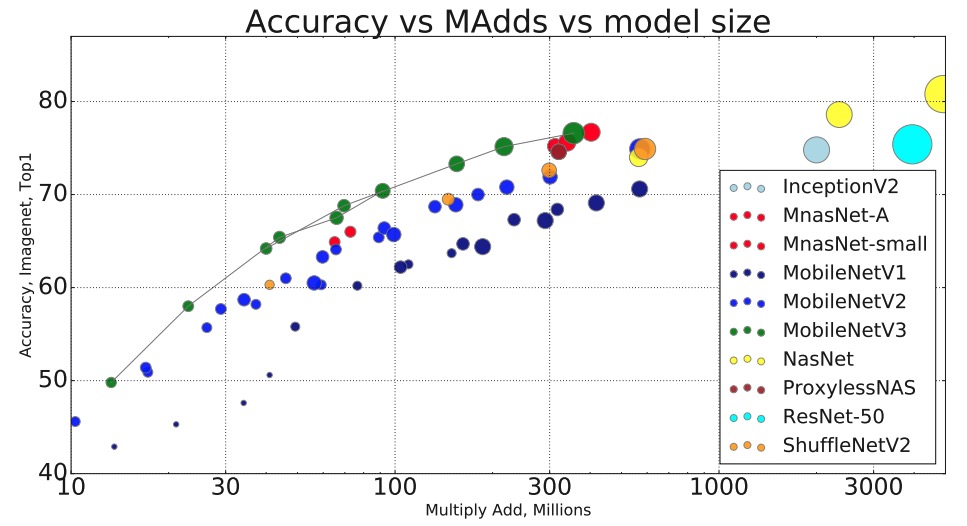

| Image Classification: recognize an object in an image. |

|

50+ models, including ResNet, MobileNet, DenseNet, VGG, ... |

| Object Detection: detect multiple objects with their bounding boxes in an image. |

|

Faster RCNN, SSD, Yolo-v3 |

| Semantic Segmentation: associate each pixel of an image with a categorical label. |

|

FCN, PSP, ICNet, DeepLab-v3, DeepLab-v3+, DANet, FastSCNN |

| Instance Segmentation: detect objects and associate each pixel inside object area with an instance label. |

|

Mask RCNN |

| Pose Estimation: detect human pose from images. |

Simple Pose | |

| Video Action Recognition: recognize human actions in a video. |

|

MXNet: TSN, C3D, I3D, I3D_slow, P3D, R3D, R2+1D, Non-local, SlowFast PyTorch: TSN, I3D, I3D_slow, R2+1D, Non-local, CSN, SlowFast, TPN |

| Depth Prediction: predict depth map from images. |

|

Monodepth2 |

| GAN: generate visually deceptive images |

|

WGAN, CycleGAN, StyleGAN |

| Person Re-ID: re-identify pedestrians across scenes |

|

Market1501 baseline |

Installation

GluonCV is built on top of MXNet and PyTorch. Depending on the individual model implementation(check model zoo for the complete list), you will need to install either one of the deep learning framework. Of course you can always install both for the best coverage.

Please also check installation guide for a comprehensive guide to help you choose the right installation command for your environment.

Installation (MXNet)

GluonCV supports Python 3.6 or later. The easiest way to install is via pip.

Stable Release

The following commands install the stable version of GluonCV and MXNet:

pip install gluoncv --upgrade

# native

pip install -U --pre mxnet -f https://dist.mxnet.io/python/mkl

# cuda 10.2

pip install -U --pre mxnet -f https://dist.mxnet.io/python/cu102mkl

The latest stable version of GluonCV is 0.8 and we recommend mxnet 1.6.0/1.7.0

Nightly Release

You may get access to latest features and bug fixes with the following commands which install the nightly build of GluonCV and MXNet:

pip install gluoncv --pre --upgrade

# native

pip install -U --pre mxnet -f https://dist.mxnet.io/python/mkl

# cuda 10.2

pip install -U --pre mxnet -f https://dist.mxnet.io/python/cu102mkl

There are multiple versions of MXNet pre-built package available. Please refer to mxnet packages if you need more details about MXNet versions.

Installation (PyTorch)

GluonCV supports Python 3.6 or later. The easiest way to install is via pip.

Stable Release

The following commands install the stable version of GluonCV and PyTorch:

pip install gluoncv --upgrade

# native

pip install torch==1.6.0+cpu torchvision==0.7.0+cpu -f https://download.pytorch.org/whl/torch_stable.html

# cuda 10.2

pip install torch==1.6.0 torchvision==0.7.0

There are multiple versions of PyTorch pre-built package available. Please refer to PyTorch if you need other versions.

The latest stable version of GluonCV is 0.8 and we recommend PyTorch 1.6.0

Nightly Release

You may get access to latest features and bug fixes with the following commands which install the nightly build of GluonCV:

pip install gluoncv --pre --upgrade

# native

pip install --pre torch torchvision torchaudio -f https://download.pytorch.org/whl/nightly/cpu/torch_nightly.html

# cuda 10.2

pip install --pre torch torchvision torchaudio -f https://download.pytorch.org/whl/nightly/cu102/torch_nightly.html

Docs

??

GluonCV documentation is available at our website.

Examples

All tutorials are available at our website!

Resources

Check out how to use GluonCV for your own research or projects.

- For background knowledge of deep learning or CV, please refer to the open source book Dive into Deep Learning. If you are new to Gluon, please check out our 60-minute crash course.

- For getting started quickly, refer to notebook runnable examples at Examples.

- For advanced examples, check out our Scripts.

- For experienced users, check out our API Notes.

Citation

If you feel our code or models helps in your research, kindly cite our papers:

@article{gluoncvnlp2020,

author = {Jian Guo and He He and Tong He and Leonard Lausen and Mu Li and Haibin Lin and Xingjian Shi and Chenguang Wang and Junyuan Xie and Sheng Zha and Aston Zhang and Hang Zhang and Zhi Zhang and Zhongyue Zhang and Shuai Zheng and Yi Zhu},

title = {GluonCV and GluonNLP: Deep Learning in Computer Vision and Natural Language Processing},

journal = {Journal of Machine Learning Research},

year = {2020},

volume = {21},

number = {23},

pages = {1-7},

url = {http://jmlr.org/papers/v21/19-429.html}

}

@article{he2018bag,

title={Bag of Tricks for Image Classification with Convolutional Neural Networks},

author={He, Tong and Zhang, Zhi and Zhang, Hang and Zhang, Zhongyue and Xie, Junyuan and Li, Mu},

journal={arXiv preprint arXiv:1812.01187},

year={2018}

}

@article{zhang2019bag,

title={Bag of Freebies for Training Object Detection Neural Networks},

author={Zhang, Zhi and He, Tong and Zhang, Hang and Zhang, Zhongyue and Xie, Junyuan and Li, Mu},

journal={arXiv preprint arXiv:1902.04103},

year={2019}

}

@article{zhang2020resnest,

title={ResNeSt: Split-Attention Networks},

author={Zhang, Hang and Wu, Chongruo and Zhang, Zhongyue and Zhu, Yi and Zhang, Zhi and Lin, Haibin and Sun, Yue and He, Tong and Muller, Jonas and Manmatha, R. and Li, Mu and Smola, Alexander},

journal={arXiv preprint arXiv:2004.08955},

year={2020}

}