Model-based 3D Hand Reconstruction via Self-Supervised Learning, CVPR2021

S2HAND: Model-based 3D Hand Reconstruction via Self-Supervised Learning S2HAND presents a self-supervised 3D hand reconstruction network that can join

Customised to detect objects automatically by a given model file(onnx)

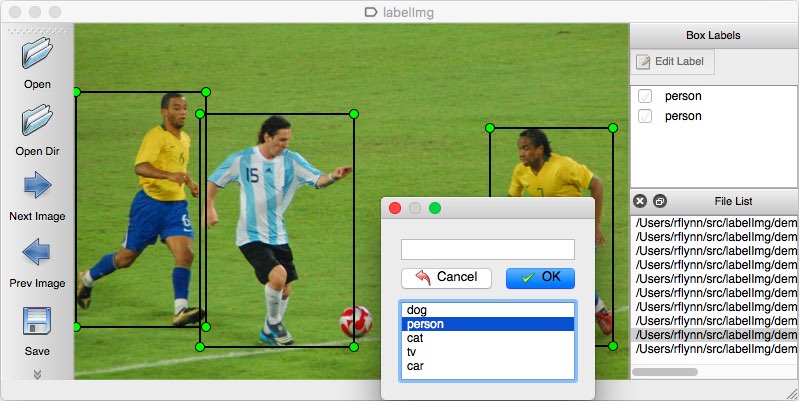

LabelImg LabelImg is a graphical image annotation tool. It is written in Python and uses Qt for its graphical interface. Annotations are saved as XML

Ultra-lightweight human body posture key point CNN model. ModelSize:2.3MB HUAWEI P40 NCNN benchmark: 6ms/img,

Ultralight-SimplePose Support NCNN mobile terminal deployment Based on MXNET(=1.5.1) GLUON(=0.7.0) framework Top-down strategy: The input image is t

This code is 3d-CNN model that can predict environmental value

Predict-environmental-value-3dCNN This code is 3d-CNN model that can predict environmental value. Firstly, I built a model that can create a lot of bu

This is a model to classify Vietnamese sign language using Motion history image (MHI) algorithm and CNN.

Vietnamese sign lagnuage recognition using MHI and CNN This is a model to classify Vietnamese sign language using Motion history image (MHI) algorithm

Attention-based CNN-LSTM and XGBoost hybrid model for stock prediction

Attention-based CNN-LSTM and XGBoost hybrid model for stock prediction Requirements The code has been tested running under Python 3.7.4, with the foll

HandTailor: Towards High-Precision Monocular 3D Hand Recovery

HandTailor This repository is the implementation code and model of the paper "HandTailor: Towards High-Precision Monocular 3D Hand Recovery" (arXiv) G

PyTorch reimplementation of minimal-hand (CVPR2020)

Minimal Hand Pytorch Unofficial PyTorch reimplementation of minimal-hand (CVPR2020). you can also find in youtube or bilibili bare hand youtube or bil