1876 Repositories

Python hierarchical-few-shot-generative-models Libraries

🤗 Transformers: State-of-the-art Machine Learning for Pytorch, TensorFlow, and JAX.

English | 简体中文 | 繁體中文 | 한국어 State-of-the-art Machine Learning for JAX, PyTorch and TensorFlow 🤗 Transformers provides thousands of pretrained models

Pre-training with Extracted Gap-sentences for Abstractive SUmmarization Sequence-to-sequence models

PEGASUS library Pre-training with Extracted Gap-sentences for Abstractive SUmmarization Sequence-to-sequence models, or PEGASUS, uses self-supervised

Fine-tuning scripts for evaluating transformer-based models on KLEJ benchmark.

The KLEJ Benchmark Baselines The KLEJ benchmark (Kompleksowa Lista Ewaluacji Językowych) is a set of nine evaluation tasks for the Polish language und

Dense Passage Retriever - is a set of tools and models for open domain Q&A task.

Dense Passage Retrieval Dense Passage Retrieval (DPR) - is a set of tools and models for state-of-the-art open-domain Q&A research. It is based on the

Few-shot Natural Language Generation for Task-Oriented Dialog

Few-shot Natural Language Generation for Task-Oriented Dialog This repository contains the dataset, source code and trained model for the following pa

This repository contains the code for running the character-level Sandwich Transformers from our ACL 2020 paper on Improving Transformer Models by Reordering their Sublayers.

Improving Transformer Models by Reordering their Sublayers This repository contains the code for running the character-level Sandwich Transformers fro

Code for the paper "Language Models are Unsupervised Multitask Learners"

Status: Archive (code is provided as-is, no updates expected) gpt-2 Code and models from the paper "Language Models are Unsupervised Multitask Learner

Library of deep learning models and datasets designed to make deep learning more accessible and accelerate ML research.

Tensor2Tensor Tensor2Tensor, or T2T for short, is a library of deep learning models and datasets designed to make deep learning more accessible and ac

An implementation of model parallel GPT-2 and GPT-3-style models using the mesh-tensorflow library.

GPT Neo 🎉 1T or bust my dudes 🎉 An implementation of model & data parallel GPT3-like models using the mesh-tensorflow library. If you're just here t

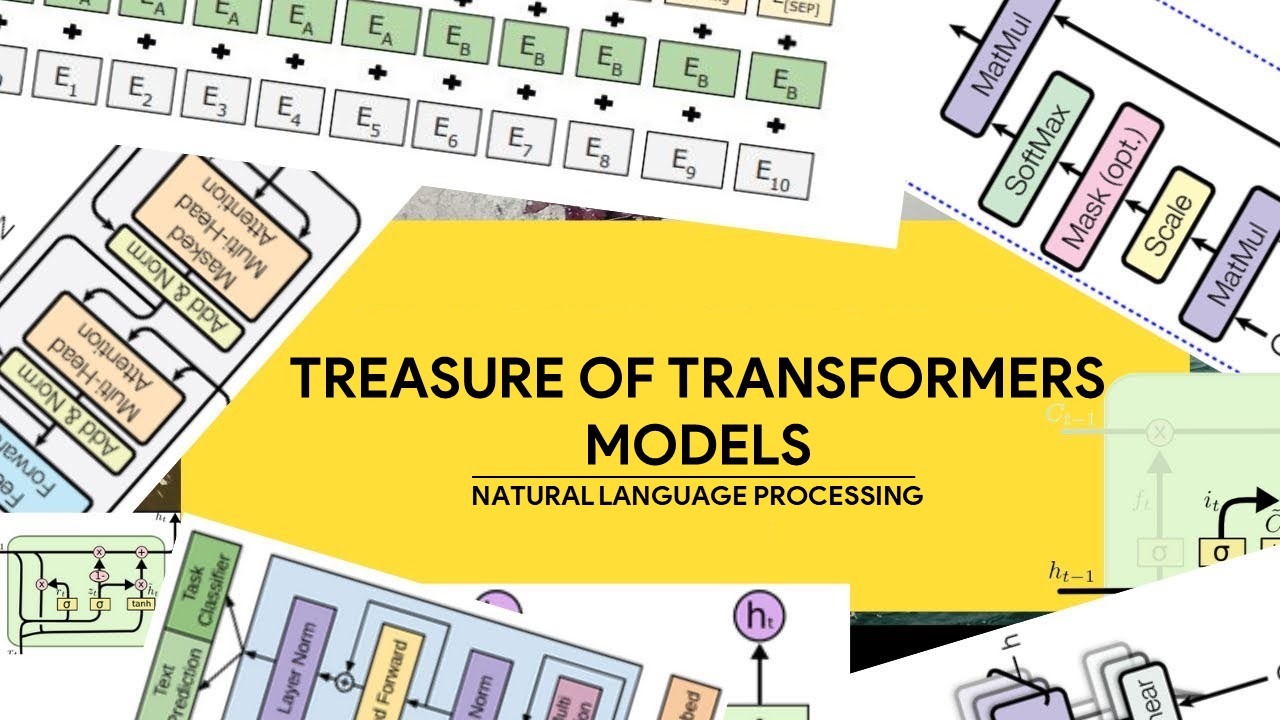

Awesome Treasure of Transformers Models Collection

💁 Awesome Treasure of Transformers Models for Natural Language processing contains papers, videos, blogs, official repo along with colab Notebooks. 🛫☑️

Using VapourSynth with super resolution models and speeding them up with TensorRT.

VSGAN-tensorrt-docker Using image super resolution models with vapoursynth and speeding them up with TensorRT. Using NVIDIA/Torch-TensorRT combined wi

[WACV21] Code for our paper: Samuel, Atzmon and Chechik, "From Generalized zero-shot learning to long-tail with class descriptors"

DRAGON: From Generalized zero-shot learning to long-tail with class descriptors Paper Project Website Video Overview DRAGON learns to correct the bias

Face and Body Tracking for VRM 3D models on the web.

Kalidoface 3D - Face and Full-Body tracking for Vtubing on the web! A sequal to Kalidoface which supports Live2D avatars, Kalidoface 3D is a web app t

The implementation of Learning Instance and Task-Aware Dynamic Kernels for Few Shot Learning

INSTA: Learning Instance and Task-Aware Dynamic Kernels for Few Shot Learning This repository provides the implementation and demo of Learning Instanc

💃 VALSE: A Task-Independent Benchmark for Vision and Language Models Centered on Linguistic Phenomena

💃 VALSE: A Task-Independent Benchmark for Vision and Language Models Centered on Linguistic Phenomena.

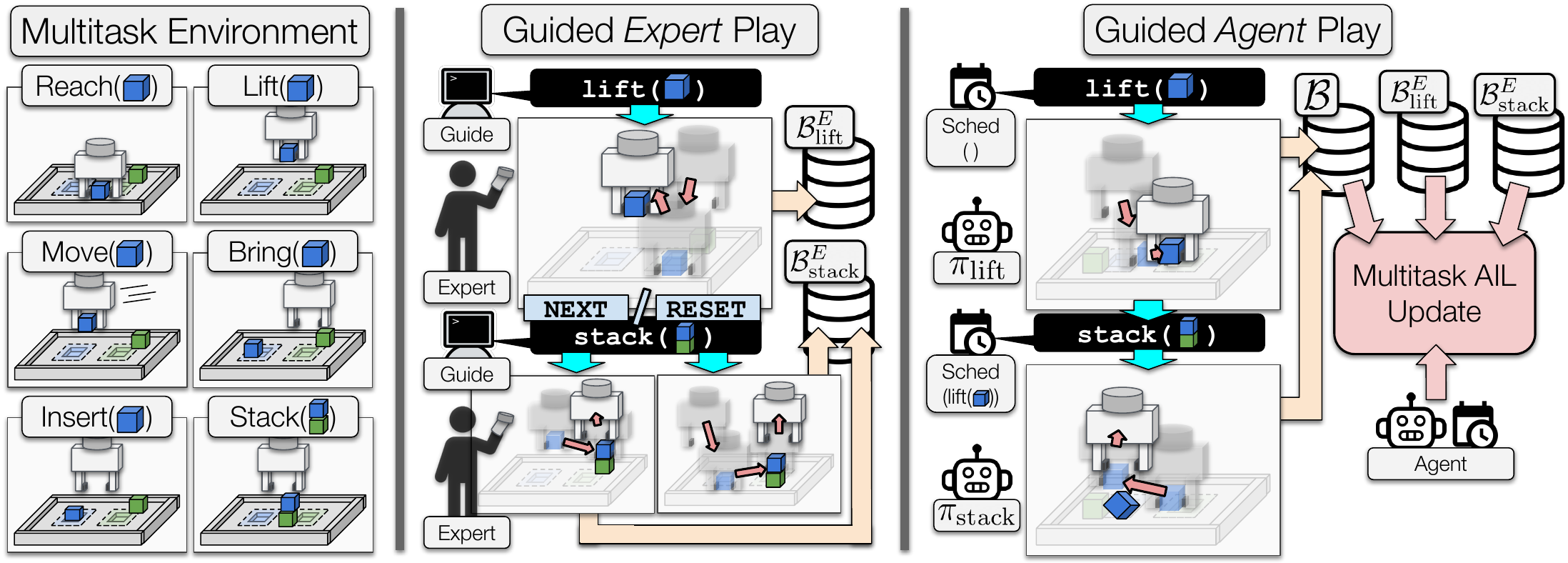

Learning from Guided Play: A Scheduled Hierarchical Approach for Improving Exploration in Adversarial Imitation Learning Source Code

Learning from Guided Play: A Scheduled Hierarchical Approach for Improving Exploration in Adversarial Imitation Learning Source Code

In generative deep geometry learning, we often get many obj files remain to be rendered

a python prompt cli script for blender batch render In deep generative geometry learning, we always get many .obj files to be rendered. Our rendered i

Formulae is a Python library that implements Wilkinson's formulas for mixed-effects models.

formulae formulae is a Python library that implements Wilkinson's formulas for mixed-effects models. The main difference with other implementations li

Convert onnx models to pytorch.

onnx2torch onnx2torch is an ONNX to PyTorch converter. Our converter: Is easy to use – Convert the ONNX model with the function call convert; Is easy

Hierarchical Clustering: O(1)-Approximation for Well-Clustered Graphs

Hierarchical Clustering: O(1)-Approximation for Well-Clustered Graphs This repository contains code to accompany the paper "Hierarchical Clustering: O

Robbing the FED: Directly Obtaining Private Data in Federated Learning with Modified Models

Robbing the FED: Directly Obtaining Private Data in Federated Learning with Modified Models This repo contains a barebones implementation for the atta

The python SDK for Eto, the AI focused data platform for teams bringing AI models to production

Eto Labs Python SDK This is the python SDK for Eto, the AI focused data platform for teams bringing AI models to production. The python SDK makes it e

Code for Temporally Abstract Partial Models

Code for Temporally Abstract Partial Models Accompanies the code for the experimental section of the paper: Temporally Abstract Partial Models, Khetar

Code for WECHSEL: Effective initialization of subword embeddings for cross-lingual transfer of monolingual language models.

WECHSEL Code for WECHSEL: Effective initialization of subword embeddings for cross-lingual transfer of monolingual language models. arXiv: https://arx

Experiments on continual learning from a stream of pretrained models.

Ex-model CL Ex-model continual learning is a setting where a stream of experts (i.e. model's parameters) is available and a CL model learns from them

Discovering Explanatory Sentences in Legal Case Decisions Using Pre-trained Language Models.

Statutory Interpretation Data Set This repository contains the data set created for the following research papers: Savelka, Jaromir, and Kevin D. Ashl

AdamW optimizer for bfloat16 models in pytorch.

Image source AdamW optimizer for bfloat16 models in pytorch. Bfloat16 is currently an optimal tradeoff between range and relative error for deep netwo

Generate high quality pictures. GAN. Generative Adversarial Networks

ESRGAN generate high quality pictures. GAN. Generative Adversarial Networks """ Super-resolution of CelebA using Generative Adversarial Networks. The

Code for WECHSEL: Effective initialization of subword embeddings for cross-lingual transfer of monolingual language models.

WECHSEL Code for WECHSEL: Effective initialization of subword embeddings for cross-lingual transfer of monolingual language models. arXiv: https://arx

MLReef is an open source ML-Ops platform that helps you collaborate, reproduce and share your Machine Learning work with thousands of other users.

The collaboration platform for Machine Learning MLReef is an open source ML-Ops platform that helps you collaborate, reproduce and share your Machine

Predicting Baseball Metric Clusters: Clustering Application in Python Using scikit-learn

Clustering Clustering Application in Python Using scikit-learn This repository contains the prediction of baseball metric clusters using MLB Statcast

These are the materials for the paper "Few-Shot Out-of-Domain Transfer Learning of Natural Language Explanations"

Few-shot-NLEs These are the materials for the paper "Few-Shot Out-of-Domain Transfer Learning of Natural Language Explanations". You can find the smal

Code of the paper "Shaping Visual Representations with Attributes for Few-Shot Learning (ASL)".

Shaping Visual Representations with Attributes for Few-Shot Learning This code implements the Shaping Visual Representations with Attributes for Few-S

Integrate GraphQL with your Pydantic models

graphene-pydantic A Pydantic integration for Graphene. Installation pip install "graphene-pydantic" Examples Here is a simple Pydantic model: import u

Using image super resolution models with vapoursynth and speeding them up with TensorRT

vs-RealEsrganAnime-tensorrt-docker Using image super resolution models with vapoursynth and speeding them up with TensorRT. Also a docker image since

Implementation based on Paper - Learning a Probabilistic Latent Space of Object Shapes via 3D Generative-Adversarial Modeling

Implementation based on Paper - Learning a Probabilistic Latent Space of Object Shapes via 3D Generative-Adversarial Modeling

Code for paper: "Spinning Language Models for Propaganda-As-A-Service"

Spinning Language Models for Propaganda-As-A-Service This is the source code for the Arxiv version of the paper. You can use this Google Colab to expl

Official PyTorch implementation of the paper "Graph-based Generative Face Anonymisation with Pose Preservation" in ICIAP 2021

Contents AnonyGAN Installation Dataset Preparation Generating Images Using Pretrained Model Train and Test New Models Evaluation Acknowledgments Citat

A FAIR dataset of TCV experimental results for validating edge/divertor turbulence models.

TCV-X21 validation for divertor turbulence simulations Quick links Intro Welcome to TCV-X21. We're glad you've found us! This repository is designed t

Pydantic models for pywttr and aiopywttr.

Pydantic models for pywttr and aiopywttr.

A minimal, standalone viewer for 3D animations stored as stop-motion sequences of individual .obj mesh files.

ObjSequenceViewer V0.5 A minimal, standalone viewer for 3D animations stored as stop-motion sequences of individual .obj mesh files. Installation: pip

Authenticate your League of legends account on riot client in a few lines of code.

lol-authenticator v1.0.0 Content index Project Setup Dependencies Project Setup Dependencies Python v3.9.6 If you don't have Python installed on your

PyTorch implementation of normalizing flow models

PyTorch implementation of normalizing flow models

Codes to pre-train T5 (Text-to-Text Transfer Transformer) models pre-trained on Japanese web texts

t5-japanese Codes to pre-train T5 (Text-to-Text Transfer Transformer) models pre-trained on Japanese web texts. The following is a list of models that

PyAbsorp is a python module that has the main focus to help estimate the Sound Absorption Coefficient.

This is a package developed to be use to find the Sound Absorption Coefficient through some implemented models, like Biot-Allard, Johnson-Champoux and

SeqAttack: a framework for adversarial attacks on token classification models

A framework for adversarial attacks against token classification models

Generative Art Synthesizer - a python program that generates python programs that generates generative art

GAS - Generative Art Synthesizer Generative Art Synthesizer - a python program that generates python programs that generates generative art. Examples

Users can free try their models on SIDD dataset based on this code

SIDD benchmark 1 Train python train.py If you want to train your network, just modify the yaml in the options folder. 2 Validation python validation.p

PyTorch implementation of a collections of scalable Video Transformer Benchmarks.

PyTorch implementation of Video Transformer Benchmarks This repository is mainly built upon Pytorch and Pytorch-Lightning. We wish to maintain a colle

Watch faces morph into each other with StyleGAN 2, StyleGAN, and DCGAN!

FaceMorpher FaceMorpher is an innovative project to get a unique face morph (or interpolation for geeks) on a website. Yes, this means you can see fac

easyNeuron is a simple way to create powerful machine learning models, analyze data and research cutting-edge AI.

easyNeuron is a simple way to create powerful machine learning models, analyze data and research cutting-edge AI.

A lightweight library designed to accelerate the process of training PyTorch models by providing a minimal

A lightweight library designed to accelerate the process of training PyTorch models by providing a minimal, but extensible training loop which is flexible enough to handle the majority of use cases, and capable of utilizing different hardware options with no code changes required.

Synthetic Data Generation for tabular, relational and time series data.

An Open Source Project from the Data to AI Lab, at MIT Website: https://sdv.dev Documentation: https://sdv.dev/SDV User Guides Developer Guides Github

Code for ShadeGAN (NeurIPS2021) A Shading-Guided Generative Implicit Model for Shape-Accurate 3D-Aware Image Synthesis

A Shading-Guided Generative Implicit Model for Shape-Accurate 3D-Aware Image Synthesis Project Page | Paper A Shading-Guided Generative Implicit Model

The codebase for our paper "Generative Occupancy Fields for 3D Surface-Aware Image Synthesis" (NeurIPS 2021)

Generative Occupancy Fields for 3D Surface-Aware Image Synthesis (NeurIPS 2021) Project Page | Paper Xudong Xu, Xingang Pan, Dahua Lin and Bo Dai GOF

Official code for "Maximum Likelihood Training of Score-Based Diffusion Models", NeurIPS 2021 (spotlight)

Maximum Likelihood Training of Score-Based Diffusion Models This repo contains the official implementation for the paper Maximum Likelihood Training o

Codes for CyGen, the novel generative modeling framework proposed in "On the Generative Utility of Cyclic Conditionals" (NeurIPS-21)

On the Generative Utility of Cyclic Conditionals This repository is the official implementation of "On the Generative Utility of Cyclic Conditionals"

Autoregressive Models in PyTorch.

Autoregressive This repository contains all the necessary PyTorch code, tailored to my presentation, to train and generate data from WaveNet-like auto

Models, datasets and tools for Facial keypoints detection

Template for Data Science Project This repo aims to give a robust starting point to any Data Science related project. It contains readymade tools setu

Explainability of the Implications of Supervised and Unsupervised Face Image Quality Estimations Through Activation Map Variation Analyses in Face Recognition Models

Explainable_FIQA_WITH_AMVA Note This is the official repository of the paper: Explainability of the Implications of Supervised and Unsupervised Face I

Learning multiple gaits of quadruped robot using hierarchical reinforcement learning

Learning multiple gaits of quadruped robot using hierarchical reinforcement learning We propose a method to learn multiple gaits of quadruped robot us

Official implementation of "A Shared Representation for Photorealistic Driving Simulators" in PyTorch.

A Shared Representation for Photorealistic Driving Simulators The official code for the paper: "A Shared Representation for Photorealistic Driving Sim

(AAAI2022) Style Mixing and Patchwise Prototypical Matching for One-Shot Unsupervised Domain Adaptive Semantic Segmentation

SM-PPM This is a Pytorch implementation of our paper "Style Mixing and Patchwise Prototypical Matching for One-Shot Unsupervised Domain Adaptive Seman

Music Source Separation; Train & Eval & Inference piplines and pretrained models we used for 2021 ISMIR MDX Challenge.

Introduction 1. Usage (For MSS) 1.1 Prepare running environment 1.2 Use pretrained model 1.3 Train new MSS models from scratch 1.3.1 How to train 1.3.

Visual Adversarial Imitation Learning using Variational Models (VMAIL)

Visual Adversarial Imitation Learning using Variational Models (VMAIL) This is the official implementation of the NeurIPS 2021 paper. Project website

A collection of models, views, middlewares, and forms to help secure a Django project.

Django-Security This package offers a number of models, views, middlewares and forms to facilitate security hardening of Django applications. Full doc

RuleBERT: Teaching Soft Rules to Pre-Trained Language Models

RuleBERT: Teaching Soft Rules to Pre-Trained Language Models (Paper) (Slides) (Video) RuleBERT is a pre-trained language model that has been fine-tune

An MLOps framework to package, deploy, monitor and manage thousands of production machine learning models

Seldon Core: Blazing Fast, Industry-Ready ML An open source platform to deploy your machine learning models on Kubernetes at massive scale. Overview S

Uni-Fold: Training your own deep protein-folding models.

Uni-Fold: Training your own deep protein-folding models. This package provides and implementation of a trainable, Transformer-based deep protein foldi

Torchrecipes provides a set of reproduci-able, re-usable, ready-to-run RECIPES for training different types of models, across multiple domains, on PyTorch Lightning.

Recipes are a standard, well supported set of blueprints for machine learning engineers to rapidly train models using the latest research techniques without significant engineering overhead.Specifically, recipes aims to provide- Consistent access to pre-trained SOTA models ready for production- Reference implementations for SOTA research reproducibility, and infrastructure to guarantee correctness, efficiency, and interoperability.

State-of-the-art NLP through transformer models in a modular design and consistent APIs.

Trapper (Transformers wRAPPER) Trapper is an NLP library that aims to make it easier to train transformer based models on downstream tasks. It wraps h

Public repository of the 3DV 2021 paper "Generative Zero-Shot Learning for Semantic Segmentation of 3D Point Clouds"

Generative Zero-Shot Learning for Semantic Segmentation of 3D Point Clouds Björn Michele1), Alexandre Boulch1), Gilles Puy1), Maxime Bucher1) and Rena

EMNLP 2021 paper Models and Datasets for Cross-Lingual Summarisation.

This repository contains data and code for our EMNLP 2021 paper Models and Datasets for Cross-Lingual Summarisation. Please contact me at [email protected]

A modular application for performing anomaly detection in networks

Deep-Learning-Models-for-Network-Annomaly-Detection The modular app consists for mainly three annomaly detection algorithms. The system supports model

A concise but complete implementation of CLIP with various experimental improvements from recent papers

x-clip (wip) A concise but complete implementation of CLIP with various experimental improvements from recent papers Install $ pip install x-clip Usag

A concise but complete implementation of CLIP with various experimental improvements from recent papers

x-clip (wip) A concise but complete implementation of CLIP with various experimental improvements from recent papers Install $ pip install x-clip Usag

Model Validation Toolkit is a collection of tools to assist with validating machine learning models prior to deploying them to production and monitoring them after deployment to production.

Model Validation Toolkit is a collection of tools to assist with validating machine learning models prior to deploying them to production and monitoring them after deployment to production.

Rapid experimentation and scaling of deep learning models on molecular and crystal graphs.

LitMatter A template for rapid experimentation and scaling deep learning models on molecular and crystal graphs. How to use Clone this repository and

Markov Attention Models

Introduction This repo contains code for reproducing the results in the paper Graphical Models with Attention for Context-Specific Independence and an

Label Hallucination for Few-Shot Classification

Label Hallucination for Few-Shot Classification This repo covers the implementation of the following paper: Label Hallucination for Few-Shot Classific

The best solution of the Weather Prediction track in the Yandex Shifts challenge

yandex-shifts-weather The repository contains information about my solution for the Weather Prediction track in the Yandex Shifts challenge https://re

SHRIMP: Sparser Random Feature Models via Iterative Magnitude Pruning

SHRIMP: Sparser Random Feature Models via Iterative Magnitude Pruning This repository is the official implementation of "SHRIMP: Sparser Random Featur

S-attack library. Official implementation of two papers "Are socially-aware trajectory prediction models really socially-aware?" and "Vehicle trajectory prediction works, but not everywhere".

S-attack library: A library for evaluating trajectory prediction models This library contains two research projects to assess the trajectory predictio

Add a help desk or knowledge base to your Django project with only a few lines of boilerplate code.

This project is no longer maintained. If you are interested in taking over the project, email [email protected]. Welcome to django-knowledge! django-

Machine translation models released by the Gourmet project

Gourmet Models Overview The Gourmet project has released several machine translation models to translate low-resource languages. This repository conta

YourTTS: Towards Zero-Shot Multi-Speaker TTS and Zero-Shot Voice Conversion for everyone

YourTTS: Towards Zero-Shot Multi-Speaker TTS and Zero-Shot Voice Conversion for everyone In our recent paper we propose the YourTTS model. YourTTS bri

Few-Shot Object Detection via Association and DIscrimination

Few-Shot Object Detection via Association and DIscrimination Code release of our NeurIPS 2021 paper: Few-Shot Object Detection via Association and DIs

Uni-Fold: Training your own deep protein-folding models

Uni-Fold: Training your own deep protein-folding models. This package provides an implementation of a trainable, Transformer-based deep protein foldin

The easiest tool for extracting radiomics features and training ML models on them.

Simple pipeline for experimenting with radiomics features Installation git clone https://github.com/piotrekwoznicki/ClassyRadiomics.git cd classrad pi

✨️🐍 SPARQL endpoint built with RDFLib to serve machine learning models, or any other logic implemented in Python

✨ SPARQL endpoint for RDFLib rdflib-endpoint is a SPARQL endpoint based on a RDFLib Graph to easily serve machine learning models, or any other logic

Data pipelines for both TensorFlow and PyTorch!

rapidnlp-datasets Data pipelines for both TensorFlow and PyTorch ! If you want to load public datasets, try: tensorflow/datasets huggingface/datasets

Generative Handwriting using LSTM Mixture Density Network with TensorFlow

Generative Handwriting Demo using TensorFlow An attempt to implement the random handwriting generation portion of Alex Graves' paper. See my blog post

The Simplest DCGAN Implementation

DCGAN in TensorLayer This is the TensorLayer implementation of Deep Convolutional Generative Adversarial Networks. Looking for Text to Image Synthesis

Generative Adversarial Text to Image Synthesis

Text To Image Synthesis This is a tensorflow implementation of synthesizing images. The images are synthesized using the GAN-CLS Algorithm from the pa

Unsupervised Image to Image Translation with Generative Adversarial Networks

Unsupervised Image to Image Translation with Generative Adversarial Networks Paper: Unsupervised Image to Image Translation with Generative Adversaria

The implementation code for "DAGAN: Deep De-Aliasing Generative Adversarial Networks for Fast Compressed Sensing MRI Reconstruction"

DAGAN This is the official implementation code for DAGAN: Deep De-Aliasing Generative Adversarial Networks for Fast Compressed Sensing MRI Reconstruct

TensorFlow implementation of the paper "Hierarchical Attention Networks for Document Classification"

Hierarchical Attention Networks for Document Classification This is an implementation of the paper Hierarchical Attention Networks for Document Classi

Tensorflow implementation of Character-Aware Neural Language Models.

Character-Aware Neural Language Models Tensorflow implementation of Character-Aware Neural Language Models. The original code of author can be found h

A TensorFlow implementation of the Mnemonic Descent Method.

MDM A Tensorflow implementation of the Mnemonic Descent Method. Mnemonic Descent Method: A recurrent process applied for end-to-end face alignment G.

Hierarchical Attentive Recurrent Tracking

Hierarchical Attentive Recurrent Tracking This is an official Tensorflow implementation of single object tracking in videos by using hierarchical atte