1213 Repositories

Python self-training-ensembles Libraries

Colossal-AI: A Unified Deep Learning System for Large-Scale Parallel Training

ColossalAI An integrated large-scale model training system with efficient parallelization techniques Installation PyPI pip install colossalai Install

AugMax: Adversarial Composition of Random Augmentations for Robust Training

[NeurIPS'21] "AugMax: Adversarial Composition of Random Augmentations for Robust Training" by Haotao Wang, Chaowei Xiao, Jean Kossaifi, Zhiding Yu, Animashree Anandkumar, and Zhangyang Wang.

Exponential Graph is Provably Efficient for Decentralized Deep Training

Exponential Graph is Provably Efficient for Decentralized Deep Training This code repository is for the paper Exponential Graph is Provably Efficient

![[NeurIPS'21]](https://github.com/VITA-Group/AugMax/raw/main/images/AugMax.PNG)

[NeurIPS'21] "AugMax: Adversarial Composition of Random Augmentations for Robust Training" by Haotao Wang, Chaowei Xiao, Jean Kossaifi, Zhiding Yu, Animashree Anandkumar, and Zhangyang Wang.

AugMax: Adversarial Composition of Random Augmentations for Robust Training Haotao Wang, Chaowei Xiao, Jean Kossaifi, Zhiding Yu, Anima Anandkumar, an

Official implementation of NeurIPS 2021 paper "Contextual Similarity Aggregation with Self-attention for Visual Re-ranking"

CSA: Contextual Similarity Aggregation with Self-attention for Visual Re-ranking PyTorch training code for CSA (Contextual Similarity Aggregation). We

Improving Transferability of Representations via Augmentation-Aware Self-Supervision

Improving Transferability of Representations via Augmentation-Aware Self-Supervision Accepted to NeurIPS 2021 TL;DR: Learning augmentation-aware infor

In this project I played with mlflow, streamlit and fastapi to create a training and prediction app on digits

Fastapi + MLflow + streamlit Setup env. I hope I covered all. pip install -r requirements.txt Start app Go in the root dir and run these Streamlit str

PyTorch implementation of "A Simple Baseline for Low-Budget Active Learning".

A Simple Baseline for Low-Budget Active Learning This repository is the implementation of A Simple Baseline for Low-Budget Active Learning. In this pa

Asterisk is a framework to generate high-quality training datasets at scale

Asterisk is a framework to generate high-quality training datasets at scale

![[NeurIPS 2021] Better Safe Than Sorry: Preventing Delusive Adversaries with Adversarial Training](https://github.com/TLMichael/Delusive-Adversary/raw/main/fig/Thumbnail.png)

[NeurIPS 2021] Better Safe Than Sorry: Preventing Delusive Adversaries with Adversarial Training

Better Safe Than Sorry: Preventing Delusive Adversaries with Adversarial Training Code for NeurIPS 2021 paper "Better Safe Than Sorry: Preventing Delu

code for generating data set ES-ImageNet with corresponding training code

es-imagenet-master code for generating data set ES-ImageNet with corresponding training code dataset generator some codes of ODG algorithm The variabl

A Context-aware Visual Attention-based training pipeline for Object Detection from a Webpage screenshot!

CoVA: Context-aware Visual Attention for Webpage Information Extraction Abstract Webpage information extraction (WIE) is an important step to create k

Code for the prototype tool in our paper "CoProtector: Protect Open-Source Code against Unauthorized Training Usage with Data Poisoning".

CoProtector Code for the prototype tool in our paper "CoProtector: Protect Open-Source Code against Unauthorized Training Usage with Data Poisoning".

Project repo for the paper SILT: Self-supervised Lighting Transfer Using Implicit Image Decomposition

SILT: Self-supervised Lighting Transfer Using Implicit Image Decomposition (BMVC 2021) Project repo for the paper SILT: Self-supervised Lighting Trans

IEEE Winter Conference on Applications of Computer Vision 2022 Accepted

SSKT(Accepted WACV2022) Concept map Dataset Image dataset CIFAR10 (torchvision) CIFAR100 (torchvision) STL10 (torchvision) Pascal VOC (torchvision) Im

Code for the prototype tool in our paper "CoProtector: Protect Open-Source Code against Unauthorized Training Usage with Data Poisoning".

CoProtector Code for the prototype tool in our paper "CoProtector: Protect Open-Source Code against Unauthorized Training Usage with Data Poisoning".

This is the official source code for SLATE. We provide the code for the model, the training code, and a dataset loader for the 3D Shapes dataset. This code is implemented in Pytorch.

SLATE This is the official source code for SLATE. We provide the code for the model, the training code and a dataset loader for the 3D Shapes dataset.

PyTorch Implementation of ByteDance's Cross-speaker Emotion Transfer Based on Speaker Condition Layer Normalization and Semi-Supervised Training in Text-To-Speech

Cross-Speaker-Emotion-Transfer - PyTorch Implementation PyTorch Implementation of ByteDance's Cross-speaker Emotion Transfer Based on Speaker Conditio

PyTorch Code for NeurIPS 2021 paper Anti-Backdoor Learning: Training Clean Models on Poisoned Data.

Anti-Backdoor Learning PyTorch Code for NeurIPS 2021 paper Anti-Backdoor Learning: Training Clean Models on Poisoned Data. Check the unlearning effect

wger Workout Manager is a free, open source web application that helps you manage your personal workouts, weight and diet plans and can also be used as a simple gym management utility.

wger (ˈvɛɡɐ) Workout Manager is a free, open source web application that helps you manage your personal workouts, weight and diet plans and can also be used as a simple gym management utility.

Zero-dependency Cryptography Python Module with a self made method

TesohhCrypt TesohhCrypt is a zero-dependency Cryptography Python Module, with a method that i made. (likely someone already made a similar one, but i

Efficient Training of Visual Transformers with Small Datasets

Official codes for "Efficient Training of Visual Transformers with Small Datasets", NerIPS 2021.

Training Very Deep Neural Networks Without Skip-Connections

DiracNets v2 update (January 2018): The code was updated for DiracNets-v2 in which we removed NCReLU by adding per-channel a and b multipliers without

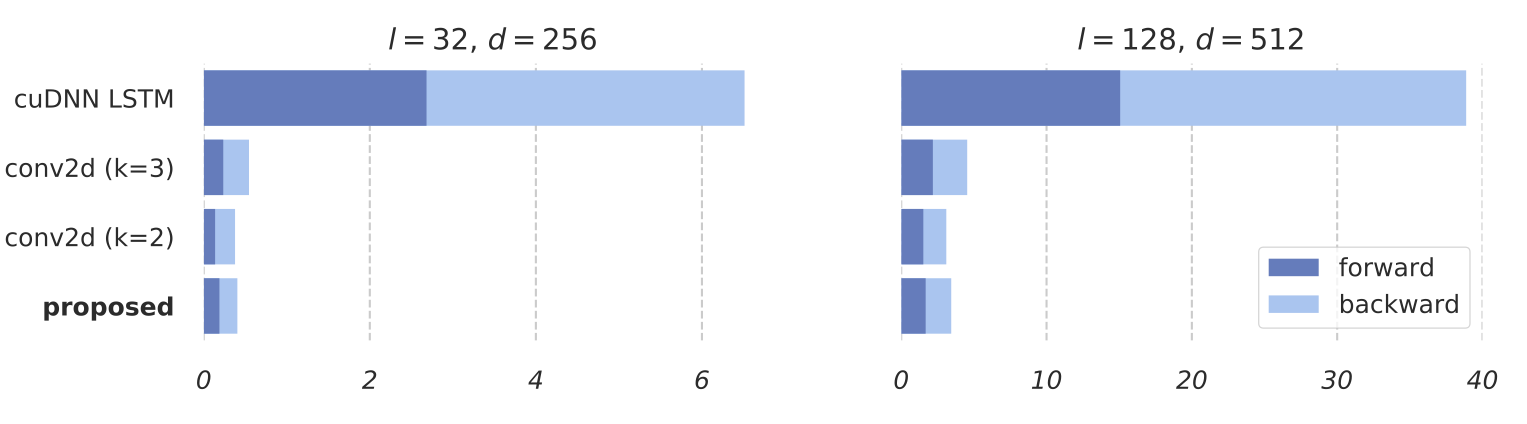

Training RNNs as Fast as CNNs

News SRU++, a new SRU variant, is released. [tech report] [blog] The experimental code and SRU++ implementation are available on the dev branch which

PyTorch Implementation of Unsupervised Depth Completion with Calibrated Backprojection Layers (ORAL, ICCV 2021)

Unsupervised Depth Completion with Calibrated Backprojection Layers PyTorch implementation of Unsupervised Depth Completion with Calibrated Backprojec

Self-supervised learning on Graph Representation Learning (node-level task)

graph_SSL Self-supervised learning on Graph Representation Learning (node-level task) How to run the code To run GRACE, sh run_GRACE.sh To run GCA, sh

Official implementation of NeurIPS 2021 paper "Contextual Similarity Aggregation with Self-attention for Visual Re-ranking"

CSA: Contextual Similarity Aggregation with Self-attention for Visual Re-ranking PyTorch training code for CSA (Contextual Similarity Aggregation). We

This repository is the official implementation of Unleashing the Power of Contrastive Self-Supervised Visual Models via Contrast-Regularized Fine-Tuning (NeurIPS21).

Core-tuning This repository is the official implementation of ``Unleashing the Power of Contrastive Self-Supervised Visual Models via Contrast-Regular

PyTorch Implementation of Unsupervised Depth Completion with Calibrated Backprojection Layers (ORAL, ICCV 2021)

PyTorch Implementation of Unsupervised Depth Completion with Calibrated Backprojection Layers (ORAL, ICCV 2021)

CVNets: A library for training computer vision networks

CVNets: A library for training computer vision networks This repository contains the source code for training computer vision models. Specifically, it

This repo is for Self-Supervised Monocular Depth Estimation with Internal Feature Fusion(arXiv), BMVC2021

DIFFNet This repo is for Self-Supervised Monocular Depth Estimation with Internal Feature Fusion(arXiv), BMVC2021 A new backbone for self-supervised d

Codebase for the self-supervised goal reaching benchmark introduced in the LEXA paper

LEXA Benchmark Codebase for the self-supervised goal reaching benchmark introduced in the LEXA paper (Discovering and Achieving Goals via World Models

![[NeurIPS 2021 Spotlight] Aligning Pretraining for Detection via Object-Level Contrastive Learning](https://github.com/hologerry/SoCo/raw/main/figures/overview.png)

[NeurIPS 2021 Spotlight] Aligning Pretraining for Detection via Object-Level Contrastive Learning

SoCo [NeurIPS 2021 Spotlight] Aligning Pretraining for Detection via Object-Level Contrastive Learning By Fangyun Wei*, Yue Gao*, Zhirong Wu, Han Hu,

Simple Discord bot for snekbox (sandboxed Python code execution), self-host or use a global instance

snakeboxed Simple Discord bot for snekbox (sandboxed Python code execution), self-host or use a global instance

This tutorial will guide you through the process of self-hosting Polygon

Hosting guide This tutorial will guide you through the process of self-hosting Polygon Before starting Make sure you have the following tools installe

BERT model training impelmentation using 1024 A100 GPUs for MLPerf Training v1.1

Pre-trained checkpoint and bert config json file Location of checkpoint and bert config json file This MLCommons members Google Drive location contain

For auto aligning, cropping, and scaling HR and LR images for training image based neural networks

ImgAlign For auto aligning, cropping, and scaling HR and LR images for training image based neural networks Usage Make sure OpenCV is installed, 'pip

NAS-HPO-Bench-II is the first benchmark dataset for joint optimization of CNN and training HPs.

NAS-HPO-Bench-II API Overview NAS-HPO-Bench-II is the first benchmark dataset for joint optimization of CNN and training HPs. It helps a fair and low-

Dynamic Bottleneck for Robust Self-Supervised Exploration

Dynamic Bottleneck Introduction This is a TensorFlow based implementation for our paper on "Dynamic Bottleneck for Robust Self-Supervised Exploration"

Improving Non-autoregressive Generation with Mixup Training

MIST Training MIST TRAIN_FILE=/your/path/to/train.json VALID_FILE=/your/path/to/valid.json OUTPUT_DIR=/your/path/to/save_checkpoints CACHE_DIR=/your/p

Official codes: Self-Supervised Learning by Estimating Twin Class Distribution

TWIST: Self-Supervised Learning by Estimating Twin Class Distributions Codes and pretrained models for TWIST: @article{wang2021self, title={Self-Sup

NeurIPS'21: Probabilistic Margins for Instance Reweighting in Adversarial Training (Pytorch implementation).

source code for NeurIPS21 paper robabilistic Margins for Instance Reweighting in Adversarial Training

Code release for "Cycle Self-Training for Domain Adaptation" (NeurIPS 2021)

CST Code release for "Cycle Self-Training for Domain Adaptation" (NeurIPS 2021) Prerequisites torch=1.7.0 torchvision qpsolvers numpy prettytable tqd

![[BMVC 2021] Official PyTorch Implementation of Self-supervised learning of Image Scale and Orientation Estimation](https://github.com/bluedream1121/self-sca-ori/raw/main/assets/architecture.png)

[BMVC 2021] Official PyTorch Implementation of Self-supervised learning of Image Scale and Orientation Estimation

Self-Supervised Learning of Image Scale and Orientation Estimation (BMVC 2021) This is the official implementation of the paper "Self-Supervised Learn

A library for preparing, training, and evaluating scalable deep learning hybrid recommender systems using PyTorch.

collie Collie is a library for preparing, training, and evaluating implicit deep learning hybrid recommender systems, named after the Border Collie do

Council Data Project is an open-source project dedicated to providing journalists, activists, researchers, and all members of each community we serve with the tools they need to stay informed and hold their Council Members accountable.

CDP - Self Council Data Project Council Data Project is an open-source project dedicated to providing journalists, activists, researchers, and all mem

QuakeLabeler is a Python package to create and manage your seismic training data, processes, and visualization in a single place — so you can focus on building the next big thing.

QuakeLabeler Quake Labeler was born from the need for seismologists and developers who are not AI specialists to easily, quickly, and independently bu

🔥🔥High-Performance Face Recognition Library on PaddlePaddle & PyTorch🔥🔥

face.evoLVe: High-Performance Face Recognition Library based on PaddlePaddle & PyTorch Evolve to be more comprehensive, effective and efficient for fa

The official code for PRIMER: Pyramid-based Masked Sentence Pre-training for Multi-document Summarization

PRIMER The official code for PRIMER: Pyramid-based Masked Sentence Pre-training for Multi-document Summarization. PRIMER is a pre-trained model for mu

Code for MarioNette: Self-Supervised Sprite Learning, in NeurIPS 2021

MarioNette | Webpage | Paper | Video MarioNette: Self-Supervised Sprite Learning Dmitriy Smirnov, Michaël Gharbi, Matthew Fisher, Vitor Guizilini, Ale

Implements the training, testing and editing tools for "Pluralistic Image Completion"

Pluralistic Image Completion ArXiv | Project Page | Online Demo | Video(demo) This repository implements the training, testing and editing tools for "

A Deep Learning based project for creating line art portraits.

ArtLine The main aim of the project is to create amazing line art portraits. Sounds Intresting,let's get to the pictures!! Model-(Smooth) Model-(Quali

The Self-Supervised Learner can be used to train a classifier with fewer labeled examples needed using self-supervised learning.

Published by SpaceML • About SpaceML • Quick Colab Example Self-Supervised Learner The Self-Supervised Learner can be used to train a classifier with

PyTorch image models, scripts, pretrained weights -- ResNet, ResNeXT, EfficientNet, EfficientNetV2, NFNet, Vision Transformer, MixNet, MobileNet-V3/V2, RegNet, DPN, CSPNet, and more

PyTorch Image Models Sponsors What's New Introduction Models Features Results Getting Started (Documentation) Train, Validation, Inference Scripts Awe

Synthetic structured data generators

Join us on What is Synthetic Data? Synthetic data is artificially generated data that is not collected from real world events. It replicates the stati

Source code for the paper "PLOME: Pre-training with Misspelled Knowledge for Chinese Spelling Correction" in ACL2021

PLOME:Pre-training with Misspelled Knowledge for Chinese Spelling Correction (ACL2021) This repository provides the code and data of the work in ACL20

PaSST: Efficient Training of Audio Transformers with Patchout

PaSST: Efficient Training of Audio Transformers with Patchout This is the implementation for Efficient Training of Audio Transformers with Patchout Pa

Scalable training for dense retrieval models.

Scalable implementation of dense retrieval. Training on cluster By default it trains locally: PYTHONPATH=.:$PYTHONPATH python dpr_scale/main.py traine

NVIDIA Merlin is an open source library providing end-to-end GPU-accelerated recommender systems, from feature engineering and preprocessing to training deep learning models and running inference in production.

NVIDIA Merlin NVIDIA Merlin is an open source library designed to accelerate recommender systems on NVIDIA’s GPUs. It enables data scientists, machine

The official code for PRIMER: Pyramid-based Masked Sentence Pre-training for Multi-document Summarization

PRIMER The official code for PRIMER: Pyramid-based Masked Sentence Pre-training for Multi-document Summarization. PRIMER is a pre-trained model for mu

DPC: Unsupervised Deep Point Correspondence via Cross and Self Construction (3DV 2021)

DPC: Unsupervised Deep Point Correspondence via Cross and Self Construction (3DV 2021) This repo is the implementation of DPC. Tested environment Pyth

MG-GCN: Scalable Multi-GPU GCN Training Framework

MG-GCN MG-GCN: multi-GPU GCN training framework. For more information, please read our paper. After cloning our repository, run git submodule update -

Demystifying How Self-Supervised Features Improve Training from Noisy Labels

Demystifying How Self-Supervised Features Improve Training from Noisy Labels This code is a PyTorch implementation of the paper "[Demystifying How Sel

Self-Supervised Monocular DepthEstimation with Internal Feature Fusion(arXiv), BMVC2021

DIFFNet This repo is for Self-Supervised Monocular DepthEstimation with Internal Feature Fusion(arXiv), BMVC2021 A new backbone for self-supervised de

Multi-Task Pre-Training for Plug-and-Play Task-Oriented Dialogue System

Multi-Task Pre-Training for Plug-and-Play Task-Oriented Dialogue System Authors: Yixuan Su, Lei Shu, Elman Mansimov, Arshit Gupta, Deng Cai, Yi-An Lai

Codes for CIKM'21 paper 'Self-Supervised Graph Co-Training for Session-based Recommendation'.

COTREC Codes for CIKM'21 paper 'Self-Supervised Graph Co-Training for Session-based Recommendation'. Requirements: Python 3.7, Pytorch 1.6.0 Best Hype

Codes for AAAI'21 paper 'Self-Supervised Hypergraph Convolutional Networks for Session-based Recommendation'

DHCN Codes for AAAI 2021 paper 'Self-Supervised Hypergraph Convolutional Networks for Session-based Recommendation'. Please note that the default link

Self-supervised Graph Learning for Recommendation

SGL This is our Tensorflow implementation for our SIGIR 2021 paper: Jiancan Wu, Xiang Wang, Fuli Feng, Xiangnan He, Liang Chen, Jianxun Lian,and Xing

Implementation of ICCV 2021 oral paper -- A Novel Self-Supervised Learning for Gaussian Mixture Model

SS-GMM Implementation of ICCV 2021 oral paper -- Self-Supervised Image Prior Learning with GMM from a Single Noisy Image with supplementary material R

Designing a Practical Degradation Model for Deep Blind Image Super-Resolution (ICCV, 2021) (PyTorch) - We released the training code!

Designing a Practical Degradation Model for Deep Blind Image Super-Resolution Kai Zhang, Jingyun Liang, Luc Van Gool, Radu Timofte Computer Vision Lab

Code associated with the paper "Towards Understanding the Data Dependency of Mixup-style Training".

Mixup-Data-Dependency Code associated with the paper "Towards Understanding the Data Dependency of Mixup-style Training". Running Alternating Line Exp

Anomaly detection in multi-agent trajectories: Code for training, evaluation and the OpenAI highway simulation.

Anomaly Detection in Multi-Agent Trajectories for Automated Driving This is the official project page including the paper, code, simulation, baseline

CPC-big and k-means clustering for zero-resource speech processing

The CPC-big model and k-means checkpoints used in Analyzing Speaker Information in Self-Supervised Models to Improve Zero-Resource Speech Processing.

collect training and calibration data for gaze tracking

Collect Training and Calibration Data for Gaze Tracking This tool allows collecting gaze data necessary for personal calibration or training of eye-tr

Code for SALT: Stackelberg Adversarial Regularization, EMNLP 2021.

SALT: Stackelberg Adversarial Regularization Code for Adversarial Regularization as Stackelberg Game: An Unrolled Optimization Approach, EMNLP 2021. R

Multi-Task Pre-Training for Plug-and-Play Task-Oriented Dialogue System

Multi-Task Pre-Training for Plug-and-Play Task-Oriented Dialogue System Authors: Yixuan Su, Lei Shu, Elman Mansimov, Arshit Gupta, Deng Cai, Yi-An Lai

Traffic4D: Single View Reconstruction of Repetitious Activity Using Longitudinal Self-Supervision

Traffic4D: Single View Reconstruction of Repetitious Activity Using Longitudinal Self-Supervision Project | PDF | Poster Fangyu Li, N. Dinesh Reddy, X

Official Repository for our ICCV2021 paper: Continual Learning on Noisy Data Streams via Self-Purified Replay

Continual Learning on Noisy Data Streams via Self-Purified Replay This repository contains the official PyTorch implementation for our ICCV2021 paper.

Propose a principled and practically effective framework for unsupervised accuracy estimation and error detection tasks with theoretical analysis and state-of-the-art performance.

Detecting Errors and Estimating Accuracy on Unlabeled Data with Self-training Ensembles This project is for the paper: Detecting Errors and Estimating

An Agnostic Computer Vision Framework - Pluggable to any Training Library: Fastai, Pytorch-Lightning with more to come

IceVision is the first agnostic computer vision framework to offer a curated collection with hundreds of high-quality pre-trained models from torchvision, MMLabs, and soon Pytorch Image Models. It orchestrates the end-to-end deep learning workflow allowing to train networks with easy-to-use robust high-performance libraries such as Pytorch-Lightning and Fastai

Official PyTorch Implementation of Learning Self-Similarity in Space and Time as Generalized Motion for Video Action Recognition, ICCV 2021

Official PyTorch Implementation of Learning Self-Similarity in Space and Time as Generalized Motion for Video Action Recognition, ICCV 2021

Composing methods for ML training efficiency

MosaicML Composer contains a library of methods, and ways to compose them together for more efficient ML training.

This is a library for training and applying sparse fine-tunings with torch and transformers.

This is a library for training and applying sparse fine-tunings with torch and transformers. Please refer to our paper Composable Sparse Fine-Tuning f

ECCV18 Workshops - Enhanced SRGAN. Champion PIRM Challenge on Perceptual Super-Resolution. The training codes are in BasicSR.

ESRGAN (Enhanced SRGAN) [ 🚀 BasicSR] [Real-ESRGAN] ✨ New Updates. We have extended ESRGAN to Real-ESRGAN, which is a more practical algorithm for rea

Cluster-GCN: An Efficient Algorithm for Training Deep and Large Graph Convolutional Networks

Cluster-GCN: An Efficient Algorithm for Training Deep and Large Graph Convolutional Networks This repository contains a TensorFlow implementation of "

![[ICML 2020]](https://github.com/Shen-Lab/SS-GCNs/raw/master/ssgcn.png)

[ICML 2020] "When Does Self-Supervision Help Graph Convolutional Networks?" by Yuning You, Tianlong Chen, Zhangyang Wang, Yang Shen

When Does Self-Supervision Help Graph Convolutional Networks? PyTorch implementation for When Does Self-Supervision Help Graph Convolutional Networks?

Graph Attention Networks

GAT Graph Attention Networks (Veličković et al., ICLR 2018): https://arxiv.org/abs/1710.10903 GAT layer t-SNE + Attention coefficients on Cora Overvie

PyTorch implementation of paper “Unbiased Scene Graph Generation from Biased Training”

A new codebase for popular Scene Graph Generation methods (2020). Visualization & Scene Graph Extraction on custom images/datasets are provided. It's also a PyTorch implementation of paper “Unbiased Scene Graph Generation from Biased Training CVPR 2020”

Code for reproducing experiments in "Improved Training of Wasserstein GANs"

Improved Training of Wasserstein GANs Code for reproducing experiments in "Improved Training of Wasserstein GANs". Prerequisites Python, NumPy, Tensor

Supervision Exists Everywhere: A Data Efficient Contrastive Language-Image Pre-training Paradigm

DeCLIP Supervision Exists Everywhere: A Data Efficient Contrastive Language-Image Pre-training Paradigm. Our paper is available in arxiv Updates ** Ou

Solutions of Reinforcement Learning 2nd Edition

Solutions of Reinforcement Learning, An Introduction

PyTorch implementation of "LayoutTransformer: Layout Generation and Completion with Self-attention"

PyTorch implementation of "LayoutTransformer: Layout Generation and Completion with Self-attention" to appear in ICCV 2021

A JAX implementation of Broaden Your Views for Self-Supervised Video Learning, or BraVe for short.

BraVe This is a JAX implementation of Broaden Your Views for Self-Supervised Video Learning, or BraVe for short. The model provided in this package wa

Self-Supervised Speech Pre-training and Representation Learning Toolkit.

What's New Sep 2021: We host a challenge in AAAI workshop: The 2nd Self-supervised Learning for Audio and Speech Processing! See SUPERB official site

UniSpeech - Large Scale Self-Supervised Learning for Speech

UniSpeech The family of UniSpeech: UniSpeech (ICML 2021): Unified Pre-training for Self-Supervised Learning and Supervised Learning for ASR UniSpeech-

Explaining in Style: Training a GAN to explain a classifier in StyleSpace

Explaining in Style: Official TensorFlow Colab Explaining in Style: Training a GAN to explain a classifier in StyleSpace Oran Lang, Yossi Gandelsman,

Regularizing Nighttime Weirdness: Efficient Self-supervised Monocular Depth Estimation in the Dark (ICCV 2021)

Regularizing Nighttime Weirdness: Efficient Self-supervised Monocular Depth Estimation in the Dark (ICCV 2021) Kun Wang, Zhenyu Zhang, Zhiqiang Yan, X

This is the implementation of "SELF SUPERVISED REPRESENTATION LEARNING WITH DEEP CLUSTERING FOR ACOUSTIC UNIT DISCOVERY FROM RAW SPEECH" submitted to ICASSP 2022

CPC_DeepCluster This is the implementation of "SELF SUPERVISED REPRESENTATION LEARNING WITH DEEP CLUSTERING FOR ACOUSTIC UNIT DISCOVERY FROM RAW SPEEC

The self-supervised goal reaching benchmark introduced in Discovering and Achieving Goals via World Models

Lexa-Benchmark Codebase for the self-supervised goal reaching benchmark introduced in 'Discovering and Achieving Goals via World Models'. Setup Create

Official implementation of "Motif-based Graph Self-Supervised Learning forMolecular Property Prediction"

Motif-based Graph Self-Supervised Learning for Molecular Property Prediction Official Pytorch implementation of NeurIPS'21 paper "Motif-based Graph Se