2719 Repositories

Python self-training-text-generation Libraries

Pytorch codes for "Self-supervised Multi-view Stereo via Effective Co-Segmentation and Data-Augmentation"

Self-Supervised-MVS This repository is the official PyTorch implementation of our AAAI 2021 paper: "Self-supervised Multi-view Stereo via Effective Co

(CVPR2021) Kaleido-BERT: Vision-Language Pre-training on Fashion Domain

Kaleido-BERT: Vision-Language Pre-training on Fashion Domain Mingchen Zhuge*, Dehong Gao*, Deng-Ping Fan#, Linbo Jin, Ben Chen, Haoming Zhou, Minghui

TensorFlow Decision Forests (TF-DF) is a collection of state-of-the-art algorithms for the training, serving and interpretation of Decision Forest models.

TensorFlow Decision Forests (TF-DF) is a collection of state-of-the-art algorithms for the training, serving and interpretation of Decision Forest models. The library is a collection of Keras models and supports classification, regression and ranking. TF-DF is a TensorFlow wrapper around the Yggdrasil Decision Forests C++ libraries. Models trained with TF-DF are compatible with Yggdrasil Decision Forests' models, and vice versa.

VMD Audio/Text control with natural language

This repository is a proof of principle for performing Molecular Dynamics analysis, in this case with the program VMD, via natural language commands.

Code for our paper "Mask-Align: Self-Supervised Neural Word Alignment" in ACL 2021

Mask-Align: Self-Supervised Neural Word Alignment This is the implementation of our work Mask-Align: Self-Supervised Neural Word Alignment. @inproceed

TikTok X-Gorgon & X-Khronos Generation Algorithm

TikTok X-Gorgon & X-Khronos Generation Algorithm X-Gorgon and X-Khronos headers are required to call tiktok api. I will provide you API as rental or s

ActNN: Reducing Training Memory Footprint via 2-Bit Activation Compressed Training

ActNN : Activation Compressed Training This is the official project repository for ActNN: Reducing Training Memory Footprint via 2-Bit Activation Comp

Orchest is a browser based IDE for Data Science.

Orchest is a browser based IDE for Data Science. It integrates your favorite Data Science tools out of the box, so you don’t have to. The application is easy to use and can run on your laptop as well as on a large scale cloud cluster.

When Does Pretraining Help? Assessing Self-Supervised Learning for Law and the CaseHOLD Dataset of 53,000+ Legal Holdings

When Does Pretraining Help? Assessing Self-Supervised Learning for Law and the CaseHOLD Dataset of 53,000+ Legal Holdings This is the repository for t

PyTorch code of my ICDAR 2021 paper Vision Transformer for Fast and Efficient Scene Text Recognition (ViTSTR)

Vision Transformer for Fast and Efficient Scene Text Recognition (ICDAR 2021) ViTSTR is a simple single-stage model that uses a pre-trained Vision Tra

![[CVPR'21 Oral] Seeing Out of tHe bOx: End-to-End Pre-training for Vision-Language Representation Learning](https://github.com/researchmm/soho/raw/master/resources/soho.png)

[CVPR'21 Oral] Seeing Out of tHe bOx: End-to-End Pre-training for Vision-Language Representation Learning

Seeing Out of tHe bOx: End-to-End Pre-training for Vision-Language Representation Learning [CVPR'21, Oral] By Zhicheng Huang*, Zhaoyang Zeng*, Yupan H

Tensorflow implementation for Self-supervised Graph Learning for Recommendation

If the compilation is successful, the evaluator of cpp implementation will be called automatically. Otherwise, the evaluator of python implementation will be called.

Unsupervised Pre-training for Person Re-identification (LUPerson)

LUPerson Unsupervised Pre-training for Person Re-identification (LUPerson). The repository is for our CVPR2021 paper Unsupervised Pre-training for Per

Procedural 3D data generation pipeline for architecture

Synthetic Dataset Generator Authors: Stanislava Fedorova Alberto Tono Meher Shashwat Nigam Jiayao Zhang Amirhossein Ahmadnia Cecilia bolognesi Dominik

EfficientNetV2 implementation using PyTorch

EfficientNetV2-S implementation using PyTorch Train Steps Configure imagenet path by changing data_dir in train.py python main.py --benchmark for mode

Pytorch Implementation of Google's Parallel Tacotron 2: A Non-Autoregressive Neural TTS Model with Differentiable Duration Modeling

Parallel Tacotron2 Pytorch Implementation of Google's Parallel Tacotron 2: A Non-Autoregressive Neural TTS Model with Differentiable Duration Modeling

Contains code for the paper "Vision Transformers are Robust Learners".

Vision Transformers are Robust Learners This repository contains the code for the paper Vision Transformers are Robust Learners by Sayak Paul* and Pin

PyTorch implementation of Self-supervised Contrastive Regularization for DG (SelfReg)

SelfReg PyTorch official implementation of Self-supervised Contrastive Regularization for Domain Generalization (SelfReg, https://arxiv.org/abs/2104.0

Self-training with Weak Supervision (NAACL 2021)

This repo holds the code for our weak supervision framework, ASTRA, described in our NAACL 2021 paper: "Self-Training with Weak Supervision"

Text to Image Generation with Semantic-Spatial Aware GAN

text2image This repository includes the implementation for Text to Image Generation with Semantic-Spatial Aware GAN This repo is not completely. Netwo

A collection of Korean Text Datasets ready to use using Tensorflow-Datasets.

tfds-korean A collection of Korean Text Datasets ready to use using Tensorflow-Datasets. TensorFlow-Datasets를 이용한 한국어/한글 데이터셋 모음입니다. Dataset Catalog |

[WWW 2021] Source code for "Graph Contrastive Learning with Adaptive Augmentation"

GCA Source code for Graph Contrastive Learning with Adaptive Augmentation (WWW 2021) For example, to run GCA-Degree under WikiCS, execute: python trai

Standalone pre-training recipe with JAX+Flax

Sabertooth Sabertooth is standalone pre-training recipe based on JAX+Flax, with data pipelines implemented in Rust. It runs on CPU, GPU, and/or TPU, b

Code for paper "Document-Level Argument Extraction by Conditional Generation". NAACL 21'

Argument Extraction by Generation Code for paper "Document-Level Argument Extraction by Conditional Generation". NAACL 21' Dependencies pytorch=1.6 tr

A PyTorch Lightning solution to training OpenAI's CLIP from scratch.

train-CLIP 📎 A PyTorch Lightning solution to training CLIP from scratch. Goal ⚽ Our aim is to create an easy to use Lightning implementation of OpenA

An Artificial Intelligence trying to drive a car by itself on a user created map

An Artificial Intelligence trying to drive a car by itself on a user created map

(SIGIR2020) “Asymmetric Tri-training for Debiasing Missing-Not-At-Random Explicit Feedback’’

Asymmetric Tri-training for Debiasing Missing-Not-At-Random Explicit Feedback About This repository accompanies the real-world experiments conducted i

tsai is an open-source deep learning package built on top of Pytorch & fastai focused on state-of-the-art techniques for time series classification, regression and forecasting.

Time series Timeseries Deep Learning Pytorch fastai - State-of-the-art Deep Learning with Time Series and Sequences in Pytorch / fastai

Code from the paper "High-Performance Brain-to-Text Communication via Handwriting"

High-Performance Brain-to-Text Communication via Handwriting Overview This repo is associated with this manuscript, preprint and dataset. The code can

Resources for the "Evaluating the Factual Consistency of Abstractive Text Summarization" paper

Evaluating the Factual Consistency of Abstractive Text Summarization Authors: Wojciech Kryściński, Bryan McCann, Caiming Xiong, and Richard Socher Int

Unofficial implementation of Google's FNet: Mixing Tokens with Fourier Transforms

FNet: Mixing Tokens with Fourier Transforms Pytorch implementation of Fnet : Mixing Tokens with Fourier Transforms. Citation: @misc{leethorp2021fnet,

![[NAACL & ACL 2021] SapBERT: Self-alignment pretraining for BERT.](https://github.com/cambridgeltl/sapbert/raw/main/sapbert_front_graphs_v6.png?raw=true)

[NAACL & ACL 2021] SapBERT: Self-alignment pretraining for BERT.

SapBERT: Self-alignment pretraining for BERT This repo holds code for the SapBERT model presented in our NAACL 2021 paper: Self-Alignment Pretraining

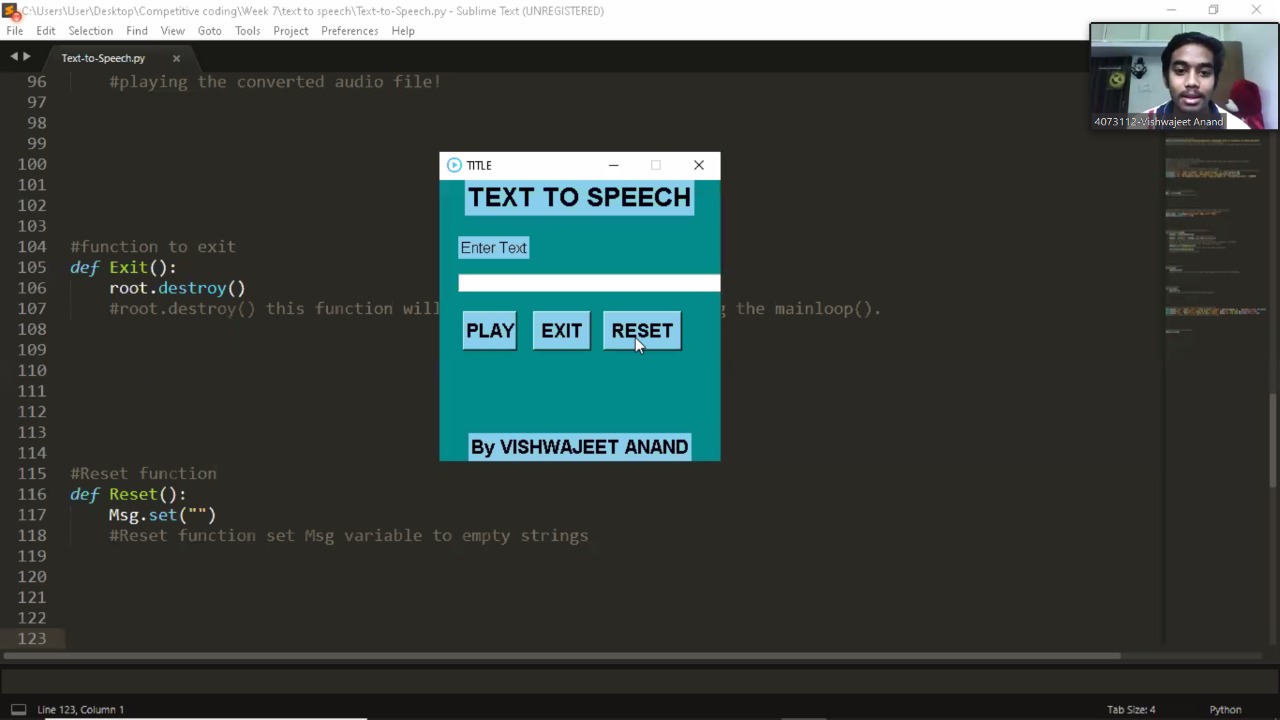

Text to speech is a process to convert any text into voice. Text to speech project takes words on digital devices and convert them into audio. Here I have used Google-text-to-speech library popularly known as gTTS library to convert text file to .mp3 file. Hope you like my project!

Text to speech (using Python) Text to speech is a process to convert any text into voice. Text to speech project takes words on digital devices and co

A framework for cleaning Chinese dialog data

A framework for cleaning Chinese dialog data

Code from the paper "High-Performance Brain-to-Text Communication via Handwriting"

Code from the paper "High-Performance Brain-to-Text Communication via Handwriting"

A general-purpose multi-agent training framework.

MALib A general-purpose multi-agent training framework. Installation step1: build environment conda create -n malib python==3.7 -y conda activate mali

Multi-Track Music Generation with the Transfomer and the Johann Sebastian Bach Chorales dataset

MMM: Exploring Conditional Multi-Track Music Generation with the Transformer and the Johann Sebastian Bach Chorales Dataset. Implementation of the pap

Self-Supervised Contrastive Learning of Music Spectrograms

Self-Supervised Music Analysis Self-Supervised Contrastive Learning of Music Spectrograms Dataset Songs on the Billboard Year End Hot 100 were collect

A fast Text-to-Speech (TTS) model. Work well for English, Mandarin/Chinese, Japanese, Korean, Russian and Tibetan (so far). 快速语音合成模型,适用于英语、普通话/中文、日语、韩语、俄语和藏语(当前已测试)。

简体中文 | English 并行语音合成 [TOC] 新进展 2021/04/20 合并 wavegan 分支到 main 主分支,删除 wavegan 分支! 2021/04/13 创建 encoder 分支用于开发语音风格迁移模块! 2021/04/13 softdtw 分支 支持使用 Sof

This repository describes our reproducible framework for assessing self-supervised representation learning from speech

LeBenchmark: a reproducible framework for assessing SSL from speech Self-Supervised Learning (SSL) using huge unlabeled data has been successfully exp

MILES is a multilingual text simplifier inspired by LSBert - A BERT-based lexical simplification approach proposed in 2018. Unlike LSBert, MILES uses the bert-base-multilingual-uncased model, as well as simple language-agnostic approaches to complex word identification (CWI) and candidate ranking.

MILES Multilingual Lexical Simplifier Explore the docs » Read LSBert Paper · Report Bug · Request Feature About The Project MILES is a multilingual te

Self-supervised Augmentation Consistency for Adapting Semantic Segmentation (CVPR 2021)

Self-supervised Augmentation Consistency for Adapting Semantic Segmentation This repository contains the official implementation of our paper: Self-su

This is the implementation of the paper "Self-supervised Outdoor Scene Relighting"

Self-supervised Outdoor Scene Relighting This is the implementation of the paper "Self-supervised Outdoor Scene Relighting". The model is implemented

Code for "ShineOn: Illuminating Design Choices for Practical Video-based Virtual Clothing Try-on", accepted at WACV 2021 Generation of Human Behavior Workshop.

ShineOn: Illuminating Design Choices for Practical Video-based Virtual Clothing Try-on [ Paper ] [ Project Page ] This repository contains the code fo

Self-Supervised Multi-Frame Monocular Scene Flow (CVPR 2021)

Self-Supervised Multi-Frame Monocular Scene Flow 3D visualization of estimated depth and scene flow (overlayed with input image) from temporally conse

Code for our paper Domain Adaptive Semantic Segmentation with Self-Supervised Depth Estimation

CorDA Code for our paper Domain Adaptive Semantic Segmentation with Self-Supervised Depth Estimation Prerequisite Please create and activate the follo

DeepFaceEditing: Deep Face Generation and Editing with Disentangled Geometry and Appearance Control

DeepFaceEditing: Deep Face Generation and Editing with Disentangled Geometry and Appearance Control One version of our system is implemented using the

The pytorch implementation of DG-Font: Deformable Generative Networks for Unsupervised Font Generation

DG-Font: Deformable Generative Networks for Unsupervised Font Generation The source code for 'DG-Font: Deformable Generative Networks for Unsupervised

Word2Wave: a framework for generating short audio samples from a text prompt using WaveGAN and COALA.

Word2Wave is a simple method for text-controlled GAN audio generation. You can either follow the setup instructions below and use the source code and CLI provided in this repo or you can have a play around in the Colab notebook provided. Note that, in both cases, you will need to train a WaveGAN model first

Euporie is a text-based user interface for running and editing Jupyter notebooks

Euporie is a text-based user interface for running and editing Jupyter notebooks

Parrot is a paraphrase based utterance augmentation framework purpose built to accelerate training NLU models

Parrot is a paraphrase based utterance augmentation framework purpose built to accelerate training NLU models. A paraphrase framework is more than just a paraphrasing model.

DeepSpeed is a deep learning optimization library that makes distributed training easy, efficient, and effective.

DeepSpeed is a deep learning optimization library that makes distributed training easy, efficient, and effective.

Integrating the Best of TF into PyTorch, for Machine Learning, Natural Language Processing, and Text Generation. This is part of the CASL project: http://casl-project.ai/

Texar-PyTorch is a toolkit aiming to support a broad set of machine learning, especially natural language processing and text generation tasks. Texar

Pytorch implementation of Tacotron

Tacotron-pytorch A pytorch implementation of Tacotron: A Fully End-to-End Text-To-Speech Synthesis Model. Requirements Install python 3 Install pytorc

An implementation of WaveNet with fast generation

pytorch-wavenet This is an implementation of the WaveNet architecture, as described in the original paper. Features Automatic creation of a dataset (t

A PyTorch Implementation of End-to-End Models for Speech-to-Text

speech Speech is an open-source package to build end-to-end models for automatic speech recognition. Sequence-to-sequence models with attention, Conne

A simple command line tool for text to image generation, using OpenAI's CLIP and a BigGAN.

Ryan Murdock has done it again, combining OpenAI's CLIP and the generator from a BigGAN! This repository wraps up his work so it is easily accessible to anyone who owns a GPU.

DeepMetaHandles: Learning Deformation Meta-Handles of 3D Meshes with Biharmonic Coordinates

DeepMetaHandles (CVPR2021 Oral) [paper] [animations] DeepMetaHandles is a shape deformation technique. It learns a set of meta-handles for each given

A Distributional Approach To Controlled Text Generation

A Distributional Approach To Controlled Text Generation This is the repository code for the ICLR 2021 paper "A Distributional Approach to Controlled T

AdaSpeech 2: Adaptive Text to Speech with Untranscribed Data

AdaSpeech 2: Adaptive Text to Speech with Untranscribed Data [WIP] Unofficial Pytorch implementation of AdaSpeech 2. Requirements : All code written i

Text Generation by Learning from Demonstrations

Text Generation by Learning from Demonstrations The README was last updated on March 7, 2021. The repo is based on fairseq (v0.9.?). Paper arXiv Prere

[EMNLP 2020] Keep CALM and Explore: Language Models for Action Generation in Text-based Games

Contextual Action Language Model (CALM) and the ClubFloyd Dataset Code and data for paper Keep CALM and Explore: Language Models for Action Generation

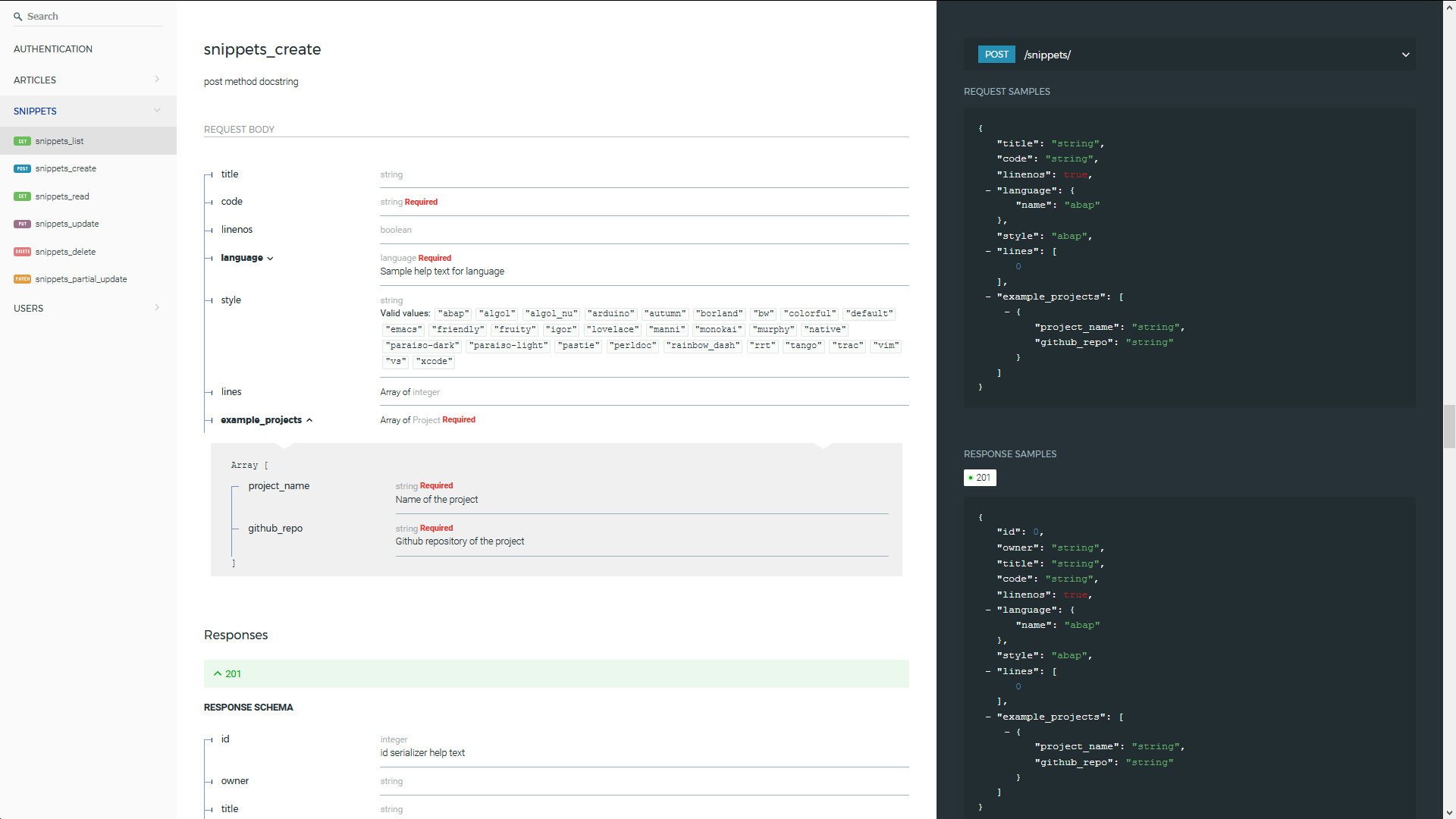

Automated generation of real Swagger/OpenAPI 2.0 schemas from Django REST Framework code.

drf-yasg - Yet another Swagger generator Generate real Swagger/OpenAPI 2.0 specifications from a Django Rest Framework API. Compatible with Django Res

Physics-Aware Training (PAT) is a method to train real physical systems with backpropagation.

Physics-Aware Training (PAT) is a method to train real physical systems with backpropagation. It was introduced in Wright, Logan G. & Onodera, Tatsuhiro et al. (2021)1 to train Physical Neural Networks (PNNs) - neural networks whose building blocks are physical systems.

Joji convert a text to corresponding emoji if emoji is available

Joji Joji convert a text to corresponding emoji if emoji is available How it Works ? 1. There is a json file with emoji names as keys and correspondin

LightSeq: A High-Performance Inference Library for Sequence Processing and Generation

LightSeq is a high performance inference library for sequence processing and generation implemented in CUDA. It enables highly efficient computation of modern NLP models such as BERT, GPT2, Transformer, etc. It is therefore best useful for Machine Translation, Text Generation, Dialog, Language Modelling, and other related tasks using these models.

Pytorch implementation of CoCon: A Self-Supervised Approach for Controlled Text Generation

COCON_ICLR2021 This is our Pytorch implementation of COCON. CoCon: A Self-Supervised Approach for Controlled Text Generation (ICLR 2021) Alvin Chan, Y

PyTorch code for Vision Transformers training with the Self-Supervised learning method DINO

Self-Supervised Vision Transformers with DINO PyTorch implementation and pretrained models for DINO. For details, see Emerging Properties in Self-Supe

Code to use Augmented Shapiro Wilks Stopping, as well as code for the paper "Statistically Signifigant Stopping of Neural Network Training"

This codebase is being actively maintained, please create and issue if you have issues using it Basics All data files are included under losses and ea

[CVPR 2021] MiVOS - Mask Propagation module. Reproduced STM (and better) with training code :star2:. Semi-supervised video object segmentation evaluation.

MiVOS (CVPR 2021) - Mask Propagation Ho Kei Cheng, Yu-Wing Tai, Chi-Keung Tang [arXiv] [Paper PDF] [Project Page] [Papers with Code] This repo impleme

TorchFlare is a simple, beginner-friendly, and easy-to-use PyTorch Framework train your models effortlessly.

TorchFlare TorchFlare is a simple, beginner-friendly and an easy-to-use PyTorch Framework train your models without much effort. It provides an almost

Code for "Graph-Evolving Meta-Learning for Low-Resource Medical Dialogue Generation". [AAAI 2021]

Graph Evolving Meta-Learning for Low-resource Medical Dialogue Generation Code to be further cleaned... This repo contains the code of the following p

![The code of “Similarity Reasoning and Filtration for Image-Text Matching” [AAAI2021]](https://github.com/Paranioar/SGRAF/raw/main/fig/model.png)

The code of “Similarity Reasoning and Filtration for Image-Text Matching” [AAAI2021]

SGRAF PyTorch implementation for AAAI2021 paper of “Similarity Reasoning and Filtration for Image-Text Matching”. It is built on top of the SCAN and C

TorchShard is a lightweight engine for slicing a PyTorch tensor into parallel shards

TorchShard is a lightweight engine for slicing a PyTorch tensor into parallel shards. It can reduce GPU memory and scale up the training when the model has massive linear layers (e.g., ViT, BERT and GPT) or huge classes (millions). It has the same API design as PyTorch.

NLPretext packages in a unique library all the text preprocessing functions you need to ease your NLP project.

NLPretext packages in a unique library all the text preprocessing functions you need to ease your NLP project.

A library for preparing, training, and evaluating scalable deep learning hybrid recommender systems using PyTorch.

collie_recs Collie is a library for preparing, training, and evaluating implicit deep learning hybrid recommender systems, named after the Border Coll

An official implementation for "CLIP4Clip: An Empirical Study of CLIP for End to End Video Clip Retrieval"

The implementation of paper CLIP4Clip: An Empirical Study of CLIP for End to End Video Clip Retrieval. CLIP4Clip is a video-text retrieval model based

CorNet Correlation Networks for Extreme Multi-label Text Classification

CorNet Correlation Networks for Extreme Multi-label Text Classification Prerequisites python==3.6.3 pytorch==1.2.0 torchgpipe==0.0.5 click==7.0 ruamel

Code for Dual Contrastive Learning for Unsupervised Image-to-Image Translation, NTIRE, CVPRW 2021.

arXiv Dual Contrastive Learning Adversarial Generative Networks (DCLGAN) We provide our PyTorch implementation of DCLGAN, which is a simple yet powerf

BYOL for Audio: Self-Supervised Learning for General-Purpose Audio Representation

BYOL for Audio: Self-Supervised Learning for General-Purpose Audio Representation This is a demo implementation of BYOL for Audio (BYOL-A), a self-sup

A Practical Debugging Tool for Training Deep Neural Networks

Cockpit is a visual and statistical debugger specifically designed for deep learning!

Code for the paper "Improving Vision-and-Language Navigation with Image-Text Pairs from the Web" (ECCV 2020)

Improving Vision-and-Language Navigation with Image-Text Pairs from the Web Arjun Majumdar, Ayush Shrivastava, Stefan Lee, Peter Anderson, Devi Parikh

Reimplementation of the paper `Human Attention Maps for Text Classification: Do Humans and Neural Networks Focus on the Same Words? (ACL2020)`

Human Attention for Text Classification Re-implementation of the paper Human Attention Maps for Text Classification: Do Humans and Neural Networks Foc

Code for "Learning the Best Pooling Strategy for Visual Semantic Embedding", CVPR 2021

Learning the Best Pooling Strategy for Visual Semantic Embedding Official PyTorch implementation of the paper Learning the Best Pooling Strategy for V

Just Go with the Flow: Self-Supervised Scene Flow Estimation

Just Go with the Flow: Self-Supervised Scene Flow Estimation Code release for the paper Just Go with the Flow: Self-Supervised Scene Flow Estimation,

PlenOctrees: NeRF-SH Training & Conversion

PlenOctrees Official Repo: NeRF-SH training and conversion This repository contains code to train NeRF-SH and to extract the PlenOctree, constituting

Pytorch implementation of "Training a 85.4% Top-1 Accuracy Vision Transformer with 56M Parameters on ImageNet"

Token Labeling: Training an 85.4% Top-1 Accuracy Vision Transformer with 56M Parameters on ImageNet (arxiv) This is a Pytorch implementation of our te

FID calculation with proper image resizing and quantization steps

clean-fid: Fixing Inconsistencies in FID Project | Paper The FID calculation involves many steps that can produce inconsistencies in the final metric.

SiT: Self-supervised vIsion Transformer

This repository contains the official PyTorch self-supervised pretraining, finetuning, and evaluation codes for SiT (Self-supervised image Transformer).

(CVPR 2021) ST3D: Self-training for Unsupervised Domain Adaptation on 3D Object Detection

ST3D Code release for the paper ST3D: Self-training for Unsupervised Domain Adaptation on 3D Object Detection, CVPR 2021 Authors: Jihan Yang*, Shaoshu

Coreference resolution for English, German and Polish, optimised for limited training data and easily extensible for further languages

Coreferee Author: Richard Paul Hudson, msg systems ag 1. Introduction 1.1 The basic idea 1.2 Getting started 1.2.1 English 1.2.2 German 1.2.3 Polish 1

Training code of Spatial Time Memory Network. Semi-supervised video object segmentation.

Training-code-of-STM This repository fully reproduces Space-Time Memory Networks Performance on Davis17 val set&Weights backbone training stage traini

TalkNet 2: Non-Autoregressive Depth-Wise Separable Convolutional Model for Speech Synthesis with Explicit Pitch and Duration Prediction.

TalkNet 2 [WIP] TalkNet 2: Non-Autoregressive Depth-Wise Separable Convolutional Model for Speech Synthesis with Explicit Pitch and Duration Predictio

Focus on Algorithm Design, Not on Data Wrangling

The dataTap Python library is the primary interface for using dataTap's rich data management tools. Create datasets, stream annotations, and analyze model performance all with one library.

Self-Supervised Learning for Domain Adaptation on Point-Clouds

Self-Supervised Learning for Domain Adaptation on Point-Clouds Introduction Self-supervised learning (SSL) allows to learn useful representations from

VideoGPT: Video Generation using VQ-VAE and Transformers

VideoGPT: Video Generation using VQ-VAE and Transformers [Paper][Website][Colab][Gradio Demo] We present VideoGPT: a conceptually simple architecture

DVG-Face: Dual Variational Generation for Heterogeneous Face Recognition, TPAMI 2021

DVG-Face: Dual Variational Generation for HFR This repo is a PyTorch implementation of DVG-Face: Dual Variational Generation for Heterogeneous Face Re

Scene Text Retrieval via Joint Text Detection and Similarity Learning

This is the code of "Scene Text Retrieval via Joint Text Detection and Similarity Learning". For more details, please refer to our CVPR2021 paper.

Code for Pose-Controllable Talking Face Generation by Implicitly Modularized Audio-Visual Representation (CVPR 2021)

Pose-Controllable Talking Face Generation by Implicitly Modularized Audio-Visual Representation (CVPR 2021) Hang Zhou, Yasheng Sun, Wayne Wu, Chen Cha

skweak: A software toolkit for weak supervision applied to NLP tasks

Labelled data remains a scarce resource in many practical NLP scenarios. This is especially the case when working with resource-poor languages (or text domains), or when using task-specific labels without pre-existing datasets. The only available option is often to collect and annotate texts by hand, which is expensive and time-consuming.