849 Repositories

Python Training-Progress-Email-Notifier Libraries

Pytorch implementation of the paper "COAD: Contrastive Pre-training with Adversarial Fine-tuning for Zero-shot Expert Linking."

Expert-Linking Pytorch implementation of the paper "COAD: Contrastive Pre-training with Adversarial Fine-tuning for Zero-shot Expert Linking." This is

Certificate generating and sending system written in Python.

Certificate Generator & Sender How to use git clone https://github.com/saadhaxxan/Certificate-Generator-Sender.git cd Certificate-Generator-Sender Add

Reproduce results and replicate training fo T0 (Multitask Prompted Training Enables Zero-Shot Task Generalization)

T-Zero This repository serves primarily as codebase and instructions for training, evaluation and inference of T0. T0 is the model developed in Multit

Align and Prompt: Video-and-Language Pre-training with Entity Prompts

ALPRO Align and Prompt: Video-and-Language Pre-training with Entity Prompts [Paper] Dongxu Li, Junnan Li, Hongdong Li, Juan Carlos Niebles, Steven C.H

Command line interface for sending email using SMTP (ships with Gmail configuration).

mailsend Description Lightweight command line interface for sending email using SMTP. Default configuration is set for Gmail (smtp.gmail.com at port 5

Pre-Training Graph Neural Networks for Cold-Start Users and Items Representation.

Pretrain-Recsys This is our Tensorflow implementation for our WSDM 2021 paper: Bowen Hao, Jing Zhang, Hongzhi Yin, Cuiping Li, Hong Chen. Pre-Training

Pre-training of Graph Augmented Transformers for Medication Recommendation

G-Bert Pre-training of Graph Augmented Transformers for Medication Recommendation Intro G-Bert combined the power of Graph Neural Networks and BERT (B

Code for KDD'20 "Generative Pre-Training of Graph Neural Networks"

GPT-GNN: Generative Pre-Training of Graph Neural Networks GPT-GNN is a pre-training framework to initialize GNNs by generative pre-training. It can be

code for "Self-supervised edge features for improved Graph Neural Network training", arxivlink

Self-supervised edge features for improved Graph Neural Network training Data availability: Here is a link to the raw data for the organoids dataset.

![[ICML 2020] DrRepair: Learning to Repair Programs from Error Messages](https://github.com/michiyasunaga/DrRepair/raw/master/DrRepair-overview.png)

[ICML 2020] DrRepair: Learning to Repair Programs from Error Messages

DrRepair: Learning to Repair Programs from Error Messages This repo provides the source code & data of our paper: Graph-based, Self-Supervised Program

Autoregressive Predictive Coding: An unsupervised autoregressive model for speech representation learning

Autoregressive Predictive Coding This repository contains the official implementation (in PyTorch) of Autoregressive Predictive Coding (APC) proposed

Code and training data for our ECCV 2016 paper on Unsupervised Learning

Shuffle and Learn (Shuffle Tuple) Created by Ishan Misra Based on the ECCV 2016 Paper - "Shuffle and Learn: Unsupervised Learning using Temporal Order

![[NeurIPS'20] Self-supervised Co-Training for Video Representation Learning. Tengda Han, Weidi Xie, Andrew Zisserman.](https://github.com/TengdaHan/CoCLR/raw/main/asset/teaser.png)

[NeurIPS'20] Self-supervised Co-Training for Video Representation Learning. Tengda Han, Weidi Xie, Andrew Zisserman.

CoCLR: Self-supervised Co-Training for Video Representation Learning This repository contains the implementation of: InfoNCE (MoCo on videos) UberNCE

PyTorch code for training MM-DistillNet for multimodal knowledge distillation

There is More than Meets the Eye: Self-Supervised Multi-Object Detection and Tracking with Sound by Distilling Multimodal Knowledge MM-DistillNet is a

AdaFocus V2: End-to-End Training of Spatial Dynamic Networks for Video Recognition

AdaFocusV2 This repo contains the official code and pre-trained models for AdaFo

Deep Learning Training Scripts With Python

Deep Learning Training Scripts DNN Frameworks Caffe PyTorch Tensorflow CNN Models VGG ResNet DenseNet Inception Language Modeling GatedCNN-LM Attentio

Aplicação que envia regularmente um email ao utilizador com todos os filmes disponíveis no cartaz dos cinemas Nos.

Cartaz-Cinemas-Nos Aplicação que envia regularmente uma notificação ao utilizador com todos os filmes disponíveis no cartaz dos cinemas Nos. Só funcio

Email pass separator

email-pass-separator hii check out our new tool in kali linux use 'filename ' Dont forget to put inverted comma email:password separator Image Command

Hub is a dataset format with a simple API for creating, storing, and collaborating on AI datasets of any size.

Hub is a dataset format with a simple API for creating, storing, and collaborating on AI datasets of any size. The hub data layout enables rapid transformations and streaming of data while training models at scale. Hub is used by Google, Waymo, Red Cross, Oxford University, and Omdena.

Django Email Sender

Email-Sender Django Email Sender Installation 1.clone Repository & Install Packages git clone https://github.com/telman03/Email-Sender.git pip install

Code to use Augmented Shapiro Wilks Stopping, as well as code for the paper "Statistically Signifigant Stopping of Neural Network Training"

This codebase is being actively maintained, please create and issue if you have issues using it Basics All data files are included under losses and ea

Python Email Sender (PES) is a program made with Python using smtplib, socket and tkinter.

Python Email Sender (PES) is a program made with Python using smtplib, socket and tkinter. This program was made for sender email to be a gmail account because that's what I used when testing it out, to make it work for a gmail account turn off secure app and then put in your email and password into the right variables and the program should be good to run!

💡Python package for HTTP/1.1 style headers. Parse headers to objects. Most advanced available structure for http headers.

HTTP Headers, the Complete Toolkit 🧰 Object-oriented headers. Kind of structured headers. ❓ Why No matter if you are currently dealing with code usin

PyTorch implementation of Rethinking Positional Encoding in Language Pre-training

TUPE PyTorch implementation of Rethinking Positional Encoding in Language Pre-training. Quickstart Clone this repository. git clone https://github.com

Code release for SLIP Self-supervision meets Language-Image Pre-training

SLIP: Self-supervision meets Language-Image Pre-training What you can find in this repo: Pre-trained models (with ViT-Small, Base, Large) and code to

Django email backend with AWS SES and Celery

Django Celery SES Django Email Backend with Amazon Web Service SES and Celery, developed and used by StreetVoice. This packages provide a EmailBackend

Apprise - Push Notifications that work with just about every platform!

ap·prise / verb To inform or tell (someone). To make one aware of something. Apprise allows you to send a notification to almost all of the most popul

Turn any live video stream or locally stored video into a dataset of interesting samples for ML training, or any other type of analysis.

Sieve Video Data Collection Example Find samples that are interesting within hours of raw video, for free and completely automatically using Sieve API

Implementation of our paper "DMT: Dynamic Mutual Training for Semi-Supervised Learning"

DMT: Dynamic Mutual Training for Semi-Supervised Learning This repository contains the code for our paper DMT: Dynamic Mutual Training for Semi-Superv

A PyTorch Extension: Tools for easy mixed precision and distributed training in Pytorch

Introduction This is a Python package available on PyPI for NVIDIA-maintained utilities to streamline mixed precision and distributed training in Pyto

ONNX Runtime: cross-platform, high performance ML inferencing and training accelerator

ONNX Runtime is a cross-platform inference and training machine-learning accelerator. ONNX Runtime inference can enable faster customer experiences an

Python SDK for building, training, and deploying ML models

Overview of Kubeflow Fairing Kubeflow Fairing is a Python package that streamlines the process of building, training, and deploying machine learning (

An automation program that checks whether email addresses are real, whether they exist and whether they are a validated mail

Email Validator It is an automation program that checks whether email addresses are real, whether they exist and whether they are a validated mail. Re

This is the mail server that handles responses from the Contact Form

mailserver About This is the mail server that handles responses from the Contact Form Contributors ✨ Thanks goes to these wonderful people (emoji key)

An offline Phishing Email Analyzer.

An offline Phishing Email Analyzer.

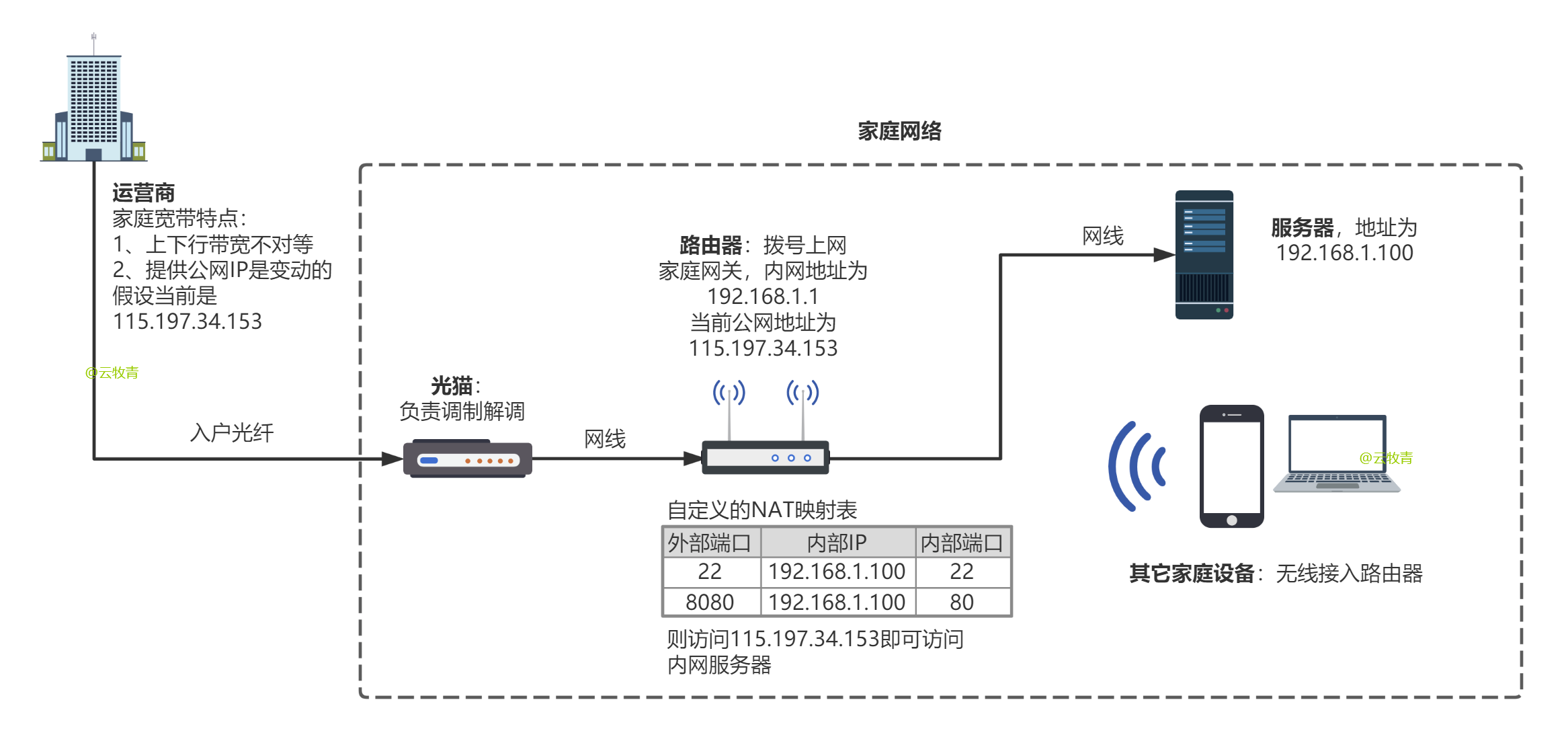

A great python/java dynamic DNS service for NameSilo, with log, email reminder...

English NameSilo DDNS is a DDNS service for NameSilo domain names for home broadband , it can automatically detect IP changes in home broadband

This is a web crawler that works on employ email data by gmane.org and visualizes it in different ways.

crawler_to_visual_gmane Analyzing an EMAIL Archive from gmane and vizualizing the data using the D3 JavaScript library. This is a set of tools that al

A simple free API that allows you to extract abuse emails from IPs.

Abuse-Email-API A simple free API that allows you to extract abuse emails from IPs. also isnt worth 500 dollars :) Requirements A Debian based OS The

An OSINT program that allows you to uncover a censored domain in an email adress

An OSINT program that allows you to uncover a censored domain in an email adress. Useful when you extract email from Instagram or Twitter password recovery function.

Search email inbox with python and filter with search criteria via IMAP4 and fastapi or console

Search email inbox with python and filter with search criteria via IMAP4 and fastapi or console

Training deep models using anime, illustration images.

animeface deep models for anime images. Datasets anime-face-dataset Anime faces collected from Getchu.com. Based on Mckinsey666's dataset. 63.6K image

learned_optimization: Training and evaluating learned optimizers in JAX

learned_optimization: Training and evaluating learned optimizers in JAX learned_optimization is a research codebase for training learned optimizers. I

A full pipeline AutoML tool for tabular data

HyperGBM Doc | 中文 We Are Hiring! Dear folks,we are offering challenging opportunities located in Beijing for both professionals and students who are k

Training PyTorch models with differential privacy

Opacus is a library that enables training PyTorch models with differential privacy. It supports training with minimal code changes required on the cli

DaProfiler allows you to get emails, social medias, adresses, works and more on your target using web scraping and google dorking techniques

DaProfiler allows you to get emails, social medias, adresses, works and more on your target using web scraping and google dorking techniques, based in France Only. The particularity of this program is its ability to find your target's e-mail adresses.

Codes for building and training the neural network model described in Domain-informed neural networks for interaction localization within astroparticle experiments.

Domain-informed Neural Networks Codes for building and training the neural network model described in Domain-informed neural networks for interaction

On the Complementarity between Pre-Training and Back-Translation for Neural Machine Translation (Findings of EMNLP 2021))

PTvsBT On the Complementarity between Pre-Training and Back-Translation for Neural Machine Translation (Findings of EMNLP 2021) Citation Please cite a

Ensembling Off-the-shelf Models for GAN Training

Vision-aided GAN video (3m) | website | paper Can the collective knowledge from a large bank of pretrained vision models be leveraged to improve GAN t

Fine-grained Post-training for Improving Retrieval-based Dialogue Systems - NAACL 2021

Fine-grained Post-training for Multi-turn Response Selection Implements the model described in the following paper Fine-grained Post-training for Impr

Training and Evaluation Code for Neural Volumes

Neural Volumes This repository contains training and evaluation code for the paper Neural Volumes. The method learns a 3D volumetric representation of

Ensembling Off-the-shelf Models for GAN Training

Data-Efficient GANs with DiffAugment project | paper | datasets | video | slides Generated using only 100 images of Obama, grumpy cats, pandas, the Br

Performance-Efficiency Trade-offs in Unsupervised Pre-training for Speech Recognition

SEW (Squeezed and Efficient Wav2vec) The repo contains the code of the paper "Performance-Efficiency Trade-offs in Unsupervised Pre-training for Speec

Transformer training code for sequential tasks

Sequential Transformer This is a code for training Transformers on sequential tasks such as language modeling. Unlike the original Transformer archite

Pre-training with Extracted Gap-sentences for Abstractive SUmmarization Sequence-to-sequence models

PEGASUS library Pre-training with Extracted Gap-sentences for Abstractive SUmmarization Sequence-to-sequence models, or PEGASUS, uses self-supervised

Large-scale Self-supervised Pre-training Across Tasks, Languages, and Modalities

Hiring We are hiring at all levels (including FTE researchers and interns)! If you are interested in working with us on NLP and large-scale pre-traine

Unsupervised Language Model Pre-training for French

FlauBERT and FLUE FlauBERT is a French BERT trained on a very large and heterogeneous French corpus. Models of different sizes are trained using the n

Code for EMNLP20 paper: "ProphetNet: Predicting Future N-gram for Sequence-to-Sequence Pre-training"

ProphetNet-X This repo provides the code for reproducing the experiments in ProphetNet. In the paper, we propose a new pre-trained language model call

ELECTRA: Pre-training Text Encoders as Discriminators Rather Than Generators

ELECTRA Introduction ELECTRA is a method for self-supervised language representation learning. It can be used to pre-train transformer networks using

A Pytorch reproduction of Range Loss, which is proposed in paper 《Range Loss for Deep Face Recognition with Long-Tailed Training Data》

RangeLoss Pytorch This is a Pytorch reproduction of Range Loss, which is proposed in paper 《Range Loss for Deep Face Recognition with Long-Tailed Trai

Efficient and Scalable Physics-Informed Deep Learning and Scientific Machine Learning on top of Tensorflow for multi-worker distributed computing

Notice: Support for Python 3.6 will be dropped in v.0.2.1, please plan accordingly! Efficient and Scalable Physics-Informed Deep Learning Collocation-

Using CNN to mimic the driver based on training data from Torcs

Behavioural-Cloning-in-autonomous-driving Using CNN to mimic the driver based on training data from Torcs. Approach First, the data was collected from

Automatic class scheduler for Texas A&M written with Python+Django and React+Typescript

Rev Registration Description Rev Registration is an automatic class scheduler for Texas A&M, aimed at easing the process of course registration by gen

Scrapes Every Email Address of Every Society in Every University

society-email-scrape Site Live at https://kcsoc.github.io/society-email-scrape/ How to automatically generate new data Go to unis.yml Add your uni Cre

A simple program for training and testing vit

Vit This is a simple program for training and testing vit. Key requirements: torch, torchvision and timm. Dataset I put 5 categories of the cub classi

PantheonRL is a package for training and testing multi-agent reinforcement learning environments.

PantheonRL is a package for training and testing multi-agent reinforcement learning environments. PantheonRL supports cross-play, fine-tuning, ad-hoc coordination, and more.

A high-performance distributed deep learning system targeting large-scale and automated distributed training.

HETU Documentation | Examples Hetu is a high-performance distributed deep learning system targeting trillions of parameters DL model training, develop

ScreenshotLogger works just like a keylogger but instead of capturing keystroke,it captures the screen, stores it or sends via email

ScreenshotLogger works just like a keylogger but instead of capturing keystroke,it captures the screen, stores it or sends via email. Scrapeasy is super easy to use and handles everything for you. Just specify what website you want to scrape and what media you want to receive back, and the rest is done for you.

A voice assistant which can be used to interact with your computer and controls your pc operations

Introduction 👨💻 It is a voice assistant which can be used to interact with your computer and also you have been seeing it in Iron man movies, but t

A python interface for training Reinforcement Learning bots to battle on pokemon showdown

The pokemon showdown Python environment A Python interface to create battling pokemon agents. poke-env offers an easy-to-use interface for creating ru

PyGAD, a Python 3 library for building the genetic algorithm and training machine learning algorithms (Keras & PyTorch).

PyGAD: Genetic Algorithm in Python PyGAD is an open-source easy-to-use Python 3 library for building the genetic algorithm and optimizing machine lear

A python wrapper for the mangadex API V5. Work in progress

mangadex A python wrapper for the mangadex API V5. It uses the requests library and all the aditional arguments can be viewed in the Official Mangadex

Periodically check the manuscript state in the scholar one system and send email when finding a new state.

ScholarOne-manuscript-checker Periodically check the manuscript state in the scholar one system and send email when finding a new state. Parameters ne

A simple file transfer tools, similar to rz / sz but compatible with tmux (control mode), which works with iTerm2 and has a nice progress bar

trzsz A simple file transfer tools, similar to rz/sz but compatible with tmux (control mode), which works with iTerm2 and has a nice progress bar. Why

Atari2600 Training / Evaluation with RLlib

Training Atari2600 by Reinforcement Learning Train Atari2600 and check how it works! How to Setup You can setup packages on your local env. $ make set

A lightweight library designed to accelerate the process of training PyTorch models by providing a minimal

A lightweight library designed to accelerate the process of training PyTorch models by providing a minimal, but extensible training loop which is flexible enough to handle the majority of use cases, and capable of utilizing different hardware options with no code changes required.

A system for quickly generating training data with weak supervision

Programmatically Build and Manage Training Data Announcement The Snorkel team is now focusing their efforts on Snorkel Flow, an end-to-end AI applicat

The Pytorch implementation for "Video-Text Pre-training with Learned Regions"

Region_Learner The Pytorch implementation for "Video-Text Pre-training with Learned Regions" (arxiv) We are still cleaning up the code further and pre

Official code for "Maximum Likelihood Training of Score-Based Diffusion Models", NeurIPS 2021 (spotlight)

Maximum Likelihood Training of Score-Based Diffusion Models This repo contains the official implementation for the paper Maximum Likelihood Training o

A module for use with Pygame. Includes fully customisable buttons, textboxes, sliders and many more, as well as the ability to create and run animations on these widgets.

Pygame Widgets A helper module for common widgets that may be required in developing applications with Pygame. It supports fully customisable buttons,

rastrainer is a QGIS plugin to training remote sensing semantic segmentation model based on PaddlePaddle.

rastrainer rastrainer is a QGIS plugin to training remote sensing semantic segmentation model based on PaddlePaddle. UI TODO Init UI. Add Block. Add l

in-progress decompilation of Gauntlet Legends decompression code on the N64

Gauntlet-Legends A in-progress decompilation of Gauntlet-Legends (N64) decompression code. This project currently supports the US release. Building (L

Unimodal Face Classification with Multimodal Training

Unimodal Face Classification with Multimodal Training This is a PyTorch implementation of the following paper: Unimodal Face Classification with Multi

Official Implementation of SimIPU: Simple 2D Image and 3D Point Cloud Unsupervised Pre-Training for Spatial-Aware Visual Representations

Official Implementation of SimIPU SimIPU: Simple 2D Image and 3D Point Cloud Unsupervised Pre-Training for Spatial-Aware Visual Representations Since

VarCLR: Variable Semantic Representation Pre-training via Contrastive Learning

VarCLR: Variable Representation Pre-training via Contrastive Learning New: Paper accepted by ICSE 2022. Preprint at arXiv! This repository contain

iBOT: Image BERT Pre-Training with Online Tokenizer

Image BERT Pre-Training with iBOT Official PyTorch implementation and pretrained models for paper iBOT: Image BERT Pre-Training with Online Tokenizer.

Code for CVPR2019 paper《Unequal Training for Deep Face Recognition with Long Tailed Noisy Data》

Unequal-Training-for-Deep-Face-Recognition-with-Long-Tailed-Noisy-Data. This is the code of CVPR 2019 paper《Unequal Training for Deep Face Recognition

Conceptual 12M is a dataset containing (image-URL, caption) pairs collected for vision-and-language pre-training.

Conceptual 12M We introduce the Conceptual 12M (CC12M), a dataset with ~12 million image-text pairs meant to be used for vision-and-language pre-train

Repo for CReST: A Class-Rebalancing Self-Training Framework for Imbalanced Semi-Supervised Learning

CReST in Tensorflow 2 Code for the paper: "CReST: A Class-Rebalancing Self-Training Framework for Imbalanced Semi-Supervised Learning" by Chen Wei, Ki

Uni-Fold: Training your own deep protein-folding models.

Uni-Fold: Training your own deep protein-folding models. This package provides and implementation of a trainable, Transformer-based deep protein foldi

Torchrecipes provides a set of reproduci-able, re-usable, ready-to-run RECIPES for training different types of models, across multiple domains, on PyTorch Lightning.

Recipes are a standard, well supported set of blueprints for machine learning engineers to rapidly train models using the latest research techniques without significant engineering overhead.Specifically, recipes aims to provide- Consistent access to pre-trained SOTA models ready for production- Reference implementations for SOTA research reproducibility, and infrastructure to guarantee correctness, efficiency, and interoperability.

iBOT: Image BERT Pre-Training with Online Tokenizer

Image BERT Pre-Training with iBOT Official PyTorch implementation and pretrained models for paper iBOT: Image BERT Pre-Training with Online Tokenizer.

An end to end ASR Transformer model training repo

END TO END ASR TRANSFORMER 本项目基于transformer 6*encoder+6*decoder的基本结构构造的端到端的语音识别系统 Model Instructions 1.数据准备: 自行下载数据,遵循文件结构如下: ├── data │ ├── train │

Training code for Korean multi-class sentiment analysis

KoSentimentAnalysis Bert implementation for the Korean multi-class sentiment analysis 왜 한국어 감정 다중분류 모델은 거의 없는 것일까?에서 시작된 프로젝트 Environment: Pytorch, Da

This repository is an implementation of paper : Improving the Training of Graph Neural Networks with Consistency Regularization

CRGNN Paper : Improving the Training of Graph Neural Networks with Consistency Regularization Environments Implementing environment: GeForce RTX™ 3090

GLIP: Grounded Language-Image Pre-training

GLIP: Grounded Language-Image Pre-training Updates 12/06/2021: GLIP paper on arxiv https://arxiv.org/abs/2112.03857. Code and Model are under internal

This repository is an implementation of paper : Improving the Training of Graph Neural Networks with Consistency Regularization

CRGNN Paper : Improving the Training of Graph Neural Networks with Consistency Regularization Environments Implementing environment: GeForce RTX™ 3090

Post-Training Quantization for Vision transformers.

PTQ4ViT Post-Training Quantization Framework for Vision Transformers. We use the twin uniform quantization method to reduce the quantization error on

Uni-Fold: Training your own deep protein-folding models

Uni-Fold: Training your own deep protein-folding models. This package provides an implementation of a trainable, Transformer-based deep protein foldin

The easiest tool for extracting radiomics features and training ML models on them.

Simple pipeline for experimenting with radiomics features Installation git clone https://github.com/piotrekwoznicki/ClassyRadiomics.git cd classrad pi

Training Structured Neural Networks Through Manifold Identification and Variance Reduction

Training Structured Neural Networks Through Manifold Identification and Variance Reduction This repository is a pytorch implementation of the Regulari