373 Repositories

Python pre-commit-ci-config Libraries

The Hailo Model Zoo includes pre-trained models and a full building and evaluation environment

Hailo Model Zoo The Hailo Model Zoo provides pre-trained models for high-performance deep learning applications. Using the Hailo Model Zoo you can mea

Official repository for the paper, MidiBERT-Piano: Large-scale Pre-training for Symbolic Music Understanding.

MidiBERT-Piano Authors: Yi-Hui (Sophia) Chou, I-Chun (Bronwin) Chen Introduction This is the official repository for the paper, MidiBERT-Piano: Large-

Cross-platform .NET Core pre-commit hooks

dotnet-core-pre-commit Cross-platform .NET Core pre-commit hooks How to use Add this to your .pre-commit-config.yaml - repo: https://github.com/juan

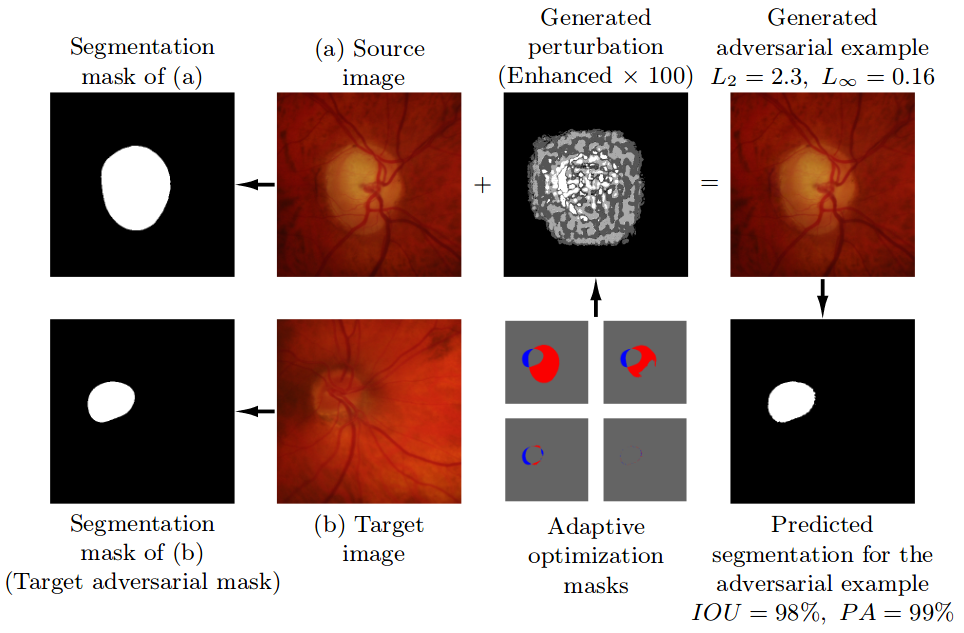

Pre-trained model, code, and materials from the paper "Impact of Adversarial Examples on Deep Learning Models for Biomedical Image Segmentation" (MICCAI 2019).

Adaptive Segmentation Mask Attack This repository contains the implementation of the Adaptive Segmentation Mask Attack (ASMA), a targeted adversarial

Tomli is a Python library for parsing TOML

Tomli A lil' TOML parser Table of Contents generated with mdformat-toc Intro Installation Usage Parse a TOML string Parse a TOML file Handle invalid T

Source code for the paper "PLOME: Pre-training with Misspelled Knowledge for Chinese Spelling Correction" in ACL2021

PLOME:Pre-training with Misspelled Knowledge for Chinese Spelling Correction (ACL2021) This repository provides the code and data of the work in ACL20

Source code for paper: Knowledge Inheritance for Pre-trained Language Models

Knowledge-Inheritance Source code paper: Knowledge Inheritance for Pre-trained Language Models (preprint). The trained model parameters (in Fairseq fo

TAP: Text-Aware Pre-training for Text-VQA and Text-Caption, CVPR 2021 (Oral)

TAP: Text-Aware Pre-training TAP: Text-Aware Pre-training for Text-VQA and Text-Caption by Zhengyuan Yang, Yijuan Lu, Jianfeng Wang, Xi Yin, Dinei Flo

CVPR 2021 Official Pytorch Code for UC2: Universal Cross-lingual Cross-modal Vision-and-Language Pre-training

UC2 UC2: Universal Cross-lingual Cross-modal Vision-and-Language Pre-training Mingyang Zhou, Luowei Zhou, Shuohang Wang, Yu Cheng, Linjie Li, Zhou Yu,

Tomli is a Python library for parsing TOML. Tomli is fully compatible with TOML v1.0.0.

Tomli A lil' TOML parser Table of Contents generated with mdformat-toc Intro Installation Usage Parse a TOML string Parse a TOML file Handle invalid T

TunBERT is the first release of a pre-trained BERT model for the Tunisian dialect using a Tunisian Common-Crawl-based dataset.

TunBERT is the first release of a pre-trained BERT model for the Tunisian dialect using a Tunisian Common-Crawl-based dataset. TunBERT was applied to three NLP downstream tasks: Sentiment Analysis (SA), Tunisian Dialect Identification (TDI) and Reading Comprehension Question-Answering (RCQA)

VIMPAC: Video Pre-Training via Masked Token Prediction and Contrastive Learning

This is a release of our VIMPAC paper to illustrate the implementations. The pretrained checkpoints and scripts will be soon open-sourced in HuggingFace transformers.

We envision models that are pre-trained on a vast range of domain-relevant tasks to become key for molecule property prediction

We envision models that are pre-trained on a vast range of domain-relevant tasks to become key for molecule property prediction. This repository aims to give easy access to state-of-the-art pre-trained models.

Your missing PO formatter and linter

pofmt Your missing PO formatter and linter Features Wrap msgid and msgstr with a constant max width. Can act as a pre-commit hook. Display lint errors

Code for CPM-2 Pre-Train

CPM-2 Pre-Train Pre-train CPM-2 此分支为110亿非 MoE 模型的预训练代码,MoE 模型的预训练代码请切换到 moe 分支 CPM-2技术报告请参考link。 0 模型下载 请在智源资源下载页面进行申请,文件介绍如下: 文件名 描述 参数大小 100000.tar

A pre-attack hacker tool which aims to find out sensitives comments in HTML comment tag and to help on reconnaissance process

Find Out in Comment Find sensetive comment out in HTML ⚈ About This is a pre-attack hacker tool that searches for sensitives words in HTML comments ta

Code + pre-trained models for the paper Keeping Your Eye on the Ball Trajectory Attention in Video Transformers

Motionformer This is an official pytorch implementation of paper Keeping Your Eye on the Ball: Trajectory Attention in Video Transformers. In this rep

The official PyTorch implementation of recent paper - SAINT: Improved Neural Networks for Tabular Data via Row Attention and Contrastive Pre-Training

This repository is the official PyTorch implementation of SAINT. Find the paper on arxiv SAINT: Improved Neural Networks for Tabular Data via Row Atte

Today I Commit (1일 1커밋) 챌린지 알림 봇

Today I Commit Challenge 1일1커밋 챌린지를 위한 알림 봇 config.py github_token = "github private access key" slack_token = "slack authorization token" channel = "

Source code and dataset for ACL2021 paper: "ERICA: Improving Entity and Relation Understanding for Pre-trained Language Models via Contrastive Learning".

ERICA Source code and dataset for ACL2021 paper: "ERICA: Improving Entity and Relation Understanding for Pre-trained Language Models via Contrastive L

Pre-Auth Blind NoSQL Injection leading to Remote Code Execution in Rocket Chat 3.12.1

CVE-2021-22911 Pre-Auth Blind NoSQL Injection leading to Remote Code Execution in Rocket Chat 3.12.1 The getPasswordPolicy method is vulnerable to NoS

![[CVPR 2021]](https://github.com/VITA-Group/CV_LTH_Pre-training/raw/main/Figs/Teaser.png)

[CVPR 2021] "The Lottery Tickets Hypothesis for Supervised and Self-supervised Pre-training in Computer Vision Models" Tianlong Chen, Jonathan Frankle, Shiyu Chang, Sijia Liu, Yang Zhang, Michael Carbin, Zhangyang Wang

The Lottery Tickets Hypothesis for Supervised and Self-supervised Pre-training in Computer Vision Models Codes for this paper The Lottery Tickets Hypo

Generate config files and qr codes for wireguard vpn

wireguard config generator for python Generate config files and qr codes for wireguard vpn You will need to install qrcode and pillow in python and yo

Chinese clinical named entity recognition using pre-trained BERT model

Chinese clinical named entity recognition (CNER) using pre-trained BERT model Introduction Code for paper Chinese clinical named entity recognition wi

This repo contains the official code and pre-trained models for the Dynamic Vision Transformer (DVT).

Dynamic-Vision-Transformer (Pytorch) This repo contains the official code and pre-trained models for the Dynamic Vision Transformer (DVT). Not All Ima

Simple dotfile pre-processor with a per-file configuration

ix (eeks) Simple dotfile pre-processor with a per-file configuration Summary (TL;DR) ix.py is all you need config is an ini file. files to be processe

Code for CPM-2 Pre-Train

CPM-2 Pre-Train Pre-train CPM-2 此分支为110亿非 MoE 模型的预训练代码,MoE 模型的预训练代码请切换到 moe 分支 CPM-2技术报告请参考link。 0 模型下载 请在智源资源下载页面进行申请,文件介绍如下: 文件名 描述 参数大小 100000.tar

Third party Pytorch implement of Image Processing Transformer (Pre-Trained Image Processing Transformer arXiv:2012.00364v2)

ImageProcessingTransformer Third party Pytorch implement of Image Processing Transformer (Pre-Trained Image Processing Transformer arXiv:2012.00364v2)

(CVPR2021) Kaleido-BERT: Vision-Language Pre-training on Fashion Domain

Kaleido-BERT: Vision-Language Pre-training on Fashion Domain Mingchen Zhuge*, Dehong Gao*, Deng-Ping Fan#, Linbo Jin, Ben Chen, Haoming Zhou, Minghui

![[CVPR'21 Oral] Seeing Out of tHe bOx: End-to-End Pre-training for Vision-Language Representation Learning](https://github.com/researchmm/soho/raw/master/resources/soho.png)

[CVPR'21 Oral] Seeing Out of tHe bOx: End-to-End Pre-training for Vision-Language Representation Learning

Seeing Out of tHe bOx: End-to-End Pre-training for Vision-Language Representation Learning [CVPR'21, Oral] By Zhicheng Huang*, Zhaoyang Zeng*, Yupan H

Unsupervised Pre-training for Person Re-identification (LUPerson)

LUPerson Unsupervised Pre-training for Person Re-identification (LUPerson). The repository is for our CVPR2021 paper Unsupervised Pre-training for Per

Standalone pre-training recipe with JAX+Flax

Sabertooth Sabertooth is standalone pre-training recipe based on JAX+Flax, with data pipelines implemented in Rust. It runs on CPU, GPU, and/or TPU, b

Simple but maybe too simple config management through python data classes. We use it for machine learning.

👩✈️ Coqpit Simple, light-weight and no dependency config handling through python data classes with to/from JSON serialization/deserialization. Curre

Transparently load variables from environment or JSON/YAML file.

A thin wrapper over Pydantic's settings management. Allows you to define configuration variables and load them from environment or JSON/YAML file. Also generates initial configuration files and documentation for your defined configuration.

One Stop Anomaly Shop: Anomaly detection using two-phase approach: (a) pre-labeling using statistics, Natural Language Processing and static rules; (b) anomaly scoring using supervised and unsupervised machine learning.

One Stop Anomaly Shop (OSAS) Quick start guide Step 1: Get/build the docker image Option 1: Use precompiled image (might not reflect latest changes):

Pytorch implementation of our paper under review — Lottery Jackpots Exist in Pre-trained Models

Lottery Jackpots Exist in Pre-trained Models (Paper Link) Requirements Python = 3.7.4 Pytorch = 1.6.1 Torchvision = 0.4.1 Reproduce the Experiment

SSH-Restricted deploys an SSH compliance rule (AWS Config) with auto-remediation via AWS Lambda if SSH access is public.

SSH-Restricted SSH-Restricted deploys an SSH compliance rule with auto-remediation via AWS Lambda if SSH access is public. SSH-Auto-Restricted checks

Simple, light-weight config handling through python data classes with to/from JSON serialization/deserialization.

Simple but maybe too simple config management through python data classes. We use it for machine learning.

Official code of our work, Unified Pre-training for Program Understanding and Generation [NAACL 2021].

PLBART Code pre-release of our work, Unified Pre-training for Program Understanding and Generation accepted at NAACL 2021. Note. A detailed documentat

A modern, geometric typeface by @chrismsimpson (last commit @ 85fa625 Jun 9, 2020 before deletion)

Metropolis A modern, geometric typeface. Influenced by other popular geometric, minimalist sans-serif typefaces of the new millenium. Designed for opt

《K-Adapter: Infusing Knowledge into Pre-Trained Models with Adapters》(2020)

K-Adapter: Infusing Knowledge into Pre-Trained Models with Adapters This repository is the implementation of the paper "K-Adapter: Infusing Knowledge

Code for pre-training CharacterBERT models (as well as BERT models).

Pre-training CharacterBERT (and BERT) This is a repository for pre-training BERT and CharacterBERT. DISCLAIMER: The code was largely adapted from an o

Official repository for "PAIR: Planning and Iterative Refinement in Pre-trained Transformers for Long Text Generation"

pair-emnlp2020 Official repository for the paper: Xinyu Hua and Lu Wang: PAIR: Planning and Iterative Refinement in Pre-trained Transformers for Long

Code, Data and Demo for Paper: Controllable Generation from Pre-trained Language Models via Inverse Prompting

InversePrompting Paper: Controllable Generation from Pre-trained Language Models via Inverse Prompting Code: The code is provided in the "chinese_ip"

Top2Vec is an algorithm for topic modeling and semantic search.

Top2Vec is an algorithm for topic modeling and semantic search. It automatically detects topics present in text and generates jointly embedded topic, document and word vectors.

This tool extracts Credit card numbers, NTLM(DCE-RPC, HTTP, SQL, LDAP, etc), Kerberos (AS-REQ Pre-Auth etype 23), HTTP Basic, SNMP, POP, SMTP, FTP, IMAP, etc from a pcap file or from a live interface.

This tool extracts Credit card numbers, NTLM(DCE-RPC, HTTP, SQL, LDAP, etc), Kerberos (AS-REQ Pre-Auth etype 23), HTTP Basic, SNMP, POP, SMTP, FTP, IMAP, etc from a pcap file or from a live interface.

source code and pre-trained/fine-tuned checkpoint for NAACL 2021 paper LightningDOT

LightningDOT: Pre-training Visual-Semantic Embeddings for Real-Time Image-Text Retrieval This repository contains source code and pre-trained/fine-tun

Minimal, self-hosted, 0-config alternative to ngrok. Caddy+OpenSSH+50 lines of Python.

If you have a webserver running on one computer (say your development laptop), and you want to expose it securely (ie HTTPS) via a public URL, SirTunnel allows you to easily do that.

PyTorch implementation of "Contrast to Divide: self-supervised pre-training for learning with noisy labels"

Contrast to Divide: self-supervised pre-training for learning with noisy labels This is an official implementation of "Contrast to Divide: self-superv

Monocular Depth Estimation - Weighted-average prediction from multiple pre-trained depth estimation models

merged_depth runs (1) AdaBins, (2) DiverseDepth, (3) MiDaS, (4) SGDepth, and (5) Monodepth2, and calculates a weighted-average per-pixel absolute dept

My personal Home Assistant configuration.

About This is my personal Home Assistant configuration. My guiding princile is to have full local control of all my devices. I intend everything to ru

Pre-Recognize Library - library with algorithms for improving OCR quality.

PRLib - Pre-Recognition Library. The main aim of the library - prepare image for recogntion. Image processing can really help to improve recognition q

Use Convolutional Recurrent Neural Network to recognize the Handwritten line text image without pre segmentation into words or characters. Use CTC loss Function to train.

Handwritten Line Text Recognition using Deep Learning with Tensorflow Description Use Convolutional Recurrent Neural Network to recognize the Handwrit

Official code of our work, Unified Pre-training for Program Understanding and Generation [NAACL 2021].

PLBART Code pre-release of our work, Unified Pre-training for Program Understanding and Generation accepted at NAACL 2021. Note. A detailed documentat

ProxyLogon Pre-Auth SSRF To Arbitrary File Write

ProxyLogon Pre-Auth SSRF To Arbitrary File Write For Education and Research Usage: C:\python proxylogon.py mail.evil.corp [email protected] At

![[CVPR 2021] Involution: Inverting the Inherence of Convolution for Visual Recognition, a brand new neural operator](https://github.com/d-li14/involution/raw/main/fig/involution.png)

[CVPR 2021] Involution: Inverting the Inherence of Convolution for Visual Recognition, a brand new neural operator

involution Official implementation of a neural operator as described in Involution: Inverting the Inherence of Convolution for Visual Recognition (CVP

![[CVPR2021 Oral] UP-DETR: Unsupervised Pre-training for Object Detection with Transformers](https://github.com/dddzg/up-detr/raw/master/.github/UP-DETR.png)

[CVPR2021 Oral] UP-DETR: Unsupervised Pre-training for Object Detection with Transformers

UP-DETR: Unsupervised Pre-training for Object Detection with Transformers This is the official PyTorch implementation and models for UP-DETR paper: @a

Code associated with the "Data Augmentation using Pre-trained Transformer Models" paper

Data Augmentation using Pre-trained Transformer Models Code associated with the Data Augmentation using Pre-trained Transformer Models paper Code cont

Implementation of COCO-LM, Correcting and Contrasting Text Sequences for Language Model Pretraining, in Pytorch

COCO LM Pretraining (wip) Implementation of COCO-LM, Correcting and Contrasting Text Sequences for Language Model Pretraining, in Pytorch. They were a

validation for pre-commit.ci configuration

pre-commit-ci-config validation for pre-commit.ci configuration installation pip install pre-commit-ci-config api pre_commit_ci_config.SCHEMA a cfgv s

Pre-commit hook for upgrading type hints

This is a pre-commit hook configured to automatically upgrade your type hints to the new native types implemented in PEP 585.

Pre-trained NFNets with 99% of the accuracy of the official paper

NFNet Pytorch Implementation This repo contains pretrained NFNet models F0-F6 with high ImageNet accuracy from the paper High-Performance Large-Scale

:fishing_pole_and_fish: List of `pre-commit` hooks to ensure the quality of your `dbt` projects.

pre-commit-dbt List of pre-commit hooks to ensure the quality of your dbt projects. BETA NOTICE: This tool is still BETA and may have some bugs, so pl

GAP-text2SQL: Learning Contextual Representations for Semantic Parsing with Generation-Augmented Pre-Training

GAP-text2SQL: Learning Contextual Representations for Semantic Parsing with Generation-Augmented Pre-Training Code and model from our AAAI 2021 paper

Run `black` on python code blocks in documentation files

blacken-docs Run black on python code blocks in documentation files. install pip install blacken-docs usage blacken-docs provides a single executable

A tool (and pre-commit hook) to automatically add trailing commas to calls and literals.

add-trailing-comma A tool (and pre-commit hook) to automatically add trailing commas to calls and literals. Installation pip install add-trailing-comm

A tool (and pre-commit hook) to automatically upgrade syntax for newer versions of the language.

pyupgrade A tool (and pre-commit hook) to automatically upgrade syntax for newer versions of the language. Installation pip install pyupgrade As a pre

A drop-in replacement for argparse that allows options to also be set via config files and/or environment variables.

ConfigArgParse Overview Applications with more than a handful of user-settable options are best configured through a combination of command line args,

Configuration Management for Python ⚙

dynaconf - Configuration Management for Python. Features Inspired by the 12-factor application guide Settings management (default values, validation,

Type-safe YAML parser and validator.

StrictYAML StrictYAML is a type-safe YAML parser that parses and validates a restricted subset of the YAML specification. Priorities: Beautiful API Re

Utilities for implementing a modified pre-order traversal tree in django.

django-mptt Utilities for implementing Modified Preorder Tree Traversal with your Django Models and working with trees of Model instances. Project hom

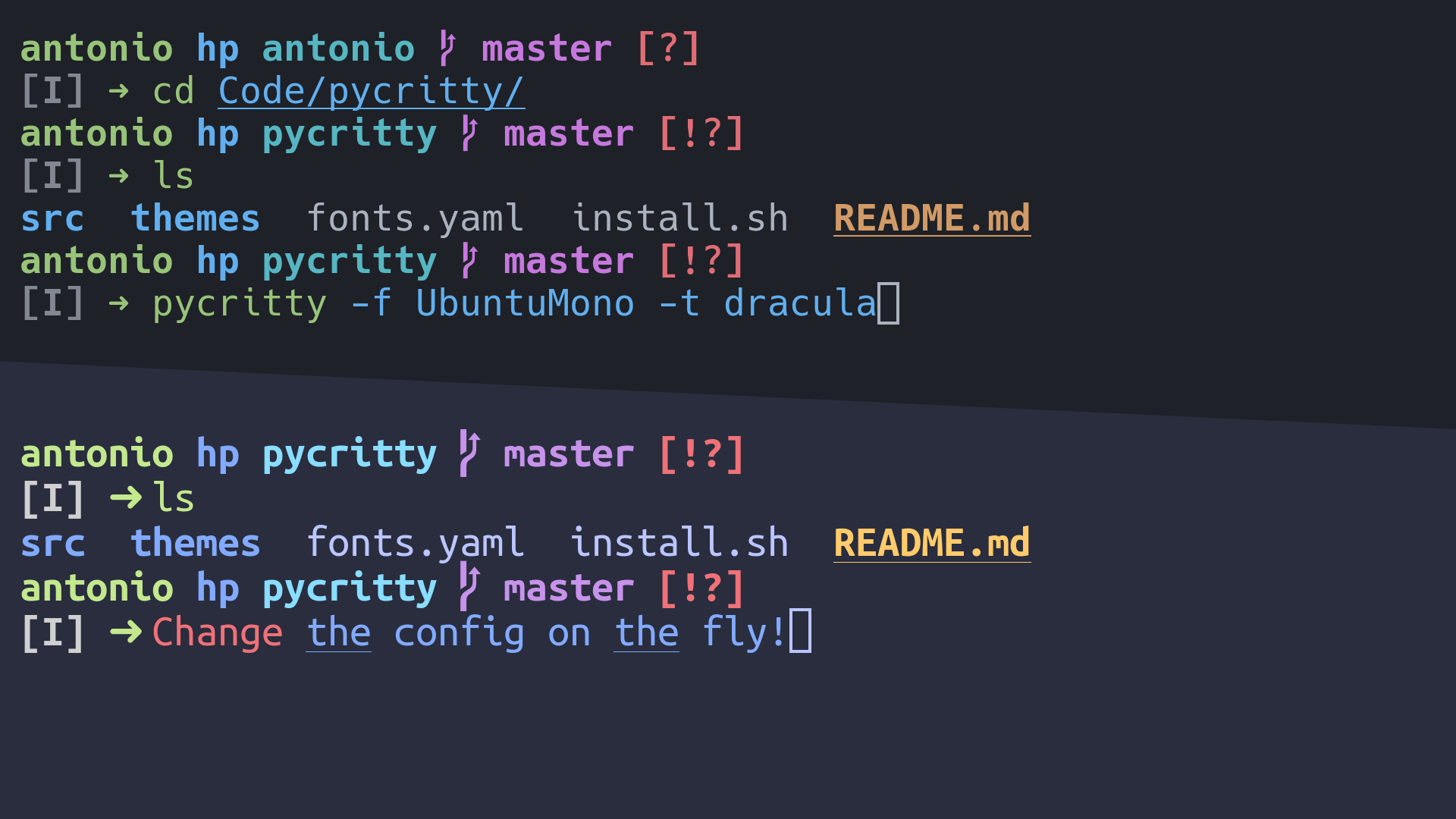

CLI program that allows you to change your Alacritty config with one command without editing the config file.

Pycritty Change your alacritty config on the fly! Installation: pip install pycritty By default, only the program itself will be installed, but you ca

Strict separation of config from code.

Python Decouple: Strict separation of settings from code Decouple helps you to organize your settings so that you can change parameters without having