941 Repositories

Python quantization-aware-training Libraries

training script for space time memory network

Trainig Script for Space Time Memory Network This codebase implemented training code for Space Time Memory Network with some cyclic features. Requirem

Training Certifiably Robust Neural Networks with Efficient Local Lipschitz Bounds (Local-Lip)

Training Certifiably Robust Neural Networks with Efficient Local Lipschitz Bounds (Local-Lip) Introduction TL;DR: We propose an efficient and trainabl

Elucidating Robust Learning with Uncertainty-Aware Corruption Pattern Estimation

Elucidating Robust Learning with Uncertainty-Aware Corruption Pattern Estimation Introduction 📋 Official implementation of Explainable Robust Learnin

This codebase facilitates fast experimentation of differentially private training of Hugging Face transformers.

private-transformers This codebase facilitates fast experimentation of differentially private training of Hugging Face transformers. What is this? Why

Coin-based opinion monitoring system

介绍 本仓库提供了基于币安 (Binance) 的二级市场舆情系统,可以根据自己的需求修改代码,设定各类告警提示 代码结构 binance.py - 与币安API交互 data_loader.py - 数据相关的读写 monitor.py - 监控的核心方法实现 analyze.py - 基于历史数

An interactive pygame implementation of quadtree spatial quantization

QuadTree-py An interactive pygame implementation of quadtree spatial quantization Contents Installation Usage API Reference TODO Installation Clone th

Enhancing Knowledge Tracing via Adversarial Training

Enhancing Knowledge Tracing via Adversarial Training This repository contains source code for the paper "Enhancing Knowledge Tracing via Adversarial T

SEC'21: Sparse Bitmap Compression for Memory-Efficient Training onthe Edge

Training Deep Learning Models on The Edge Training on the Edge enables continuous learning from new data for deployed neural networks on memory-constr

A Shading-Guided Generative Implicit Model for Shape-Accurate 3D-Aware Image Synthesis

A Shading-Guided Generative Implicit Model for Shape-Accurate 3D-Aware Image Synthesis Figure: Shape-Accurate 3D-Aware Image Synthesis. A Shading-Guid

Implementation of average- and worst-case robust flatness measures for adversarial training.

Relating Adversarially Robust Generalization to Flat Minima This repository contains code corresponding to the MLSys'21 paper: D. Stutz, M. Hein, B. S

A complete end-to-end machine learning portal that covers processes starting from model training to the model predicting results using FastAPI.

Machine Learning Portal Goal Application Workflow Process Design Live Project Goal A complete end-to-end machine learning portal that covers processes

Chinese NER(Named Entity Recognition) using BERT(Softmax, CRF, Span)

Chinese NER(Named Entity Recognition) using BERT(Softmax, CRF, Span)

Open source single image super-resolution toolbox containing various functionality for training a diverse number of state-of-the-art super-resolution models. Also acts as the companion code for the IEEE signal processing letters paper titled 'Improving Super-Resolution Performance using Meta-Attention Layers’.

Deep-FIR Codebase - Super Resolution Meta Attention Networks About This repository contains the main coding framework accompanying our work on meta-at

BMVC 2021 Oral: code for BI-GCN: Boundary-Aware Input-Dependent Graph Convolution for Biomedical Image Segmentation

BMVC 2021 BI-GConv: Boundary-Aware Input-Dependent Graph Convolution for Biomedical Image Segmentation Necassary Dependencies: PyTorch 1.2.0 Python 3.

OREO: Object-Aware Regularization for Addressing Causal Confusion in Imitation Learning (NeurIPS 2021)

OREO: Object-Aware Regularization for Addressing Causal Confusion in Imitation Learning (NeurIPS 2021) Video demo We here provide a video demo from co

Implementation for On Provable Benefits of Depth in Training Graph Convolutional Networks

Implementation for On Provable Benefits of Depth in Training Graph Convolutional Networks Setup This implementation is based on PyTorch = 1.0.0. Smal

Colossal-AI: A Unified Deep Learning System for Large-Scale Parallel Training

ColossalAI An integrated large-scale model training system with efficient parallelization techniques. arXiv: Colossal-AI: A Unified Deep Learning Syst

Data, model training, and evaluation code for "PubTables-1M: Towards a universal dataset and metrics for training and evaluating table extraction models".

PubTables-1M This repository contains training and evaluation code for the paper "PubTables-1M: Towards a universal dataset and metrics for training a

Predicting lncRNA–protein interactions based on graph autoencoders and collaborative training

Predicting lncRNA–protein interactions based on graph autoencoders and collaborative training Code for our paper "Predicting lncRNA–protein interactio

Colossal-AI: A Unified Deep Learning System for Large-Scale Parallel Training

ColossalAI An integrated large-scale model training system with efficient parallelization techniques Installation PyPI pip install colossalai Install

AugMax: Adversarial Composition of Random Augmentations for Robust Training

[NeurIPS'21] "AugMax: Adversarial Composition of Random Augmentations for Robust Training" by Haotao Wang, Chaowei Xiao, Jean Kossaifi, Zhiding Yu, Animashree Anandkumar, and Zhangyang Wang.

Exponential Graph is Provably Efficient for Decentralized Deep Training

Exponential Graph is Provably Efficient for Decentralized Deep Training This code repository is for the paper Exponential Graph is Provably Efficient

![[NeurIPS'21]](https://github.com/VITA-Group/AugMax/raw/main/images/AugMax.PNG)

[NeurIPS'21] "AugMax: Adversarial Composition of Random Augmentations for Robust Training" by Haotao Wang, Chaowei Xiao, Jean Kossaifi, Zhiding Yu, Animashree Anandkumar, and Zhangyang Wang.

AugMax: Adversarial Composition of Random Augmentations for Robust Training Haotao Wang, Chaowei Xiao, Jean Kossaifi, Zhiding Yu, Anima Anandkumar, an

[NeurIPS 2021] Source code for the paper "Qu-ANTI-zation: Exploiting Neural Network Quantization for Achieving Adversarial Outcomes"

Qu-ANTI-zation This repository contains the code for reproducing the results of our paper: Qu-ANTI-zation: Exploiting Quantization Artifacts for Achie

Improving Transferability of Representations via Augmentation-Aware Self-Supervision

Improving Transferability of Representations via Augmentation-Aware Self-Supervision Accepted to NeurIPS 2021 TL;DR: Learning augmentation-aware infor

In this project I played with mlflow, streamlit and fastapi to create a training and prediction app on digits

Fastapi + MLflow + streamlit Setup env. I hope I covered all. pip install -r requirements.txt Start app Go in the root dir and run these Streamlit str

Qimera: Data-free Quantization with Synthetic Boundary Supporting Samples

Qimera: Data-free Quantization with Synthetic Boundary Supporting Samples This repository is the official implementation of paper [Qimera: Data-free Q

YOLOv5 Series Multi-backbone, Pruning and quantization Compression Tool Box.

YOLOv5-Compression Update News Requirements 环境安装 pip install -r requirements.txt Evaluation metric Visdrone Model mAP mAP@50 Parameters(M) GFLOPs FPS@

Asterisk is a framework to generate high-quality training datasets at scale

Asterisk is a framework to generate high-quality training datasets at scale

![[NeurIPS 2021] Better Safe Than Sorry: Preventing Delusive Adversaries with Adversarial Training](https://github.com/TLMichael/Delusive-Adversary/raw/main/fig/Thumbnail.png)

[NeurIPS 2021] Better Safe Than Sorry: Preventing Delusive Adversaries with Adversarial Training

Better Safe Than Sorry: Preventing Delusive Adversaries with Adversarial Training Code for NeurIPS 2021 paper "Better Safe Than Sorry: Preventing Delu

code for generating data set ES-ImageNet with corresponding training code

es-imagenet-master code for generating data set ES-ImageNet with corresponding training code dataset generator some codes of ODG algorithm The variabl

Building blocks for uncertainty-aware cycle consistency presented at NeurIPS'21.

UncertaintyAwareCycleConsistency This repository provides the building blocks and the API for the work presented in the NeurIPS'21 paper Robustness vi

A Context-aware Visual Attention-based training pipeline for Object Detection from a Webpage screenshot!

CoVA: Context-aware Visual Attention for Webpage Information Extraction Abstract Webpage information extraction (WIE) is an important step to create k

Code for the prototype tool in our paper "CoProtector: Protect Open-Source Code against Unauthorized Training Usage with Data Poisoning".

CoProtector Code for the prototype tool in our paper "CoProtector: Protect Open-Source Code against Unauthorized Training Usage with Data Poisoning".

DECAF: Generating Fair Synthetic Data Using Causally-Aware Generative Networks

DECAF (DEbiasing CAusal Fairness) Code Author: Trent Kyono This repository contains the code used for the "DECAF: Generating Fair Synthetic Data Using

Code for the prototype tool in our paper "CoProtector: Protect Open-Source Code against Unauthorized Training Usage with Data Poisoning".

CoProtector Code for the prototype tool in our paper "CoProtector: Protect Open-Source Code against Unauthorized Training Usage with Data Poisoning".

This is the official source code for SLATE. We provide the code for the model, the training code, and a dataset loader for the 3D Shapes dataset. This code is implemented in Pytorch.

SLATE This is the official source code for SLATE. We provide the code for the model, the training code and a dataset loader for the 3D Shapes dataset.

PyTorch Implementation of ByteDance's Cross-speaker Emotion Transfer Based on Speaker Condition Layer Normalization and Semi-Supervised Training in Text-To-Speech

Cross-Speaker-Emotion-Transfer - PyTorch Implementation PyTorch Implementation of ByteDance's Cross-speaker Emotion Transfer Based on Speaker Conditio

PyTorch Code for NeurIPS 2021 paper Anti-Backdoor Learning: Training Clean Models on Poisoned Data.

Anti-Backdoor Learning PyTorch Code for NeurIPS 2021 paper Anti-Backdoor Learning: Training Clean Models on Poisoned Data. Check the unlearning effect

Efficient Training of Visual Transformers with Small Datasets

Official codes for "Efficient Training of Visual Transformers with Small Datasets", NerIPS 2021.

Training Very Deep Neural Networks Without Skip-Connections

DiracNets v2 update (January 2018): The code was updated for DiracNets-v2 in which we removed NCReLU by adding per-channel a and b multipliers without

Training RNNs as Fast as CNNs

News SRU++, a new SRU variant, is released. [tech report] [blog] The experimental code and SRU++ implementation are available on the dev branch which

CVNets: A library for training computer vision networks

CVNets: A library for training computer vision networks This repository contains the source code for training computer vision models. Specifically, it

Official implementation for the paper: "Multi-label Classification with Partial Annotations using Class-aware Selective Loss"

Multi-label Classification with Partial Annotations using Class-aware Selective Loss Paper | Pretrained models Official PyTorch Implementation Emanuel

BERT model training impelmentation using 1024 A100 GPUs for MLPerf Training v1.1

Pre-trained checkpoint and bert config json file Location of checkpoint and bert config json file This MLCommons members Google Drive location contain

For auto aligning, cropping, and scaling HR and LR images for training image based neural networks

ImgAlign For auto aligning, cropping, and scaling HR and LR images for training image based neural networks Usage Make sure OpenCV is installed, 'pip

NAS-HPO-Bench-II is the first benchmark dataset for joint optimization of CNN and training HPs.

NAS-HPO-Bench-II API Overview NAS-HPO-Bench-II is the first benchmark dataset for joint optimization of CNN and training HPs. It helps a fair and low-

Improving Non-autoregressive Generation with Mixup Training

MIST Training MIST TRAIN_FILE=/your/path/to/train.json VALID_FILE=/your/path/to/valid.json OUTPUT_DIR=/your/path/to/save_checkpoints CACHE_DIR=/your/p

Official implementation for the paper: Multi-label Classification with Partial Annotations using Class-aware Selective Loss

Multi-label Classification with Partial Annotations using Class-aware Selective Loss Paper | Pretrained models Official PyTorch Implementation Emanuel

NeurIPS'21: Probabilistic Margins for Instance Reweighting in Adversarial Training (Pytorch implementation).

source code for NeurIPS21 paper robabilistic Margins for Instance Reweighting in Adversarial Training

Code release for "Cycle Self-Training for Domain Adaptation" (NeurIPS 2021)

CST Code release for "Cycle Self-Training for Domain Adaptation" (NeurIPS 2021) Prerequisites torch=1.7.0 torchvision qpsolvers numpy prettytable tqd

Code and datasets for the paper "KnowPrompt: Knowledge-aware Prompt-tuning with Synergistic Optimization for Relation Extraction"

KnowPrompt Code and datasets for our paper "KnowPrompt: Knowledge-aware Prompt-tuning with Synergistic Optimization for Relation Extraction" Requireme

A library for preparing, training, and evaluating scalable deep learning hybrid recommender systems using PyTorch.

collie Collie is a library for preparing, training, and evaluating implicit deep learning hybrid recommender systems, named after the Border Collie do

QuakeLabeler is a Python package to create and manage your seismic training data, processes, and visualization in a single place — so you can focus on building the next big thing.

QuakeLabeler Quake Labeler was born from the need for seismologists and developers who are not AI specialists to easily, quickly, and independently bu

🔥🔥High-Performance Face Recognition Library on PaddlePaddle & PyTorch🔥🔥

face.evoLVe: High-Performance Face Recognition Library based on PaddlePaddle & PyTorch Evolve to be more comprehensive, effective and efficient for fa

PyTorch implementation of the paper Ultra Fast Structure-aware Deep Lane Detection

PyTorch implementation of the paper Ultra Fast Structure-aware Deep Lane Detection

The official code for PRIMER: Pyramid-based Masked Sentence Pre-training for Multi-document Summarization

PRIMER The official code for PRIMER: Pyramid-based Masked Sentence Pre-training for Multi-document Summarization. PRIMER is a pre-trained model for mu

![Official PyTorch Implementation of Mask-aware IoU and maYOLACT Detector [BMVC2021]](https://github.com/kemaloksuz/Mask-aware-IoU/raw/master/assets/Teaser.png)

Official PyTorch Implementation of Mask-aware IoU and maYOLACT Detector [BMVC2021]

The official implementation of Mask-aware IoU and maYOLACT detector. Our implementation is based on mmdetection. Mask-aware IoU for Anchor Assignment

Implements the training, testing and editing tools for "Pluralistic Image Completion"

Pluralistic Image Completion ArXiv | Project Page | Online Demo | Video(demo) This repository implements the training, testing and editing tools for "

A Deep Learning based project for creating line art portraits.

ArtLine The main aim of the project is to create amazing line art portraits. Sounds Intresting,let's get to the pictures!! Model-(Smooth) Model-(Quali

PyTorch image models, scripts, pretrained weights -- ResNet, ResNeXT, EfficientNet, EfficientNetV2, NFNet, Vision Transformer, MixNet, MobileNet-V3/V2, RegNet, DPN, CSPNet, and more

PyTorch Image Models Sponsors What's New Introduction Models Features Results Getting Started (Documentation) Train, Validation, Inference Scripts Awe

Synthetic structured data generators

Join us on What is Synthetic Data? Synthetic data is artificially generated data that is not collected from real world events. It replicates the stati

Source code for the paper "PLOME: Pre-training with Misspelled Knowledge for Chinese Spelling Correction" in ACL2021

PLOME:Pre-training with Misspelled Knowledge for Chinese Spelling Correction (ACL2021) This repository provides the code and data of the work in ACL20

PaSST: Efficient Training of Audio Transformers with Patchout

PaSST: Efficient Training of Audio Transformers with Patchout This is the implementation for Efficient Training of Audio Transformers with Patchout Pa

Vector Quantization, in Pytorch

Vector Quantization - Pytorch A vector quantization library originally transcribed from Deepmind's tensorflow implementation, made conveniently into a

Building blocks for uncertainty-aware cycle consistency presented at NeurIPS'21.

UncertaintyAwareCycleConsistency This repository provides the building blocks and the API for the work presented in the NeurIPS'21 paper Robustness vi

Scalable training for dense retrieval models.

Scalable implementation of dense retrieval. Training on cluster By default it trains locally: PYTHONPATH=.:$PYTHONPATH python dpr_scale/main.py traine

Official PyTorch Implementation of HELP: Hardware-adaptive Efficient Latency Prediction for NAS via Meta-Learning (NeurIPS 2021 Spotlight)

[NeurIPS 2021 Spotlight] HELP: Hardware-adaptive Efficient Latency Prediction for NAS via Meta-Learning [Paper] This is Official PyTorch implementatio

NVIDIA Merlin is an open source library providing end-to-end GPU-accelerated recommender systems, from feature engineering and preprocessing to training deep learning models and running inference in production.

NVIDIA Merlin NVIDIA Merlin is an open source library designed to accelerate recommender systems on NVIDIA’s GPUs. It enables data scientists, machine

The official code for PRIMER: Pyramid-based Masked Sentence Pre-training for Multi-document Summarization

PRIMER The official code for PRIMER: Pyramid-based Masked Sentence Pre-training for Multi-document Summarization. PRIMER is a pre-trained model for mu

Official PyTorch implementation of MAAD: A Model and Dataset for Attended Awareness

MAAD: A Model for Attended Awareness in Driving Install // Datasets // Training // Experiments // Analysis // License Official PyTorch implementation

MG-GCN: Scalable Multi-GPU GCN Training Framework

MG-GCN MG-GCN: multi-GPU GCN training framework. For more information, please read our paper. After cloning our repository, run git submodule update -

This is the 3D Implementation of 《Inconsistency-aware Uncertainty Estimation for Semi-supervised Medical Image Segmentation》

CoraNet This is the 3D Implementation of 《Inconsistency-aware Uncertainty Estimation for Semi-supervised Medical Image Segmentation》 Environment pytor

Official implementation of deep-multi-trajectory-based single object tracking (IEEE T-CSVT 2021).

DeepMTA_PyTorch Officical PyTorch Implementation of "Dynamic Attention-guided Multi-TrajectoryAnalysis for Single Object Tracking", Xiao Wang, Zhe Che

Demystifying How Self-Supervised Features Improve Training from Noisy Labels

Demystifying How Self-Supervised Features Improve Training from Noisy Labels This code is a PyTorch implementation of the paper "[Demystifying How Sel

3D-aware GANs based on NeRF (arXiv).

CIPS-3D This repository will contain the code of the paper, CIPS-3D: A 3D-Aware Generator of GANs Based on Conditionally-Independent Pixel Synthesis.

![Official PyTorch Implementation of Mask-aware IoU and maYOLACT Detector [BMVC2021]](https://github.com/kemaloksuz/Mask-aware-IoU/raw/master/assets/Teaser.png)

Official PyTorch Implementation of Mask-aware IoU and maYOLACT Detector [BMVC2021]

The official implementation of Mask-aware IoU and maYOLACT detector. Our implementation is based on mmdetection. Mask-aware IoU for Anchor Assignment

Multi-Task Pre-Training for Plug-and-Play Task-Oriented Dialogue System

Multi-Task Pre-Training for Plug-and-Play Task-Oriented Dialogue System Authors: Yixuan Su, Lei Shu, Elman Mansimov, Arshit Gupta, Deng Cai, Yi-An Lai

Codes for CIKM'21 paper 'Self-Supervised Graph Co-Training for Session-based Recommendation'.

COTREC Codes for CIKM'21 paper 'Self-Supervised Graph Co-Training for Session-based Recommendation'. Requirements: Python 3.7, Pytorch 1.6.0 Best Hype

Knowledge-aware Coupled Graph Neural Network for Social Recommendation

KCGN AAAI-2021 《Knowledge-aware Coupled Graph Neural Network for Social Recommendation》 Environments python 3.8 pytorch-1.6 DGL 0.5.3 (https://github.

A tensorflow implementation of the RecoGCN model in a CIKM'19 paper, titled with "Relation-Aware Graph Convolutional Networks for Agent-Initiated Social E-Commerce Recommendation".

This repo contains a tensorflow implementation of RecoGCN and the experiment dataset Running the RecoGCN model python train.py Example training outp

Price-aware Recommendation with Graph Convolutional Networks,

PUP This is the official implementation of our ICDE'20 paper: Yu Zheng, Chen Gao, Xiangnan He, Yong Li, Depeng Jin, Price-aware Recommendation with Gr

Code for the paper "Location-aware Single Image Reflection Removal"

Location-aware Single Image Reflection Removal The shown images are provided by the datasets from IBCLN, ERRNet, SIR2 and the Internet images. The cod

PyTorch implementation of paper "StarEnhancer: Learning Real-Time and Style-Aware Image Enhancement" (ICCV 2021 Oral)

StarEnhancer StarEnhancer: Learning Real-Time and Style-Aware Image Enhancement (ICCV 2021 Oral) Abstract: Image enhancement is a subjective process w

Designing a Practical Degradation Model for Deep Blind Image Super-Resolution (ICCV, 2021) (PyTorch) - We released the training code!

Designing a Practical Degradation Model for Deep Blind Image Super-Resolution Kai Zhang, Jingyun Liang, Luc Van Gool, Radu Timofte Computer Vision Lab

Code associated with the paper "Towards Understanding the Data Dependency of Mixup-style Training".

Mixup-Data-Dependency Code associated with the paper "Towards Understanding the Data Dependency of Mixup-style Training". Running Alternating Line Exp

Anomaly detection in multi-agent trajectories: Code for training, evaluation and the OpenAI highway simulation.

Anomaly Detection in Multi-Agent Trajectories for Automated Driving This is the official project page including the paper, code, simulation, baseline

collect training and calibration data for gaze tracking

Collect Training and Calibration Data for Gaze Tracking This tool allows collecting gaze data necessary for personal calibration or training of eye-tr

Code for SALT: Stackelberg Adversarial Regularization, EMNLP 2021.

SALT: Stackelberg Adversarial Regularization Code for Adversarial Regularization as Stackelberg Game: An Unrolled Optimization Approach, EMNLP 2021. R

Multi-Task Pre-Training for Plug-and-Play Task-Oriented Dialogue System

Multi-Task Pre-Training for Plug-and-Play Task-Oriented Dialogue System Authors: Yixuan Su, Lei Shu, Elman Mansimov, Arshit Gupta, Deng Cai, Yi-An Lai

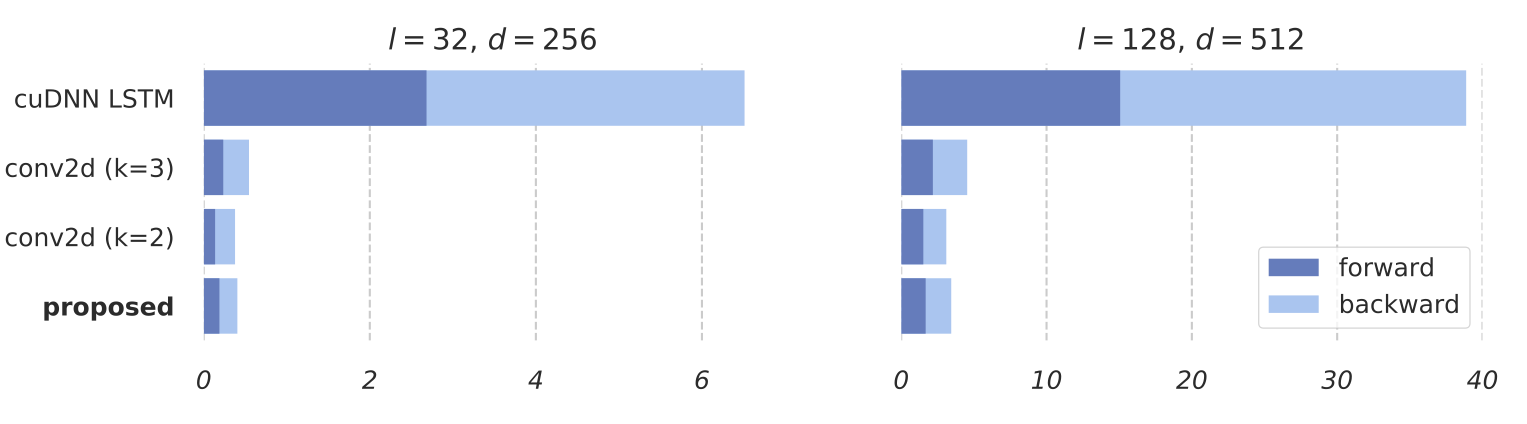

Deeply Supervised, Layer-wise Prediction-aware (DSLP) Transformer for Non-autoregressive Neural Machine Translation

Non-Autoregressive Translation with Layer-Wise Prediction and Deep Supervision Training Efficiency We show the training efficiency of our DSLP model b

Propose a principled and practically effective framework for unsupervised accuracy estimation and error detection tasks with theoretical analysis and state-of-the-art performance.

Detecting Errors and Estimating Accuracy on Unlabeled Data with Self-training Ensembles This project is for the paper: Detecting Errors and Estimating

Code for the ICME 2021 paper "Exploring Driving-Aware Salient Object Detection via Knowledge Transfer"

TSOD Code for the ICME 2021 paper "Exploring Driving-Aware Salient Object Detection via Knowledge Transfer" Usage For training, open train_test, run p

Source Code for our paper: Understand me, if you refer to Aspect Knowledge: Knowledge-aware Gated Recurrent Memory Network

KaGRMN-DSG_ABSA This repository contains the PyTorch source Code for our paper: Understand me, if you refer to Aspect Knowledge: Knowledge-aware Gated

Spatial color quantization in Rust

rscolorq Rust port of Derrick Coetzee's scolorq, based on the 1998 paper "On spatial quantization of color images" by Jan Puzicha, Markus Held, Jens K

An Agnostic Computer Vision Framework - Pluggable to any Training Library: Fastai, Pytorch-Lightning with more to come

IceVision is the first agnostic computer vision framework to offer a curated collection with hundreds of high-quality pre-trained models from torchvision, MMLabs, and soon Pytorch Image Models. It orchestrates the end-to-end deep learning workflow allowing to train networks with easy-to-use robust high-performance libraries such as Pytorch-Lightning and Fastai

Composing methods for ML training efficiency

MosaicML Composer contains a library of methods, and ways to compose them together for more efficient ML training.

This is a library for training and applying sparse fine-tunings with torch and transformers.

This is a library for training and applying sparse fine-tunings with torch and transformers. Please refer to our paper Composable Sparse Fine-Tuning f

Deeply Supervised, Layer-wise Prediction-aware (DSLP) Transformer for Non-autoregressive Neural Machine Translation

Non-Autoregressive Translation with Layer-Wise Prediction and Deep Supervision Training Efficiency We show the training efficiency of our DSLP model b

Density-aware Single Image De-raining using a Multi-stream Dense Network (CVPR 2018)

DID-MDN Density-aware Single Image De-raining using a Multi-stream Dense Network He Zhang, Vishal M. Patel [Paper Link] (CVPR'18) We present a novel d