106 Repositories

Python spark-kafka-connector Libraries

A data engineering project with Kafka, Spark Streaming, dbt, Docker, Airflow, Terraform, GCP and much more!

Streamify A data pipeline with Kafka, Spark Streaming, dbt, Docker, Airflow, Terraform, GCP and much more! Description Objective The project will stre

Free Data Engineering course!

Data Engineering Zoomcamp Register in DataTalks.Club's Slack Join the #course-data-engineering channel The videos are published to DataTalks.Club's Yo

Python library to receive live stream events like comments and gifts in realtime from TikTok LIVE.

TikTokLive A python library to connect to and read events from TikTok's LIVE service A python library to receive and decode livestream events such as

Databricks Certified Associate Spark Developer preparation toolkit to setup single node Standalone Spark Cluster along with material in the form of Jupyter Notebooks.

Databricks Certification Spark Databricks Certified Associate Spark Developer preparation toolkit to setup single node Standalone Spark Cluster along

Containerized Demo of Apache Spark MLlib on a Data Lakehouse (2022)

Spark-DeltaLake-Demo Reliable, Scalable Machine Learning (2022) This project was completed in an attempt to become better acquainted with the latest b

Esse é o meu primeiro repo tratando de fim a fim, uma pipeline de dados abertos do governo brasileiro relacionado a compras de contrato e cronogramas anuais com spark, em pyspark e SQL!

Olá! Esse é o meu primeiro repo tratando de fim a fim, uma pipeline de dados abertos do governo brasileiro relacionado a compras de contrato e cronogr

Python-kafka-reset-consumergroup-offset-example - Python Kafka reset consumergroup offset example

Python Kafka reset consumergroup offset example This is a simple example of how

🏅 Top 5% in 제2회 연구개발특구 인공지능 경진대회 AI SPARK 챌린지

AI_SPARK_CHALLENG_Object_Detection 제2회 연구개발특구 인공지능 경진대회 AI SPARK 챌린지 🏅 Top 5% in mAP(0.75) (443명 중 13등, mAP: 0.98116) 대회 설명 Edge 환경에서의 가축 Object Dete

Web3 Solidity Connector

With this project, you can compile your sol files and create new transactions including creating contract and calling the state changer functions. You can integrate integrate your sol files with Python and you can call functions with using Python.

A data structure that extends pyspark.sql.DataFrame with metadata information.

MetaFrame A data structure that extends pyspark.sql.DataFrame with metadata info

PySpark Structured Streaming ROS Kafka ApacheSpark Cassandra

PySpark-Structured-Streaming-ROS-Kafka-ApacheSpark-Cassandra The purpose of this project is to demonstrate a structured streaming pipeline with Apache

Event-driven-model-serving - Unified API of Apache Kafka and Google PubSub

event-driven-model-serving Unified API of Apache Kafka and Google PubSub 1. Proj

HA-Edge-Connector - HA Edge Connector For Python

HA-Edge-Connector 1. Required a. Smartthings Hub & Homeassistant must be in same

A simple healthcheck wrapper to monitor Kafka.

kafka-healthcheck A simple healthcheck wrapper to monitor Kafka. Kafka Healthcheck is a simple server that provides a singular API endpoint to determi

SynapseML - an open source library to simplify the creation of scalable machine learning pipelines

Synapse Machine Learning SynapseML (previously MMLSpark) is an open source library to simplify the creation of scalable machine learning pipelines. Sy

MariaDB connector using python and flask

MariaDB connector using python and flask This should work with flask and to be deployed on docker. Setting up stuff 1. Docker build and run docker bui

macOS development environment setup: Setting up a new developer machine can be an ad-hoc, manual, and time-consuming process.

dev-setup Motivation Setting up a new developer machine can be an ad-hoc, manual, and time-consuming process. dev-setup aims to simplify the process w

A Time Series Library for Apache Spark

Flint: A Time Series Library for Apache Spark The ability to analyze time series data at scale is critical for the success of finance and IoT applicat

Apache Spark & Python (pySpark) tutorials for Big Data Analysis and Machine Learning as IPython / Jupyter notebooks

Spark Python Notebooks This is a collection of IPython notebook/Jupyter notebooks intended to train the reader on different Apache Spark concepts, fro

Distributed deep learning on Hadoop and Spark clusters.

Note: we're lovingly marking this project as Archived since we're no longer supporting it. You are welcome to read the code and fork your own version

BigDL - Evaluate the performance of BigDL (Distributed Deep Learning on Apache Spark) in big data analysis problems

Evaluate the performance of BigDL (Distributed Deep Learning on Apache Spark) in big data analysis problems.

Kafka Connect JDBC Docker Image.

kafka-connect-jdbc This is a dockerized version of the Confluent JDBC database connector. Usage This image is running the connect-standalone command w

Stream-Kafka-ELK-Stack - Weather data streaming using Apache Kafka and Elastic Stack.

Streaming Data Pipeline - Kafka + ELK Stack Streaming weather data using Apache Kafka and Elastic Stack. Data source: https://openweathermap.org/api O

Spark-movie-lens - An on-line movie recommender using Spark, Python Flask, and the MovieLens dataset

A scalable on-line movie recommender using Spark and Flask This Apache Spark tutorial will guide you step-by-step into how to use the MovieLens datase

Use Jupyter Notebooks to demonstrate how to build a Recommender with Apache Spark & Elasticsearch

Recommendation engines are one of the most well known, widely used and highest value use cases for applying machine learning. Despite this, while there are many resources available for the basics of training a recommendation model, there are relatively few that explain how to actually deploy these models to create a large-scale recommender system.

X-news - Pipeline data use scrapy, kafka, spark streaming, spark ML and elasticsearch, Kibana

X-news - Pipeline data use scrapy, kafka, spark streaming, spark ML and elasticsearch, Kibana

Bigdata - This Scrapy project uses Redis and Kafka to create a distributed on demand scraping cluster

Scrapy Cluster This Scrapy project uses Redis and Kafka to create a distributed

City-seeds - A random generator of cultural characteristics intended to spark ideas and help draw threads

City Seeds This is a random generator of cultural characteristics intended to sp

This Scrapy project uses Redis and Kafka to create a distributed on demand scraping cluster

This Scrapy project uses Redis and Kafka to create a distributed on demand scraping cluster.

Example Python codes that works with MySQL and Excel files (.xlsx)

Python x MySQL x Excel by Zinglecode Example Python codes that do the processes between MySQL database and Excel spreadsheet files. YouTube videos MyS

This mini project showcase how to build and debug Apache Spark application using Python

Spark app can't be debugged using normal procedure. This mini project showcase how to build and debug Apache Spark application using Python programming language. There are also options to run Spark application on Spark container

Pandas and Spark DataFrame comparison for humans

DataComPy DataComPy is a package to compare two Pandas DataFrames. Originally started to be something of a replacement for SAS's PROC COMPARE for Pand

Fetching tweets and integrating it with Kafka and PySpark

KafkaPySpark Zookeeper bin/zookeeper-server-start.sh config/zookeeper.properties Kafka Server bin/kafka-server-start.sh config/server.properties Kafka

Make Your Company Data Driven. Connect to any data source, easily visualize, dashboard and share your data.

Redash is designed to enable anyone, regardless of the level of technical sophistication, to harness the power of data big and small. SQL users levera

Monitor the stability of a pandas or spark dataframe ⚙︎

Population Shift Monitoring popmon is a package that allows one to check the stability of a dataset. popmon works with both pandas and spark datasets.

Implementation of K-Nearest Neighbors Algorithm Using PySpark

KNN With Spark Implementation of KNN using PySpark. The KNN was used on two separate datasets (https://archive.ics.uci.edu/ml/datasets/iris and https:

A web application (with multiple API project options) that uses MariaDB HTAP!

Bookings Bookings is a web application that, backed by the power of the MariaDB Connectors and the MariaDB X4 Platform, unleashes the power of smart t

Apache (Py)Spark type annotations (stub files).

PySpark Stubs A collection of the Apache Spark stub files. These files were generated by stubgen and manually edited to include accurate type hints. T

A real-time financial data streaming pipeline and visualization platform using Apache Kafka, Cassandra, and Bokeh.

Realtime Financial Market Data Visualization and Analysis Introduction This repo shows my project about real-time stock data pipeline. All the code is

Pyspark project that able to do joins on the spark data frames.

SPARK JOINS This project is to perform inner, all outer joins and semi joins. create_df.py: load_data.py : helps to put data into Spark data frames. d

Delta Sharing: An Open Protocol for Secure Data Sharing

Delta Sharing: An Open Protocol for Secure Data Sharing Delta Sharing is an open protocol for secure real-time exchange of large datasets, which enabl

SAP HANA Connector in pure Python

SAP HANA Database Client for Python Important Notice This public repository is read-only and no longer maintained. The active maintained alternative i

🔥 Campus-Run Django Server🔥

🏫 Campus-Run Campus-Run is a 3D racing game set on a college campus. Designed this service to comfort university students who are unable to visit the

Logo DYS (Doküman Yönetim Sitemi) API Python Implementation

dys-connector Logo DYS (Dokuman Yonetim Sistemi) API Python Implementation Python Package: https://pypi.org/project/dys-connector Quick Start from dys

The Spark Challenge Student Check-In/Out Tracking Script

The Spark Challenge Student Check-In/Out Tracking Script This Python Script uses the Student ID Database to match the entries with the ID Card Swipe a

Reading streams of Twitter data, save them to Kafka, then process with Kafka Stream API and Spark Streaming

Using Streaming Twitter Data with Kafka and Spark Reading streams of Twitter data, publishing them to Kafka topic, process message using Kafka Stream

FHEM Connector for FHT Heating devices

home-assistant-fht from: https://github.com/Rsclub22 FHEM Connector for FHT Heating devices (connected via FHEM) Requires FHEM to work You can find FH

A robust, low-level connector for the Discord API

Bauxite Bauxite is a robust, low-level connector for the Discord API. What is Bauxite for? Bauxite is made for two main purposes: Creating higher-leve

Simple and Distributed Machine Learning

Synapse Machine Learning SynapseML (previously MMLSpark) is an open source library to simplify the creation of scalable machine learning pipelines. Sy

Deep Learning Pipelines for Apache Spark

Deep Learning Pipelines for Apache Spark The repo only contains HorovodRunner code for local CI and API docs. To use HorovodRunner for distributed tra

Code base of KU AIRS: SPARK Autonomous Vehicle Team

KU AIRS: SPARK Autonomous Vehicle Project Check this link for the blog post describing this project and the video of SPARK in simulation and on parkou

Building house price data pipelines with Apache Beam and Spark on GCP

This project contains the process from building a web crawler to extract the raw data of house price to create ETL pipelines using Google Could Platform services.

PyTorch Kafka Dataset: A definition of a dataset to get training data from Kafka.

PyTorch Kafka Dataset: A definition of a dataset to get training data from Kafka.

Data science Python notebooks: Deep learning (TensorFlow, Theano, Caffe, Keras), scikit-learn, Kaggle, big data (Spark, Hadoop MapReduce, HDFS), matplotlib, pandas, NumPy, SciPy, Python essentials, AWS, and various command lines.

Data science Python notebooks: Deep learning (TensorFlow, Theano, Caffe, Keras), scikit-learn, Kaggle, big data (Spark, Hadoop MapReduce, HDFS), matplotlib, pandas, NumPy, SciPy, Python essentials, AWS, and various command lines.

List of Data Science Cheatsheets to rule the world

Data Science Cheatsheets List of Data Science Cheatsheets to rule the world. Table of Contents Business Science Business Science Problem Framework Dat

Sentiment analysis on streaming twitter data using Spark Structured Streaming & Python

Sentiment analysis on streaming twitter data using Spark Structured Streaming & Python This project is a good starting point for those who have little

Microservice example with Python, Faust-Streaming and Kafka (Redpanda)

Microservices Orchestration with Python, Faust-Streaming and Kafka (Redpanda) Example project for PythonBenin meetup. It demonstrates how to use Faust

University of Missouri - Kansas City: CS451R: Capstone

CS451RC University of Missouri - Kansas City: CS451R: Capstone Installation cd git clone https://github.com/ala2q6/CS451RC.git cd CS451RC pip3 instal

Instant search for and access to many datasets in Pyspark.

SparkDataset Provides instant access to many datasets right from Pyspark (in Spark DataFrame structure). Drop a star if you like the project. 😃 Motiv

Polypheny Connector for Python

Polypheny Connector for Python This enables Python programs to access Polypheny databases, using an API that is compliant with the Python Database API

A minimal configuration for a dockerized kafka project.

Docker Kafka Quickstart A minimal configuration for a dockerized kafka project. Usage: Run this command to build kafka and zookeeper containers, and c

This OctoPrint plugin will make the initial connection to 3D Hub a breeze

3D Hub Connector This OctoPrint plugin will make the initial connection to 3D Hub a breeze. In future it will help in setting up a tunnel connection a

A collection of neat and practical data science and machine learning projects

Data Science A collection of neat and practical data science and machine learning projects Explore the docs » Report Bug · Request Feature Table of Co

DataPrep — The easiest way to prepare data in Python

DataPrep — The easiest way to prepare data in Python

Spark development environment for k8s

Local Spark Dev Env with Docker Development environment for k8s. Using the spark-operator image to ensure it will be the same environment. Start conta

TensorFlowOnSpark brings TensorFlow programs to Apache Spark clusters.

TensorFlowOnSpark TensorFlowOnSpark brings scalable deep learning to Apache Hadoop and Apache Spark clusters. By combining salient features from the T

PySpark Cheat Sheet - learn PySpark and develop apps faster

This cheat sheet will help you learn PySpark and write PySpark apps faster. Everything in here is fully functional PySpark code you can run or adapt to your programs.

Visions provides an extensible suite of tools to support common data analysis operations

Visions And these visions of data types, they kept us up past the dawn. Visions provides an extensible suite of tools to support common data analysis

RabbitMQ asynchronous connector library for Python with built in RPC support

About RabbitMQ connector library for Python that is fully integrated with the aio-pika framework. Introduction BunnyStorm is here to simplify working

Fully reproducible, Dockerized, step-by-step, tutorial on how to mock a "real-time" Kafka data stream from a timestamped csv file. Detailed blog post published on Towards Data Science.

time-series-kafka-demo Mock stream producer for time series data using Kafka. I walk through this tutorial and others here on GitHub and on my Medium

The MLOps platform for innovators 🚀

DS2.ai is an integrated AI operation solution that supports all stages from custom AI development to deployment. It is an AI-specialized platform service that collects data, builds a training dataset through data labeling, and enables automatic development of artificial intelligence and easy deployment and operation.

Store events and publish to Kafka

Create an event from Django ORM object model, store the event into the database and also publish it into Kafka cluster.

A python to scratch API connector. Can fetch data from the API and send it back in cloud variables.

Scratch2py Scratch2py or S2py is a easy to use, versatile tool to communicate with the Scratch API Based of scratchclient by Raihan142857 Installation

Demo project for real time anomaly detection using kafka and python

kafkaml-anomaly-detection Project for real time anomaly detection using kafka and python It's assumed that zookeeper and kafka are running in the loca

[DEPRECATED] Tensorflow wrapper for DataFrames on Apache Spark

TensorFrames (Deprecated) Note: TensorFrames is deprecated. You can use pandas UDF instead. Experimental TensorFlow binding for Scala and Apache Spark

Distributed scikit-learn meta-estimators in PySpark

sk-dist: Distributed scikit-learn meta-estimators in PySpark What is it? sk-dist is a Python package for machine learning built on top of scikit-learn

Distributed Tensorflow, Keras and PyTorch on Apache Spark/Flink & Ray

A unified Data Analytics and AI platform for distributed TensorFlow, Keras and PyTorch on Apache Spark/Flink & Ray What is Analytics Zoo? Analytics Zo

Microsoft Machine Learning for Apache Spark

Microsoft Machine Learning for Apache Spark MMLSpark is an ecosystem of tools aimed towards expanding the distributed computing framework Apache Spark

TensorFlowOnSpark brings TensorFlow programs to Apache Spark clusters.

TensorFlowOnSpark TensorFlowOnSpark brings scalable deep learning to Apache Hadoop and Apache Spark clusters. By combining salient features from the T

Distributed Deep learning with Keras & Spark

Elephas: Distributed Deep Learning with Keras & Spark Elephas is an extension of Keras, which allows you to run distributed deep learning models at sc

BigDL: Distributed Deep Learning Framework for Apache Spark

BigDL: Distributed Deep Learning on Apache Spark What is BigDL? BigDL is a distributed deep learning library for Apache Spark; with BigDL, users can w

Distributed training framework for TensorFlow, Keras, PyTorch, and Apache MXNet.

Horovod Horovod is a distributed deep learning training framework for TensorFlow, Keras, PyTorch, and Apache MXNet. The goal of Horovod is to make dis

Distributed training framework for TensorFlow, Keras, PyTorch, and Apache MXNet.

Horovod Horovod is a distributed deep learning training framework for TensorFlow, Keras, PyTorch, and Apache MXNet. The goal of Horovod is to make dis

Koalas: pandas API on Apache Spark

pandas API on Apache Spark Explore Koalas docs » Live notebook · Issues · Mailing list Help Thirsty Koalas Devastated by Recent Fires The Koalas proje

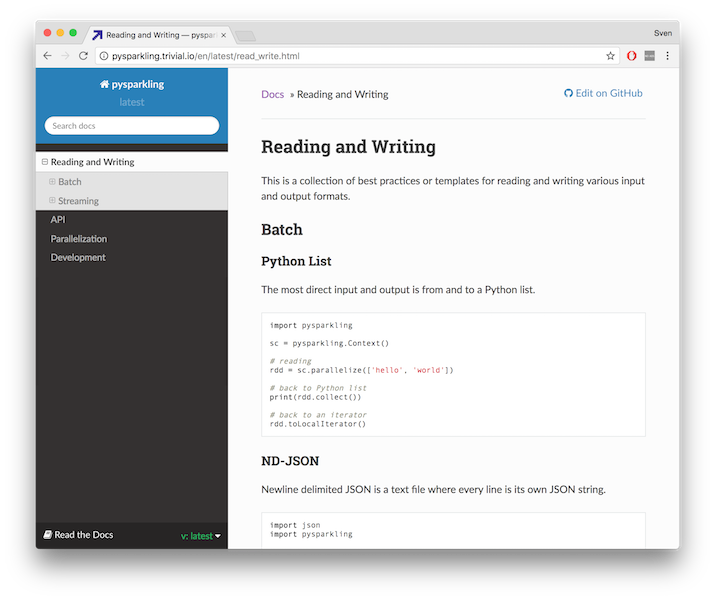

A pure Python implementation of Apache Spark's RDD and DStream interfaces.

pysparkling Pysparkling provides a faster, more responsive way to develop programs for PySpark. It enables code intended for Spark applications to exe

Distributed Deep learning with Keras & Spark

Elephas: Distributed Deep Learning with Keras & Spark Elephas is an extension of Keras, which allows you to run distributed deep learning models at sc

Scalable, Portable and Distributed Gradient Boosting (GBDT, GBRT or GBM) Library, for Python, R, Java, Scala, C++ and more. Runs on single machine, Hadoop, Spark, Dask, Flink and DataFlow

eXtreme Gradient Boosting Community | Documentation | Resources | Contributors | Release Notes XGBoost is an optimized distributed gradient boosting l

PySpark + Scikit-learn = Sparkit-learn

Sparkit-learn PySpark + Scikit-learn = Sparkit-learn GitHub: https://github.com/lensacom/sparkit-learn About Sparkit-learn aims to provide scikit-lear

Open source platform for the machine learning lifecycle

MLflow: A Machine Learning Lifecycle Platform MLflow is a platform to streamline machine learning development, including tracking experiments, packagi

State of the Art Natural Language Processing

Spark NLP: State of the Art Natural Language Processing Spark NLP is a Natural Language Processing library built on top of Apache Spark ML. It provide

State of the Art Natural Language Processing

Spark NLP: State of the Art Natural Language Processing Spark NLP is a Natural Language Processing library built on top of Apache Spark ML. It provide

Scalable, Portable and Distributed Gradient Boosting (GBDT, GBRT or GBM) Library, for Python, R, Java, Scala, C++ and more. Runs on single machine, Hadoop, Spark, Dask, Flink and DataFlow

eXtreme Gradient Boosting Community | Documentation | Resources | Contributors | Release Notes XGBoost is an optimized distributed gradient boosting l

Apache Spark - A unified analytics engine for large-scale data processing

Apache Spark Spark is a unified analytics engine for large-scale data processing. It provides high-level APIs in Scala, Java, Python, and R, and an op

SAP HANA Connector in pure Python

SAP HANA Database Client for Python A pure Python client for the SAP HANA Database based on the SAP HANA Database SQL Command Network Protocol. pyhdb

MongoDB data stream pipeline tools by YouGov (adopted from MongoDB)

mongo-connector The mongo-connector project originated as a MongoDB mongo-labs project and is now community-maintained under the custody of YouGov, Pl

Python interface to Oracle Database conforming to the Python DB API 2.0 specification.

cx_Oracle version 8.2 (Development) cx_Oracle is a Python extension module that enables access to Oracle Database. It conforms to the Python database

Confluent's Kafka Python Client

Confluent's Python Client for Apache KafkaTM confluent-kafka-python provides a high-level Producer, Consumer and AdminClient compatible with all Apach

MySQL database connector for Python (with Python 3 support)

mysqlclient This project is a fork of MySQLdb1. This project adds Python 3 support and fixed many bugs. PyPI: https://pypi.org/project/mysqlclient/ Gi

Serverless proxy for Spark cluster

Hydrosphere Mist Hydrosphere Mist is a serverless proxy for Spark cluster. Mist provides a new functional programming framework and deployment model f