848 Repositories

Python training-pipeline Libraries

Two phase pipeline + StreamlitTwo phase pipeline + Streamlit

Two phase pipeline + Streamlit This is an example project that demonstrates how to create a pipeline that consists of two phases of execution. In betw

PyTorch Kafka Dataset: A definition of a dataset to get training data from Kafka.

PyTorch Kafka Dataset: A definition of a dataset to get training data from Kafka.

Experimental code for paper: Generative Adversarial Networks as Variational Training of Energy Based Models

Experimental code for paper: Generative Adversarial Networks as Variational Training of Energy Based Models, under review at ICLR 2017 requirements: T

RP-GAN: Stable GAN Training with Random Projections

RP-GAN: Stable GAN Training with Random Projections This repository contains a reference implementation of the algorithm described in the paper: Behna

Code for training and evaluation of the model from "Language Generation with Recurrent Generative Adversarial Networks without Pre-training"

Language Generation with Recurrent Generative Adversarial Networks without Pre-training Code for training and evaluation of the model from "Language G

A stable algorithm for GAN training

DRAGAN (Deep Regret Analytic Generative Adversarial Networks) Link to our paper - https://arxiv.org/abs/1705.07215 Pytorch implementation (thanks!) -

Code for ICLR2018 paper: Improving GAN Training via Binarized Representation Entropy (BRE) Regularization - Y. Cao · W Ding · Y.C. Lui · R. Huang

code for "Improving GAN Training via Binarized Representation Entropy (BRE) Regularization" (ICLR2018 paper) paper: https://arxiv.org/abs/1805.03644 G

Training, generation, and analysis code for Learning Particle Physics by Example: Location-Aware Generative Adversarial Networks for Physics

Location-Aware Generative Adversarial Networks (LAGAN) for Physics Synthesis This repository contains all the code used in L. de Oliveira (@lukedeo),

TensorFlow implementation of "Learning from Simulated and Unsupervised Images through Adversarial Training"

Simulated+Unsupervised (S+U) Learning in TensorFlow TensorFlow implementation of Learning from Simulated and Unsupervised Images through Adversarial T

Code for the paper "Improved Techniques for Training GANs"

Status: Archive (code is provided as-is, no updates expected) improved-gan code for the paper "Improved Techniques for Training GANs" MNIST, SVHN, CIF

A data preprocessing and feature engineering script for a machine learning pipeline is prepared.

FEATURE ENGINEERING Business Problem: A data preprocessing and feature engineering script for a machine learning pipeline needs to be prepared. It is

Required for a machine learning pipeline data preprocessing and variable engineering script needs to be prepared

Feature-Engineering Required for a machine learning pipeline data preprocessing and variable engineering script needs to be prepared. When the dataset

A simple python application for running a CI pipeline locally This app currently supports GitLab CI scripts

🏃 Simple Local CI Runner 🏃 A simple python application for running a CI pipeline locally This app currently supports GitLab CI scripts ⚙️ Setup Inst

High performance distributed framework for training deep learning recommendation models based on PyTorch.

High performance distributed framework for training deep learning recommendation models based on PyTorch.

CCQA A New Web-Scale Question Answering Dataset for Model Pre-Training

CCQA: A New Web-Scale Question Answering Dataset for Model Pre-Training This is the official repository for the code and models of the paper CCQA: A N

![[NeurIPS 2021] Deceive D: Adaptive Pseudo Augmentation for GAN Training with Limited Data](https://github.com/EndlessSora/DeceiveD/raw/main/resources/teaser.jpg)

[NeurIPS 2021] Deceive D: Adaptive Pseudo Augmentation for GAN Training with Limited Data

Deceive D: Adaptive Pseudo Augmentation for GAN Training with Limited Data (NeurIPS 2021) This repository will provide the official PyTorch implementa

A deep-learning pipeline for segmentation of ambiguous microscopic images.

Welcome to Official repository of deepflash2 - a deep-learning pipeline for segmentation of ambiguous microscopic images. Quick Start in 30 seconds se

Iterative Training: Finding Binary Weight Deep Neural Networks with Layer Binarization

Iterative Training: Finding Binary Weight Deep Neural Networks with Layer Binarization This repository contains the source code for the paper (link wi

Improving the robustness and performance of biomedical NLP models through adversarial training

RobustBioNLP Improving the robustness and performance of biomedical NLP models through adversarial training In this repository you can find suppliment

TaCL: Improving BERT Pre-training with Token-aware Contrastive Learning

TaCL: Improving BERT Pre-training with Token-aware Contrastive Learning Authors: Yixuan Su, Fangyu Liu, Zaiqiao Meng, Lei Shu, Ehsan Shareghi, and Nig

AdaNet is a lightweight TensorFlow-based framework for automatically learning high-quality models with minimal expert intervention

AdaNet is a lightweight TensorFlow-based framework for automatically learning high-quality models with minimal expert intervention. AdaNet buil

🚪✊Knock Knock: Get notified when your training ends with only two additional lines of code

Knock Knock A small library to get a notification when your training is complete or when it crashes during the process with two additional lines of co

Fast, modular reference implementation and easy training of Semantic Segmentation algorithms in PyTorch.

TorchSeg This project aims at providing a fast, modular reference implementation for semantic segmentation models using PyTorch. Highlights Modular De

Training PSPNet in Tensorflow. Reproduce the performance from the paper.

Training Reproduce of PSPNet. (Updated 2021/04/09. Authors of PSPNet have provided a Pytorch implementation for PSPNet and their new work with support

This code is a toolbox that uses Torch library for training and evaluating the ERFNet architecture for semantic segmentation.

ERFNet This code is a toolbox that uses Torch library for training and evaluating the ERFNet architecture for semantic segmentation. NEW!! New PyTorch

ENet: A Deep Neural Network Architecture for Real-Time Semantic Segmentation.

ENet This work has been published in arXiv: ENet: A Deep Neural Network Architecture for Real-Time Semantic Segmentation. Packages: train contains too

Chainer Implementation of Fully Convolutional Networks. (Training code to reproduce the original result is available.)

fcn - Fully Convolutional Networks Chainer implementation of Fully Convolutional Networks. Installation pip install fcn Inference Inference is done as

Identifies the faulty wafer before it can be used for the fabrication of integrated circuits and, in photovoltaics, to manufacture solar cells.

Identifies the faulty wafer before it can be used for the fabrication of integrated circuits and, in photovoltaics, to manufacture solar cells. The project retrains itself after every prediction, making it more robust and generalized over time.

Training BERT with Compute/Time (Academic) Budget

Training BERT with Compute/Time (Academic) Budget This repository contains scripts for pre-training and finetuning BERT-like models with limited time

A Pytorch implementation of MoveNet from Google. Include training code and pre-train model.

Movenet.Pytorch Intro MoveNet is an ultra fast and accurate model that detects 17 keypoints of a body. This is A Pytorch implementation of MoveNet fro

Created covid data pipeline using PySpark and MySQL that collected data stream from API and do some processing and store it into MYSQL database.

Created covid data pipeline using PySpark and MySQL that collected data stream from API and do some processing and store it into MYSQL database.

Single machine, multiple cards training; mix-precision training; DALI data loader.

Template Script Category Description Category script comparison script train.py, loader.py for single-machine-multiple-cards training train_DP.py, tra

Bodywork deploys machine learning projects developed in Python, to Kubernetes.

Bodywork deploys machine learning projects developed in Python, to Kubernetes. It helps you to: serve models as microservices execute batch jobs run r

SAT: 2D Semantics Assisted Training for 3D Visual Grounding, ICCV 2021 (Oral)

SAT: 2D Semantics Assisted Training for 3D Visual Grounding SAT: 2D Semantics Assisted Training for 3D Visual Grounding by Zhengyuan Yang, Songyang Zh

A Python training and inference implementation of Yolov5 helmet detection in Jetson Xavier nx and Jetson nano

yolov5-helmet-detection-python A Python implementation of Yolov5 to detect head or helmet in the wild in Jetson Xavier nx and Jetson nano. In Jetson X

QAT(quantize aware training) for classification with MQBench

MQBench Quantization Aware Training with PyTorch I am using MQBench(Model Quantization Benchmark)(http://mqbench.tech/) to quantize the model for depl

MQBench Quantization Aware Training with PyTorch

MQBench Quantization Aware Training with PyTorch I am using MQBench(Model Quantization Benchmark)(http://mqbench.tech/) to quantize the model for depl

Official Code Release for "TIP-Adapter: Training-free clIP-Adapter for Better Vision-Language Modeling"

Official Code Release for "TIP-Adapter: Training-free clIP-Adapter for Better Vision-Language Modeling" Pipeline of Tip-Adapter Tip-Adapter can provid

TaCL: Improving BERT Pre-training with Token-aware Contrastive Learning

TaCL: Improving BERT Pre-training with Token-aware Contrastive Learning Authors: Yixuan Su, Fangyu Liu, Zaiqiao Meng, Lei Shu, Ehsan Shareghi, and Nig

MHtyper is an end-to-end pipeline for recognized the Forensic microhaplotypes in Nanopore sequencing data.

MHtyper is an end-to-end pipeline for recognized the Forensic microhaplotypes in Nanopore sequencing data. It is implemented using Python.

TaCL: Improve BERT Pre-training with Token-aware Contrastive Learning

TaCL: Improve BERT Pre-training with Token-aware Contrastive Learning

A pipeline that creates consensus sequences from a Nanopore reads. I

A pipeline that creates consensus sequences from a Nanopore reads. It clusters reads that are similar to each other and creates a consensus that is then identified using BLAST.

Visualize the training curve from the *.csv file (tensorboard format).

Training-Curve-Vis Visualize the training curve from the *.csv file (tensorboard format). Feature Custom labels Curve smoothing Support for multiple c

[ICCV 2021 Oral] Just Ask: Learning to Answer Questions from Millions of Narrated Videos

Just Ask: Learning to Answer Questions from Millions of Narrated Videos Webpage • Demo • Paper This repository provides the code for our paper, includ

![[ICCV' 21]](https://github.com/hansen7/OcCo/raw/master/assets/teaser.png)

[ICCV' 21] "Unsupervised Point Cloud Pre-training via Occlusion Completion"

OcCo: Unsupervised Point Cloud Pre-training via Occlusion Completion This repository is the official implementation of paper: "Unsupervised Point Clou

Dataset and Code for the paper "DepthTrack: Unveiling the Power of RGBD Tracking" (ICCV2021), and "Depth-only Object Tracking" (BMVC2021)

DeT and DOT Code and datasets for "DepthTrack: Unveiling the Power of RGBD Tracking" (ICCV2021) "Depth-only Object Tracking" (BMVC2021) @InProceedings

ICCV2021, Tokens-to-Token ViT: Training Vision Transformers from Scratch on ImageNet

Tokens-to-Token ViT: Training Vision Transformers from Scratch on ImageNet, ICCV 2021 Update: 2021/03/11: update our new results. Now our T2T-ViT-14 w

A customisable game where you have to quickly click on black tiles in order of appearance while avoiding clicking on white squares.

W.I.P-Aim-Memory-Game A customisable game where you have to quickly click on black tiles in order of appearance while avoiding clicking on white squar

Efficient Training of Audio Transformers with Patchout

PaSST: Efficient Training of Audio Transformers with Patchout This is the implementation for Efficient Training of Audio Transformers with Patchout Pa

This is the implementation of the paper LiST: Lite Self-training Makes Efficient Few-shot Learners.

LiST (Lite Self-Training) This is the implementation of the paper LiST: Lite Self-training Makes Efficient Few-shot Learners. LiST is short for Lite S

Inference pipeline for our participation in the FeTA challenge 2021.

feta-inference Inference pipeline for our participation in the FeTA challenge 2021. Team name: TRABIT Installation Download the two folders in https:/

This tool parses log data and allows to define analysis pipelines for anomaly detection.

logdata-anomaly-miner This tool parses log data and allows to define analysis pipelines for anomaly detection. It was designed to run the analysis wit

A Simple Framwork for CV Pre-training Model (SOCO, VirTex, BEiT)

A Simple Framwork for CV Pre-training Model (SOCO, VirTex, BEiT)

AQP is a modular pipeline built to enable the comparison and testing of different quality metric configurations.

Audio Quality Platform - AQP An Open Modular Python Platform for Objective Speech and Audio Quality Metrics AQP is a highly modular pipeline designed

Efficient Sharpness-aware Minimization for Improved Training of Neural Networks

Efficient Sharpness-aware Minimization for Improved Training of Neural Networks Code for “Efficient Sharpness-aware Minimization for Improved Training

Code for EMNLP2021 paper "Allocating Large Vocabulary Capacity for Cross-lingual Language Model Pre-training"

VoCapXLM Code for EMNLP2021 paper Allocating Large Vocabulary Capacity for Cross-lingual Language Model Pre-training Environment DockerFile: dancingso

A simple pytorch pipeline for semantic segmentation.

SegmentationPipeline -- Pytorch A simple pytorch pipeline for semantic segmentation. Requirements : torch=1.9.0 tqdm albumentations=1.0.3 opencv-pyt

The spiritual successor to knockknock for PyTorch Lightning, get notified when your training ends

Who's there? The spiritual successor to knockknock for PyTorch Lightning, to get a notification when your training is complete or when it crashes duri

Pipeline and Dataset helpers for complex algorithm evaluation.

tpcp - Tiny Pipelines for Complex Problems A generic way to build object-oriented datasets and algorithm pipelines and tools to evaluate them pip inst

Revealing and Protecting Labels in Distributed Training

Revealing and Protecting Labels in Distributed Training

training script for space time memory network

Trainig Script for Space Time Memory Network This codebase implemented training code for Space Time Memory Network with some cyclic features. Requirem

Training Certifiably Robust Neural Networks with Efficient Local Lipschitz Bounds (Local-Lip)

Training Certifiably Robust Neural Networks with Efficient Local Lipschitz Bounds (Local-Lip) Introduction TL;DR: We propose an efficient and trainabl

This codebase facilitates fast experimentation of differentially private training of Hugging Face transformers.

private-transformers This codebase facilitates fast experimentation of differentially private training of Hugging Face transformers. What is this? Why

SNV calling pipeline developed explicitly to process individual or trio vcf files obtained from Illumina based pipeline (grch37/grch38).

SNV Pipeline SNV calling pipeline developed explicitly to process individual or trio vcf files obtained from Illumina based pipeline (grch37/grch38).

In this project, ETL pipeline is build on data warehouse hosted on AWS Redshift.

ETL Pipeline for AWS Project Description In this project, ETL pipeline is build on data warehouse hosted on AWS Redshift. The data is loaded from S3 t

MLOps will help you to understand how to build a Continuous Integration and Continuous Delivery pipeline for an ML/AI project.

page_type languages products description sample python azure azure-machine-learning-service azure-devops Code which demonstrates how to set up and ope

fMRIprep Pipeline To Machine Learning

fMRIprep Pipeline To Machine Learning(Demo) 所有配置均在config.py文件下定义 前置环境(lilab) 各个节点均安装docker,并有fmripre的镜像 可以使用conda中的base环境(相应的第三份包之后更新) 1. fmriprep scr

Enhancing Knowledge Tracing via Adversarial Training

Enhancing Knowledge Tracing via Adversarial Training This repository contains source code for the paper "Enhancing Knowledge Tracing via Adversarial T

SEC'21: Sparse Bitmap Compression for Memory-Efficient Training onthe Edge

Training Deep Learning Models on The Edge Training on the Edge enables continuous learning from new data for deployed neural networks on memory-constr

Implementation of average- and worst-case robust flatness measures for adversarial training.

Relating Adversarially Robust Generalization to Flat Minima This repository contains code corresponding to the MLSys'21 paper: D. Stutz, M. Hein, B. S

A complete end-to-end machine learning portal that covers processes starting from model training to the model predicting results using FastAPI.

Machine Learning Portal Goal Application Workflow Process Design Live Project Goal A complete end-to-end machine learning portal that covers processes

PdpCLI is a pandas DataFrame processing CLI tool which enables you to build a pandas pipeline from a configuration file.

PdpCLI Quick Links Introduction Installation Tutorial Basic Usage Data Reader / Writer Plugins Introduction PdpCLI is a pandas DataFrame processing CL

Chinese NER(Named Entity Recognition) using BERT(Softmax, CRF, Span)

Chinese NER(Named Entity Recognition) using BERT(Softmax, CRF, Span)

Open source single image super-resolution toolbox containing various functionality for training a diverse number of state-of-the-art super-resolution models. Also acts as the companion code for the IEEE signal processing letters paper titled 'Improving Super-Resolution Performance using Meta-Attention Layers’.

Deep-FIR Codebase - Super Resolution Meta Attention Networks About This repository contains the main coding framework accompanying our work on meta-at

Implementation for On Provable Benefits of Depth in Training Graph Convolutional Networks

Implementation for On Provable Benefits of Depth in Training Graph Convolutional Networks Setup This implementation is based on PyTorch = 1.0.0. Smal

Colossal-AI: A Unified Deep Learning System for Large-Scale Parallel Training

ColossalAI An integrated large-scale model training system with efficient parallelization techniques. arXiv: Colossal-AI: A Unified Deep Learning Syst

DWIPrep is a robust and easy-to-use pipeline for preprocessing of diverse dMRI data.

DWIPrep: A Robust Preprocessing Pipeline for dMRI Data DWIPrep is a robust and easy-to-use pipeline for preprocessing of diverse dMRI data. The transp

Data, model training, and evaluation code for "PubTables-1M: Towards a universal dataset and metrics for training and evaluating table extraction models".

PubTables-1M This repository contains training and evaluation code for the paper "PubTables-1M: Towards a universal dataset and metrics for training a

Predicting lncRNA–protein interactions based on graph autoencoders and collaborative training

Predicting lncRNA–protein interactions based on graph autoencoders and collaborative training Code for our paper "Predicting lncRNA–protein interactio

Colossal-AI: A Unified Deep Learning System for Large-Scale Parallel Training

ColossalAI An integrated large-scale model training system with efficient parallelization techniques Installation PyPI pip install colossalai Install

AugMax: Adversarial Composition of Random Augmentations for Robust Training

[NeurIPS'21] "AugMax: Adversarial Composition of Random Augmentations for Robust Training" by Haotao Wang, Chaowei Xiao, Jean Kossaifi, Zhiding Yu, Animashree Anandkumar, and Zhangyang Wang.

Exponential Graph is Provably Efficient for Decentralized Deep Training

Exponential Graph is Provably Efficient for Decentralized Deep Training This code repository is for the paper Exponential Graph is Provably Efficient

![[NeurIPS'21]](https://github.com/VITA-Group/AugMax/raw/main/images/AugMax.PNG)

[NeurIPS'21] "AugMax: Adversarial Composition of Random Augmentations for Robust Training" by Haotao Wang, Chaowei Xiao, Jean Kossaifi, Zhiding Yu, Animashree Anandkumar, and Zhangyang Wang.

AugMax: Adversarial Composition of Random Augmentations for Robust Training Haotao Wang, Chaowei Xiao, Jean Kossaifi, Zhiding Yu, Anima Anandkumar, an

In this project I played with mlflow, streamlit and fastapi to create a training and prediction app on digits

Fastapi + MLflow + streamlit Setup env. I hope I covered all. pip install -r requirements.txt Start app Go in the root dir and run these Streamlit str

Asterisk is a framework to generate high-quality training datasets at scale

Asterisk is a framework to generate high-quality training datasets at scale

TrackTech: Real-time tracking of subjects and objects on multiple cameras

TrackTech: Real-time tracking of subjects and objects on multiple cameras This project is part of the 2021 spring bachelor final project of the Bachel

![[NeurIPS 2021] Better Safe Than Sorry: Preventing Delusive Adversaries with Adversarial Training](https://github.com/TLMichael/Delusive-Adversary/raw/main/fig/Thumbnail.png)

[NeurIPS 2021] Better Safe Than Sorry: Preventing Delusive Adversaries with Adversarial Training

Better Safe Than Sorry: Preventing Delusive Adversaries with Adversarial Training Code for NeurIPS 2021 paper "Better Safe Than Sorry: Preventing Delu

code for generating data set ES-ImageNet with corresponding training code

es-imagenet-master code for generating data set ES-ImageNet with corresponding training code dataset generator some codes of ODG algorithm The variabl

A Context-aware Visual Attention-based training pipeline for Object Detection from a Webpage screenshot!

CoVA: Context-aware Visual Attention for Webpage Information Extraction Abstract Webpage information extraction (WIE) is an important step to create k

Code for the prototype tool in our paper "CoProtector: Protect Open-Source Code against Unauthorized Training Usage with Data Poisoning".

CoProtector Code for the prototype tool in our paper "CoProtector: Protect Open-Source Code against Unauthorized Training Usage with Data Poisoning".

A novel pipeline framework for multi-hop complex KGQA task. About the paper title: Improving Multi-hop Embedded Knowledge Graph Question Answering by Introducing Relational Chain Reasoning

Rce-KGQA A novel pipeline framework for multi-hop complex KGQA task. This framework mainly contains two modules, answering_filtering_module and relati

Code for the prototype tool in our paper "CoProtector: Protect Open-Source Code against Unauthorized Training Usage with Data Poisoning".

CoProtector Code for the prototype tool in our paper "CoProtector: Protect Open-Source Code against Unauthorized Training Usage with Data Poisoning".

This is the official source code for SLATE. We provide the code for the model, the training code, and a dataset loader for the 3D Shapes dataset. This code is implemented in Pytorch.

SLATE This is the official source code for SLATE. We provide the code for the model, the training code and a dataset loader for the 3D Shapes dataset.

PyTorch Implementation of ByteDance's Cross-speaker Emotion Transfer Based on Speaker Condition Layer Normalization and Semi-Supervised Training in Text-To-Speech

Cross-Speaker-Emotion-Transfer - PyTorch Implementation PyTorch Implementation of ByteDance's Cross-speaker Emotion Transfer Based on Speaker Conditio

PyTorch Code for NeurIPS 2021 paper Anti-Backdoor Learning: Training Clean Models on Poisoned Data.

Anti-Backdoor Learning PyTorch Code for NeurIPS 2021 paper Anti-Backdoor Learning: Training Clean Models on Poisoned Data. Check the unlearning effect

Python ML pipeline that showcases mltrace functionality.

mltrace tutorial Date: October 2021 This tutorial builds a training and testing pipeline for a toy ML prediction problem: to predict whether a passeng

Efficient Training of Visual Transformers with Small Datasets

Official codes for "Efficient Training of Visual Transformers with Small Datasets", NerIPS 2021.

PyTorch framework for Deep Learning research and development.

Accelerated DL & RL PyTorch framework for Deep Learning research and development. It was developed with a focus on reproducibility, fast experimentati

Training Very Deep Neural Networks Without Skip-Connections

DiracNets v2 update (January 2018): The code was updated for DiracNets-v2 in which we removed NCReLU by adding per-channel a and b multipliers without

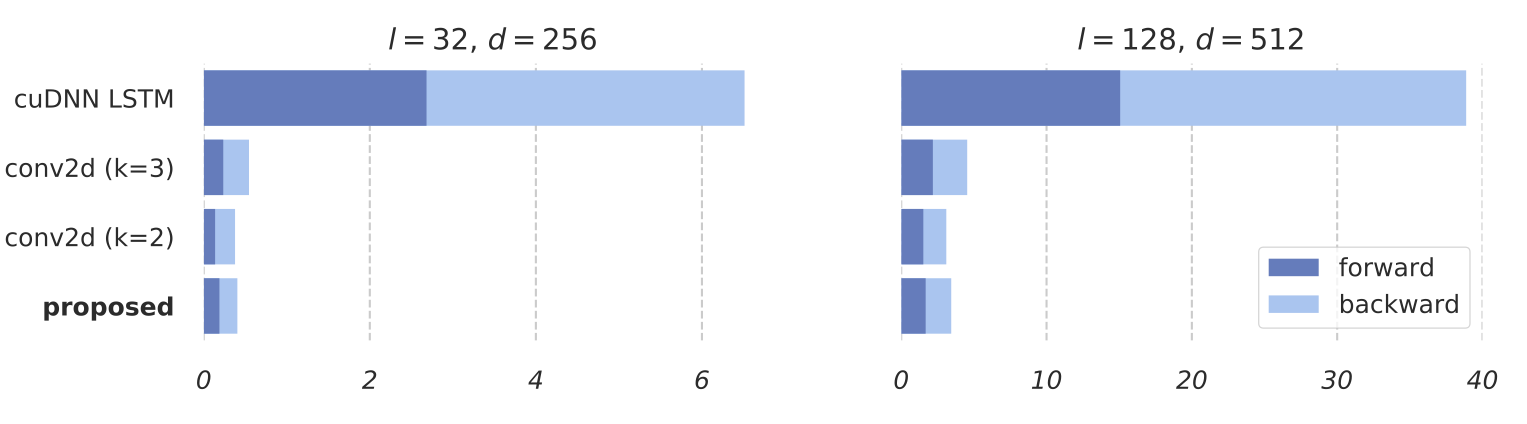

Training RNNs as Fast as CNNs

News SRU++, a new SRU variant, is released. [tech report] [blog] The experimental code and SRU++ implementation are available on the dev branch which