798 Repositories

Python memory-training Libraries

TiP-Adapter: Training-free CLIP-Adapter for Better Vision-Language Modeling

TiP-Adapter: Training-free CLIP-Adapter for Better Vision-Language Modeling This is the official code release for the paper 'TiP-Adapter: Training-fre

A PyTorch implementation of VIOLET

VIOLET: End-to-End Video-Language Transformers with Masked Visual-token Modeling A PyTorch implementation of VIOLET Overview VIOLET is an implementati

Visual Python and C++ nanosecond profiler, logger, tests enabler

Look into Palanteer and get an omniscient view of your program Palanteer is a set of lean and efficient tools to improve the quality of software, for

A complete, self-contained example for training ImageNet at state-of-the-art speed with FFCV

ffcv ImageNet Training A minimal, single-file PyTorch ImageNet training script designed for hackability. Run train_imagenet.py to get... ...high accur

"Moshpit SGD: Communication-Efficient Decentralized Training on Heterogeneous Unreliable Devices", official implementation

Moshpit SGD: Communication-Efficient Decentralized Training on Heterogeneous Unreliable Devices This repository contains the official PyTorch implemen

Holographic Declarative Memory for Python ACT-R

HDM This is the repository for the Holographic Declarative Memory (HDM) module for Python ACT-R. This repository contains: documentation: a paper, con

Implement of "Training deep neural networks via direct loss minimization" in PyTorch for 0-1 loss

This is the implementation of "Training deep neural networks via direct loss minimization" published at ICML 2016 in PyTorch. The implementation targe

Pianote - An application that helps musicians practice piano ear training

Pianote Pianote is an application that helps musicians practice piano ear traini

Implementation of Memory-Compressed Attention, from the paper "Generating Wikipedia By Summarizing Long Sequences"

Memory Compressed Attention Implementation of the Self-Attention layer of the proposed Memory-Compressed Attention, in Pytorch. This repository offers

The accompanying code for the paper "GMAT: Global Memory Augmentation for Transformers" (Ankit Gupta and Jonathan Berant).

GMAT: Global Memory Augmentation for Transformers This repository contains the accompanying code for the paper: "GMAT: Global Memory Augmentation for

Implementation of Memformer, a Memory-augmented Transformer, in Pytorch

Memformer - Pytorch Implementation of Memformer, a Memory-augmented Transformer, in Pytorch. It includes memory slots, which are updated with attentio

🔎 Monitor deep learning model training and hardware usage from your mobile phone 📱

Monitor deep learning model training and hardware usage from mobile. 🔥 Features Monitor running experiments from mobile phone (or laptop) Monitor har

Code for Reciprocal Adversarial Learning for Brain Tumor Segmentation: A Solution to BraTS Challenge 2021 Segmentation Task

BRATS 2021 Solution For Segmentation Task This repo contains the supported pytorch code and configuration files to reproduce 3D medical image segmenta

This repo generates the training data and the model for Morpheus-Deblend

Morpheus-Deblend This repo generates the training data and the model for Morpheus-Deblend. This is the active development repo for the project and as

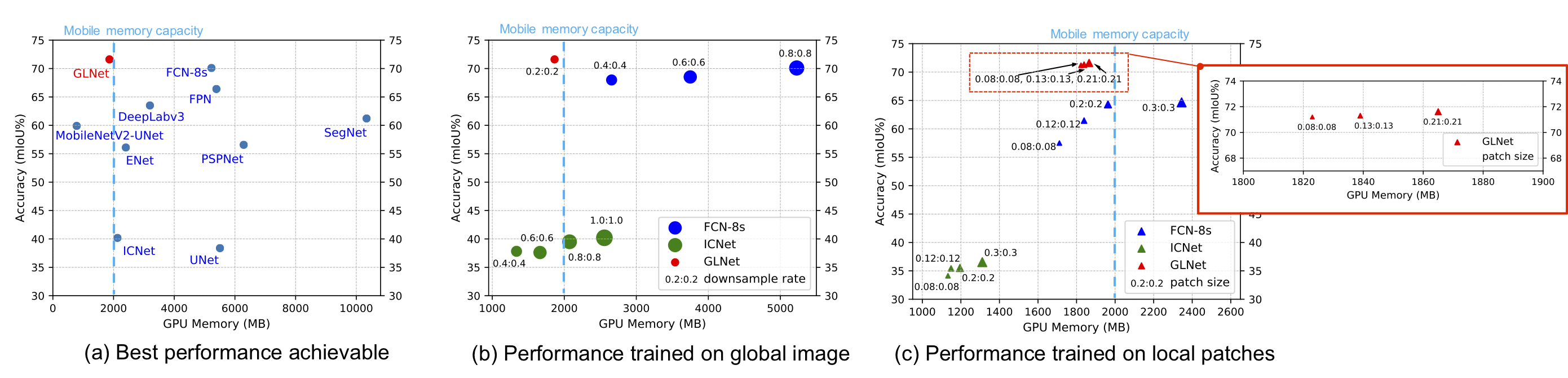

GLNet for Memory-Efficient Segmentation of Ultra-High Resolution Images

GLNet for Memory-Efficient Segmentation of Ultra-High Resolution Images Collaborative Global-Local Networks for Memory-Efficient Segmentation of Ultra-

The code for our paper Semi-Supervised Learning with Multi-Head Co-Training

Semi-Supervised Learning with Multi-Head Co-Training (PyTorch) Abstract Co-training, extended from self-training, is one of the frameworks for semi-su

Explores the python bytecode, provides some tools to access it for fun and profit.

Pyasmtools - looking at the python bytecode for fun and profit. The pyasmtools library is made up of two parts A python bytecode disassembler . See Py

A Python module for the generation and training of an entry-level feedforward neural network.

ff-neural-network A Python module for the generation and training of an entry-level feedforward neural network. This repository serves as a repurposin

Semi-Supervised Semantic Segmentation with Pixel-Level Contrastive Learning from a Class-wise Memory Bank

This repository provides the official code for replicating experiments from the paper: Semi-Supervised Semantic Segmentation with Pixel-Level Contrast

Training a Resilient Q-Network against Observational Interference, Causal Inference Q-Networks

Obs-Causal-Q-Network AAAI 2022 - Training a Resilient Q-Network against Observational Interference Preprint | Slides | Colab Demo | Environment Setup

Intel® Neural Compressor is an open-source Python library running on Intel CPUs and GPUs

Intel® Neural Compressor targeting to provide unified APIs for network compression technologies, such as low precision quantization, sparsity, pruning, knowledge distillation, across different deep learning frameworks to pursue optimal inference performance.

Soft actor-critic is a deep reinforcement learning framework for training maximum entropy policies in continuous domains.

This repository is no longer maintained. Please use our new Softlearning package instead. Soft Actor-Critic Soft actor-critic is a deep reinforcement

Supervised 3D Pre-training on Large-scale 2D Natural Image Datasets for 3D Medical Image Analysis

Introduction This is an implementation of our paper Supervised 3D Pre-training on Large-scale 2D Natural Image Datasets for 3D Medical Image Analysis.

for a paper about leveraging discourse markers for training new models

TSLM-DISCOURSE-MARKERS Scope This repository contains: (1) Code to extract discourse markers from wikipedia (TSA). (1) Code to extract significant dis

A wrapper around ffmpeg to make it work in a concurrent and memory-buffered fashion.

Media Fixer Have you ever had a film or TV show that your TV wasn't able to play its audio? Well this program is for you. Media Fixer is a program whi

Deep learning with TensorFlow and earth observation data.

Deep Learning with TensorFlow and EO Data Complete file set for Jupyter Book Autor: Development Seed Date: 04 October 2021 ISBN: (to come) Notebook tu

Official Implementation for Fast Training of Neural Lumigraph Representations using Meta Learning.

Fast Training of Neural Lumigraph Representations using Meta Learning Project Page | Paper | Data Alexander W. Bergman, Petr Kellnhofer, Gordon Wetzst

Teaches a student network from the knowledge obtained via training of a larger teacher network

Distilling-the-knowledge-in-neural-network Teaches a student network from the knowledge obtained via training of a larger teacher network This is an i

Sign Language Recognition service utilizing a deep learning model with Long Short-Term Memory to perform sign language recognition.

Sign Language Recognition Service This is a Sign Language Recognition service utilizing a deep learning model with Long Short-Term Memory to perform s

Import Python modules from dicts and JSON formatted documents.

Paker Paker is module for importing Python packages/modules from dictionaries and JSON formatted documents. It was inspired by httpimporter. Important

Module for remote in-memory Python package/module loading through HTTP/S

httpimport Python's missing feature! The feature has been suggested in Python Mailing List Remote, in-memory Python package/module importing through H

This is the offline-training-pipeline for our project.

offline-training-pipeline This is the offline-training-pipeline for our project. We adopt the offline training and online prediction Machine Learning

Markdown Presentations for Tech Conferences, Training, Developer Advocates, and Educators.

March 1, 2021: Service on gitpitch.com has been shutdown permanently. GitPitch 4.0 Docs Twitter About Watch the Introducing GitPitch 4.0 Video Visit t

Pi-NAS: Improving Neural Architecture Search by Reducing Supernet Training Consistency Shift (ICCV 2021)

Π-NAS This repository provides the evaluation code of our submitted paper: Pi-NAS: Improving Neural Architecture Search by Reducing Supernet Training

Learnable Boundary Guided Adversarial Training (ICCV2021)

Learnable Boundary Guided Adversarial Training This repository contains the implementation code for the ICCV2021 paper: Learnable Boundary Guided Adve

Seasonal Contrast: Unsupervised Pre-Training from Uncurated Remote Sensing Data

Seasonal Contrast: Unsupervised Pre-Training from Uncurated Remote Sensing Data This is the official PyTorch implementation of the SeCo paper: @articl

Scalene: a high-performance, high-precision CPU, GPU, and memory profiler for Python

Scalene: a high-performance CPU, GPU and memory profiler for Python by Emery Berger, Sam Stern, and Juan Altmayer Pizzorno. Scalene community Slack Ab

Chinese version of GPT2 training code, using BERT tokenizer.

GPT2-Chinese Description Chinese version of GPT2 training code, using BERT tokenizer or BPE tokenizer. It is based on the extremely awesome repository

TensorFlow implementation of AlexNet and its training and testing on ImageNet ILSVRC 2012 dataset

AlexNet training on ImageNet LSVRC 2012 This repository contains an implementation of AlexNet convolutional neural network and its training and testin

Amazon SageMaker Delta Sharing Examples

This repository contains examples and related resources showing you how to preprocess, train, and serve your models using Amazon SageMaker with data fetched from Delta Lake.

The full training script for Enformer (Tensorflow Sonnet) on TPU clusters

Enformer TPU training script (wip) The full training script for Enformer (Tensorflow Sonnet) on TPU clusters, in an effort to migrate the model to pyt

Training DALL-E with volunteers from all over the Internet using hivemind and dalle-pytorch (NeurIPS 2021 demo)

Training DALL-E with volunteers from all over the Internet This repository is a part of the NeurIPS 2021 demonstration "Training Transformers Together

Crowd sourced training data for Rasa NLU models

NLU Training Data Crowd-sourced training data for the development and testing of Rasa NLU models. If you're interested in grabbing some data feel free

Implementations of LSTM: A Search Space Odyssey variants and their training results on the PTB dataset.

An LSTM Odyssey Code for training variants of "LSTM: A Search Space Odyssey" on Fomoro. Check out the blog post. Training Install TensorFlow. Clone th

Contrastive unpaired image-to-image translation, faster and lighter training than cyclegan (ECCV 2020, in PyTorch)

Contrastive Unpaired Translation (CUT) video (1m) | video (10m) | website | paper We provide our PyTorch implementation of unpaired image-to-image tra

Official PyTorch implementation of the paper: DeepSIM: Image Shape Manipulation from a Single Augmented Training Sample

DeepSIM: Image Shape Manipulation from a Single Augmented Training Sample (ICCV 2021 Oral) Project | Paper Official PyTorch implementation of the pape

Meshed-Memory Transformer for Image Captioning. CVPR 2020

M²: Meshed-Memory Transformer This repository contains the reference code for the paper Meshed-Memory Transformer for Image Captioning (CVPR 2020). Pl

PyTorch code for MART: Memory-Augmented Recurrent Transformer for Coherent Video Paragraph Captioning

MART: Memory-Augmented Recurrent Transformer for Coherent Video Paragraph Captioning PyTorch code for our ACL 2020 paper "MART: Memory-Augmented Recur

Augmented CLIP - Training simple models to predict CLIP image embeddings from text embeddings, and vice versa.

Train aug_clip against laion400m-embeddings found here: https://laion.ai/laion-400-open-dataset/ - note that this used the base ViT-B/32 CLIP model. S

TrainingBike - Code, models and schematics I've used to interface my stationary training bike with PC.

TrainingBike Code, models and schematics I've used to interface my stationary training bike with PC. You can find more information about the project i

BasicNeuralNetwork - This project looks over the basic structure of a neural network and how machine learning training algorithms work

BasicNeuralNetwork - This project looks over the basic structure of a neural network and how machine learning training algorithms work. For this project, I used the sigmoid function as an activation function along with stochastic gradient descent to adjust the weights and biases.

U-2-Net: U Square Net - Modified for paired image training of style transfer

U2-Net: U Square Net Modified for paired image training of style transfer This is an unofficial repo making use of the code which was made available b

Predictive Maintenance LSTM

Predictive-Maintenance-LSTM - Predictive maintenance study for Complex case study, we've obtained failure causes by operational error and more deeply by design mistakes.

StyleGAN2-ADA-training-jupyter - Training custom datasets in styleGAN2-ADA by NVIDIA using Jupyter

styleGAN2-ADA-training-jupyter Training custom datasets in styleGAN2-ADA on Jupyter Official StyleGAN2-ADA by NIVIDIA Paper Training Generative Advers

CIFAR-10_train-test - training and testing codes for dataset CIFAR-10

CIFAR-10_train-test - training and testing codes for dataset CIFAR-10

Training Cifar-10 Classifier Using VGG16

opevcvdl-hw3 This project uses pytorch and Qt to achieve the requirements. Version Python 3.6 opencv-contrib-python 3.4.2.17 Matplotlib 3.1.1 pyqt5 5.

Python function to construct an ODS spreadsheet on the fly - without having to store the entire file in memory or disk

stream-write-ods Python function to construct an ODS (OpenDocument Spreadsheet) on the fly - without having to store the entire file in memory or disk

SOTA easy to use PyTorch-based DL training library

Easily train or fine-tune SOTA computer vision models from one training repository. SuperGradients Introduction Welcome to SuperGradients, a free open

“Robust Lightweight Facial Expression Recognition Network with Label Distribution Training”, AAAI 2021.

EfficientFace Zengqun Zhao, Qingshan Liu, Feng Zhou. "Robust Lightweight Facial Expression Recognition Network with Label Distribution Training". AAAI

PyTorch Lightning + Hydra. A feature-rich template for rapid, scalable and reproducible ML experimentation with best practices. ⚡🔥⚡

Lightning-Hydra-Template A clean and scalable template to kickstart your deep learning project 🚀 ⚡ 🔥 Click on Use this template to initialize new re

Memory efficient transducer loss computation

Introduction This project implements the optimization techniques proposed in Improving RNN Transducer Modeling for End-to-End Speech Recognition to re

Pytorch implementation of paper Semi-supervised Knowledge Transfer for Deep Learning from Private Training Data

Pytorch implementation of paper Semi-supervised Knowledge Transfer for Deep Learning from Private Training Data

A simple consistency training framework for semi-supervised image semantic segmentation

PseudoSeg: Designing Pseudo Labels for Semantic Segmentation PseudoSeg is a simple consistency training framework for semi-supervised image semantic s

Semi-Supervised Semantic Segmentation with Cross-Consistency Training (CCT)

Semi-Supervised Semantic Segmentation with Cross-Consistency Training (CCT) Paper, Project Page This repo contains the official implementation of CVPR

Reduce end to end training time from days to hours (or hours to minutes), and energy requirements/costs by an order of magnitude using coresets and data selection.

COResets and Data Subset selection Reduce end to end training time from days to hours (or hours to minutes), and energy requirements/costs by an order

Implementation of ICLR 2020 paper "Revisiting Self-Training for Neural Sequence Generation"

Self-Training for Neural Sequence Generation This repo includes instructions for running noisy self-training algorithms from the following paper: Revi

implementation of the paper "MarginGAN: Adversarial Training in Semi-Supervised Learning"

MarginGAN This repository is the implementation of the paper "MarginGAN: Adversarial Training in Semi-Supervised Learning". 1."preliminary" is the imp

Training neural models with structured signals.

Neural Structured Learning in TensorFlow Neural Structured Learning (NSL) is a new learning paradigm to train neural networks by leveraging structured

PyTorch implementation for Graph Contrastive Learning with Augmentations

Graph Contrastive Learning with Augmentations PyTorch implementation for Graph Contrastive Learning with Augmentations [poster] [appendix] Yuning You*

CCCL: Contrastive Cascade Graph Learning.

CCGL: Contrastive Cascade Graph Learning This repo provides a reference implementation of Contrastive Cascade Graph Learning (CCGL) framework as descr

Memory-efficient optimum einsum using opt_einsum planning and PyTorch kernels.

opt-einsum-torch There have been many implementations of Einstein's summation. numpy's numpy.einsum is the least efficient one as it only runs in sing

In this project, two programs can help you take full agvantage of time on the model training with a remote server

In this project, two programs can help you take full agvantage of time on the model training with a remote server, which can push notification to your phone about the information during model training, like the model indices and unexpected interrupts. Then you can do something in time for your work.

GCC: Graph Contrastive Coding for Graph Neural Network Pre-Training @ KDD 2020

GCC: Graph Contrastive Coding for Graph Neural Network Pre-Training Original implementation for paper GCC: Graph Contrastive Coding for Graph Neural N

Pytorch implementation of the paper "COAD: Contrastive Pre-training with Adversarial Fine-tuning for Zero-shot Expert Linking."

Expert-Linking Pytorch implementation of the paper "COAD: Contrastive Pre-training with Adversarial Fine-tuning for Zero-shot Expert Linking." This is

Python function to construct a ZIP archive with on the fly - without having to store the entire ZIP in memory or disk

Python function to construct a ZIP archive with on the fly - without having to store the entire ZIP in memory or disk

Reproduce results and replicate training fo T0 (Multitask Prompted Training Enables Zero-Shot Task Generalization)

T-Zero This repository serves primarily as codebase and instructions for training, evaluation and inference of T0. T0 is the model developed in Multit

Align and Prompt: Video-and-Language Pre-training with Entity Prompts

ALPRO Align and Prompt: Video-and-Language Pre-training with Entity Prompts [Paper] Dongxu Li, Junnan Li, Hongdong Li, Juan Carlos Niebles, Steven C.H

Pre-Training Graph Neural Networks for Cold-Start Users and Items Representation.

Pretrain-Recsys This is our Tensorflow implementation for our WSDM 2021 paper: Bowen Hao, Jing Zhang, Hongzhi Yin, Cuiping Li, Hong Chen. Pre-Training

Pre-training of Graph Augmented Transformers for Medication Recommendation

G-Bert Pre-training of Graph Augmented Transformers for Medication Recommendation Intro G-Bert combined the power of Graph Neural Networks and BERT (B

Code for KDD'20 "Generative Pre-Training of Graph Neural Networks"

GPT-GNN: Generative Pre-Training of Graph Neural Networks GPT-GNN is a pre-training framework to initialize GNNs by generative pre-training. It can be

code for "Self-supervised edge features for improved Graph Neural Network training", arxivlink

Self-supervised edge features for improved Graph Neural Network training Data availability: Here is a link to the raw data for the organoids dataset.

![[ICML 2020] DrRepair: Learning to Repair Programs from Error Messages](https://github.com/michiyasunaga/DrRepair/raw/master/DrRepair-overview.png)

[ICML 2020] DrRepair: Learning to Repair Programs from Error Messages

DrRepair: Learning to Repair Programs from Error Messages This repo provides the source code & data of our paper: Graph-based, Self-Supervised Program

Autoregressive Predictive Coding: An unsupervised autoregressive model for speech representation learning

Autoregressive Predictive Coding This repository contains the official implementation (in PyTorch) of Autoregressive Predictive Coding (APC) proposed

Code and training data for our ECCV 2016 paper on Unsupervised Learning

Shuffle and Learn (Shuffle Tuple) Created by Ishan Misra Based on the ECCV 2016 Paper - "Shuffle and Learn: Unsupervised Learning using Temporal Order

![[NeurIPS'20] Self-supervised Co-Training for Video Representation Learning. Tengda Han, Weidi Xie, Andrew Zisserman.](https://github.com/TengdaHan/CoCLR/raw/main/asset/teaser.png)

[NeurIPS'20] Self-supervised Co-Training for Video Representation Learning. Tengda Han, Weidi Xie, Andrew Zisserman.

CoCLR: Self-supervised Co-Training for Video Representation Learning This repository contains the implementation of: InfoNCE (MoCo on videos) UberNCE

PyTorch code for training MM-DistillNet for multimodal knowledge distillation

There is More than Meets the Eye: Self-Supervised Multi-Object Detection and Tracking with Sound by Distilling Multimodal Knowledge MM-DistillNet is a

AdaFocus V2: End-to-End Training of Spatial Dynamic Networks for Video Recognition

AdaFocusV2 This repo contains the official code and pre-trained models for AdaFo

Deep Learning Training Scripts With Python

Deep Learning Training Scripts DNN Frameworks Caffe PyTorch Tensorflow CNN Models VGG ResNet DenseNet Inception Language Modeling GatedCNN-LM Attentio

Robust, highly tunable and easy-to-integrate in-memory cache solution written in pure Python, with no dependencies.

Omoide Cache Caching doesn't need to be hard anymore. With just a few lines of code Omoide Cache will instantly bring your Python services to the next

Implementation of Memory-Efficient Neural Networks with Multi-Level Generation, ICCV 2021

Memory-Efficient Multi-Level In-Situ Generation (MLG) By Jiaqi Gu, Hanqing Zhu, Chenghao Feng, Mingjie Liu, Zixuan Jiang, Ray T. Chen and David Z. Pan

Attention for PyTorch with Linear Memory Footprint

Attention for PyTorch with Linear Memory Footprint Unofficially implements https://arxiv.org/abs/2112.05682 to get Linear Memory Cost on Attention (+

Hub is a dataset format with a simple API for creating, storing, and collaborating on AI datasets of any size.

Hub is a dataset format with a simple API for creating, storing, and collaborating on AI datasets of any size. The hub data layout enables rapid transformations and streaming of data while training models at scale. Hub is used by Google, Waymo, Red Cross, Oxford University, and Omdena.

This script allows you to retrieve all functions / variables names of a Python code, and the variables values.

Memory Extractor This script allows you to retrieve all functions / variables names of a Python code, and the variables values. How to use it ? The si

A human-readable PyTorch implementation of "Self-attention Does Not Need O(n^2) Memory"

memory_efficient_attention.pytorch A human-readable PyTorch implementation of "Self-attention Does Not Need O(n^2) Memory" (Rabe&Staats'21). def effic

Code to use Augmented Shapiro Wilks Stopping, as well as code for the paper "Statistically Signifigant Stopping of Neural Network Training"

This codebase is being actively maintained, please create and issue if you have issues using it Basics All data files are included under losses and ea

PyTorch implementation of Rethinking Positional Encoding in Language Pre-training

TUPE PyTorch implementation of Rethinking Positional Encoding in Language Pre-training. Quickstart Clone this repository. git clone https://github.com

Code release for SLIP Self-supervision meets Language-Image Pre-training

SLIP: Self-supervision meets Language-Image Pre-training What you can find in this repo: Pre-trained models (with ViT-Small, Base, Large) and code to

Python Implementation of Scalable In-Memory Updatable Bitmap Indexing

PyUpBit CS490 Large Scale Data Analytics — Implementation of Updatable Compressed Bitmap Indexing Paper Table of Contents About The Project Usage Cont

Simple embedded in memory json database

dbj dbj is a simple embedded in memory json database. It is easy to use, fast and has a simple query language. The code is fully documented, tested an

A low-impact profiler to figure out how much memory each task in Dask is using

dask-memusage If you're using Dask with tasks that use a lot of memory, RAM is your bottleneck for parallelism. That means you want to know how much m